An Introduction to the Programmable WAF of OpenResty Edge

Web Application Firewalls (WAFs) are critical to any security architecture. However, the inherent tension between their performance overhead and the flexibility of their rules has long presented a challenging trade-off in architectural design. This article explores a new paradigm: what can be achieved when a WAF transcends its role as a fixed-function security component and evolves into a programmable edge security layer?

We will delve into how OpenResty Edge leverages its Domain Specific Language (EdgeLang) and advanced compilation optimization techniques to transform complex security rules into high-performance, native gateway instructions. This approach not only delivers significant performance advantages in benchmark tests but, more importantly, empowers developers to define security policies, allowing for deep integration with core business logic. Through concrete technical implementations, performance data, and architectural principles, this article will demonstrate how a high-performance, programmable WAF can evolve from a passive defense mechanism into an intrinsic capability within the enterprise application delivery pipeline.

High-Performance Programmatic WAF

Programmatic Security: EdgeLang

Traditional WAFs are often constrained by static configuration files or inefficient scripts. OpenResty Edge introduces EdgeLang, our proprietary domain-specific language (DSL) meticulously designed for high-performance gateways. It goes beyond mere rule configuration, offering a language that is significantly more concise and efficient than manually written Lua code.

Unprecedented Extensibility: EdgeLang is not a siloed solution. It boasts powerful interoperability, enabling direct calls to custom Lua modules and code, thereby allowing you to reuse existing business logic and scripts. Furthermore, EdgeLang comes with an extensive suite of built-in pre-compiled libraries and modules, encompassing common security protection, data processing, and network operation functionalities. This design not only ensures the lightweight deployment of OpenResty Edge Nodes but also empowers developers with ample extension capabilities, allowing you to seamlessly integrate and orchestrate complex security policies within your defense infrastructure using EdgeLang.

Unleashing Performance Beyond Hand-coded Logic (Compiler Magic): You might assume flexibility comes at the cost of performance, but with EdgeLang, the opposite is true.

- Optimized Code Generation: The EdgeLang compiler transforms your rules into highly optimized Lua code, specifically designed for execution on the gateway server. Leveraging advanced algorithmic optimizations and sophisticated code generation strategies, it typically runs faster than hand-written Lua code.

- Global Rule Optimization: Our compiler employs sophisticated global optimization techniques. Instead of executing rules sequentially, it comprehensively integrates and optimizes all rules, effectively simplifying complex interactions and boosting efficiency.

Advanced Matching Techniques: With the following optimizations, the scale of your rule set no longer presents a performance bottleneck.

- Regex Merging: The compiler consolidates all regular expression rules into a single, comprehensive Deterministic Finite Automaton (DFA). This ensures that, regardless of the number of rules, the system only needs to scan request data once to identify all matching rules and their corresponding positions.

- String Trees: All constant string prefix and suffix patterns are combined into a unified, highly efficient tree-based data structure to accelerate the lookup process.
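The single-scan idea behind both bullets can be illustrated with the classic Aho-Corasick algorithm, which stores literal patterns in one shared trie and walks the input once, reporting every matching rule and its position. This is a simplified stand-in under stated assumptions: it handles only constant strings rather than full regular expressions, it uses failure links instead of a fully expanded DFA, and it is not the EdgeLang compiler's actual algorithm or output. The rule names and the functions `build_automaton` and `scan` are hypothetical.

```python
from collections import deque

def build_automaton(patterns):
    """Build an Aho-Corasick automaton from {rule_id: literal_pattern}."""
    goto = [{}]   # goto[state][char] -> next state (edges of the shared trie)
    fail = [0]    # fail[state] -> fallback state to try on a mismatch
    out = [[]]    # out[state] -> list of (rule_id, pattern_length) ending here

    # Phase 1: insert every pattern into one shared trie.
    for rule_id, pat in patterns.items():
        state = 0
        for ch in pat:
            if ch not in goto[state]:
                goto.append({})
                fail.append(0)
                out.append([])
                goto[state][ch] = len(goto) - 1
            state = goto[state][ch]
        out[state].append((rule_id, len(pat)))

    # Phase 2: breadth-first pass to compute failure links and merge outputs.
    queue = deque(goto[0].values())
    while queue:
        state = queue.popleft()
        for ch, nxt in goto[state].items():
            queue.append(nxt)
            f = fail[state]
            while f and ch not in goto[f]:
                f = fail[f]
            fail[nxt] = goto[f].get(ch, 0)
            out[nxt] = out[nxt] + out[fail[nxt]]
    return goto, fail, out

def scan(text, automaton):
    """Scan text once; return (rule_id, start_index) for every match."""
    goto, fail, out = automaton
    state, hits = 0, []
    for i, ch in enumerate(text):
        while state and ch not in goto[state]:
            state = fail[state]
        state = goto[state].get(ch, 0)
        for rule_id, length in out[state]:
            hits.append((rule_id, i - length + 1))
    return hits

# Hypothetical rules; a real rule set would also normalize and decode input.
rules = {"sqli-union": "union select", "xss-script": "<script", "path-trav": "../"}
automaton = build_automaton(rules)
print(scan("/q?a=../etc&b=<script>union select", automaton))
```

The number of passes over the request stays at one no matter how many rules are loaded, which is the property the bullets above describe; only the size of the automaton grows with the rule set.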

Fine-grained Sensitivity Control

Addressing the pervasive industry challenge of WAF false positives, we offer a multi-tiered sensitivity adjustment mechanism, empowering operations teams to adapt flexibly to diverse business scenarios.

Scenario-Based Adjustment Strategies:

- Security Drill/Penetration Testing Phase: Elevate sensitivity to strict mode, prioritizing security even if it means increased blocking.

- Business Peak Period: Lower sensitivity to balanced mode, minimizing disruption to legitimate users.

- Routine Operations Phase: Utilize standard mode, striking the optimal balance between security and availability.

By adjusting sensitivity levels in real-time, operations teams can swiftly respond across various operational stages, thereby minimizing the business impact of false positives. While not a perfect solution, it stands as the most practical strategy available today.
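One way to picture sensitivity levels is as a blocking threshold applied to an anomaly score that matched rules add up. The sketch below assumes that model. The threshold values, the `SENSITIVITY_THRESHOLDS` table, and the `decide` function are illustrative inventions, not OpenResty Edge's actual implementation or API; only the three mode names come from the text above.

```python
# Hypothetical thresholds: a lower threshold means a more sensitive WAF.
SENSITIVITY_THRESHOLDS = {
    "strict": 3,    # security drills: block even on weak evidence
    "standard": 5,  # routine operations
    "balanced": 8,  # business peaks: block only on strong evidence
}

def decide(anomaly_score: int, mode: str) -> str:
    """Return "block" when the accumulated score reaches the mode's threshold."""
    threshold = SENSITIVITY_THRESHOLDS[mode]
    return "block" if anomaly_score >= threshold else "allow"

# The same borderline request can be treated differently in each mode.
for mode in SENSITIVITY_THRESHOLDS:
    print(mode, decide(5, mode))
```

Under this assumption, switching modes changes only the threshold, not the rules themselves, which is why such a switch could take effect in real time.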

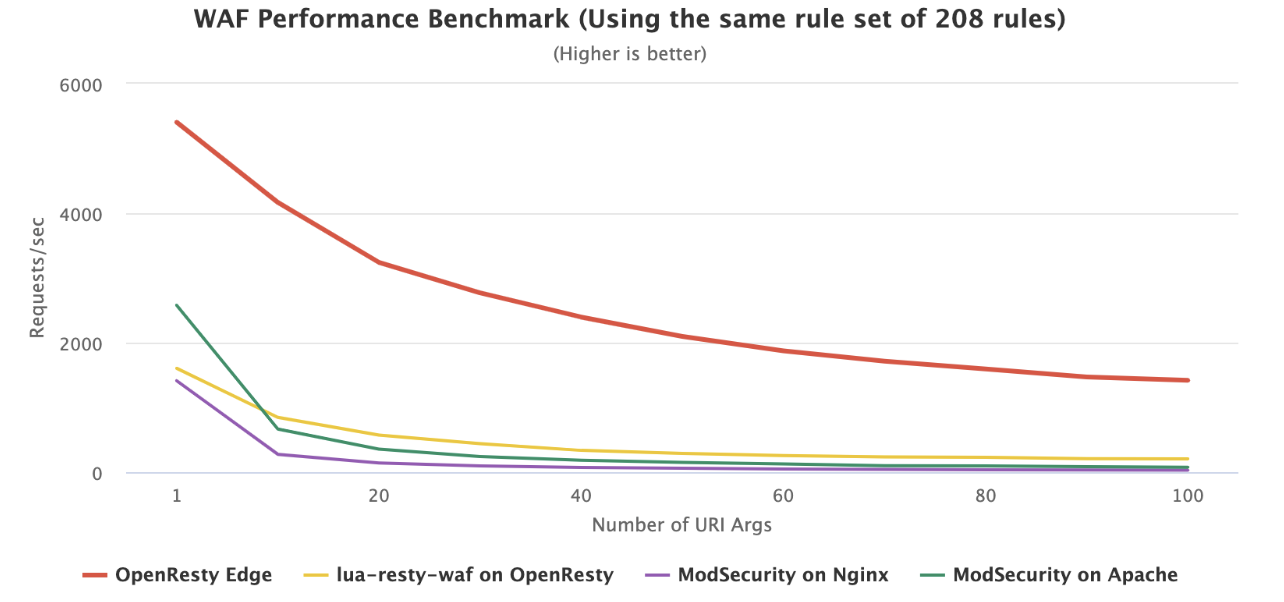

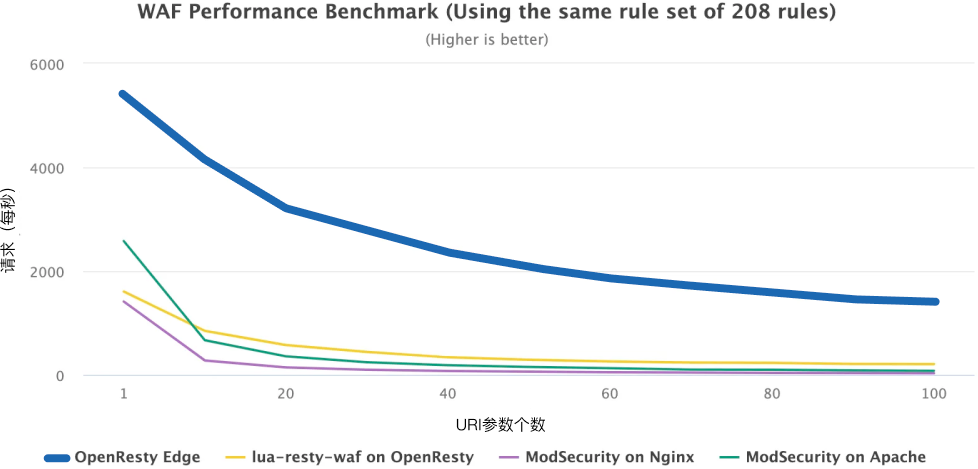

Overwhelming Performance

The data doesn’t lie. We simulated a highly realistic attack scenario: 1 Worker process, utilizing 1 CPU core, with 208 rules loaded. We measured the system’s Requests Per Second (RPS, y-axis) as the number of URI parameters (URI Args, x-axis) increased.

- Dominance from the Start: In the simplest scenario (1 URI parameter), OpenResty Edge’s throughput (~5300 RPS) is already 3.7 times that of ModSecurity for Nginx (~1400 RPS).

- A Chasm in Resilience: As request complexity grows, ModSecurity’s throughput drops steeply. When URI parameters reach 20, the traditional WAF is virtually “choked” (approaching 150 RPS, roughly a tenth of its single-parameter throughput), with server resources completely exhausted.

- Unmatched Endurance: Even under an extreme load of 100 parameters, OpenResty Edge still maintains around 1500 RPS—a figure that surpasses ModSecurity’s performance even under its lightest load.

This means:

User Experience: Speed drives retention.

Given equivalent hardware, OpenResty Edge delivers processing capabilities many times, even tens of times, greater than its competitors. This holds true even with all 208 security rules fully enabled. Consequently, you no longer have to compromise between security and speed—users benefit from banking-level security protection while still enjoying an ultra-fast and seamless loading experience.

Cost-effectiveness: Significant unit economics.

With just 1 CPU core, OpenResty Edge delivers throughput that competitors need several, or even a dozen, cores to match. For cloud-native enterprises, this translates to a drastic reduction in cluster scale and a substantial decrease in monthly AWS/GCP/Azure expenditures. By handling more traffic with fewer cores, this technical advantage converts directly into cost savings.
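The arithmetic behind these claims can be checked with the approximate figures quoted in the benchmark. The sketch below rests on two assumptions that the benchmark does not state: that OpenResty Edge's throughput only decreases as parameters are added (so its 20-parameter figure is at least its 100-parameter figure), and that a competitor's throughput scales linearly with cores. The helper `cores_to_match` is hypothetical.

```python
import math

# Approximate RPS figures quoted above (1 worker, 1 CPU core, 208 rules).
edge_rps = {1: 5300, 100: 1500}    # URI parameter count -> RPS
modsec_rps = {1: 1400, 20: 150}

# Throughput ratio in the simplest case (1 URI parameter).
ratio_light = edge_rps[1] / modsec_rps[1]

# Lower bound on the ratio at 20 parameters, using Edge's 100-parameter
# figure as a floor for its (unquoted) 20-parameter throughput.
ratio_heavy_min = edge_rps[100] / modsec_rps[20]

def cores_to_match(ratio: float) -> int:
    """Cores needed to match 1 Edge core, assuming linear scaling per core."""
    return math.ceil(ratio)

print(f"1 param: {ratio_light:.1f}x, about {cores_to_match(ratio_light)} cores")
print(f"20 params: at least {ratio_heavy_min:.0f}x, "
      f"at least {cores_to_match(ratio_heavy_min)} cores")
```

Because the inputs are rounded, the resulting ratios are approximate as well; they indicate the order of magnitude rather than exact sizing.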

Building a WAF Defense System from Scratch

Step 1: Establishing a Global Security Foundation

Before configuring protection for specific applications, we recommend first establishing a set of unified global WAF rules. This provides a foundational security baseline for all your services, capable of efficiently intercepting common, widespread attacks. This is the starting point for building your defense system.

Step 2: Enabling Dedicated Protection for Core Applications

Once global rules are established, the next step is to enable WAF functionality for your most important applications. Only after enabling it will the rules you set (whether global or application-specific) truly take effect and begin safeguarding your applications.

Enable WAF for Applications

Step 3: Granular Whitelisting to Prevent Business Disruption

After enabling WAF protection, legitimate business requests may sometimes be incorrectly blocked (false positives). To ensure business continuity and stability, you need to set up a whitelist to precisely exclude trusted requests from the blocking rules.

How to Set Application WAF Whitelist

Step 4: Achieving Ultimate Flexibility with Custom Rules

For users with complex business scenarios or advanced security needs, standard WAF rules may not be flexible enough. By using EdgeLang, you can write dynamic, fine-grained WAF rules based on any request parameter (such as headers, cookies, and URLs), enabling highly customized protection logic.

Using Edgelang to Define WAF Rules

By leveraging these guidelines, you can build a WAF defense system that is comprehensive (from global to local), adaptable (from general to customized), powerful, and flexible.
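How the four steps fit together can be sketched as a small evaluation order: nothing applies until the WAF is enabled, whitelisted requests skip blocking, and global and custom rules are then checked together. This is a conceptual model written in Python, not EdgeLang and not OpenResty Edge's configuration format. `AppPolicy`, `GLOBAL_RULES`, `evaluate`, the request fields, and the sample rules are all hypothetical, and a real whitelist may exempt requests from specific rules rather than from all of them.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

Request = Dict[str, str]                 # e.g. {"uri": ..., "client_ip": ...}
Predicate = Callable[[Request], bool]    # True when the condition holds

@dataclass
class AppPolicy:
    waf_enabled: bool = False                                    # Step 2
    whitelist: List[Predicate] = field(default_factory=list)     # Step 3
    custom_rules: List[Predicate] = field(default_factory=list)  # Step 4

# Step 1: a global baseline shared by every application (toy rules).
GLOBAL_RULES: List[Predicate] = [
    lambda req: "union select" in req.get("uri", "").lower(),
    lambda req: "<script" in req.get("uri", "").lower(),
]

def evaluate(req: Request, policy: AppPolicy) -> str:
    if not policy.waf_enabled:
        return "allow"   # no rule takes effect until the WAF is enabled
    if any(trusted(req) for trusted in policy.whitelist):
        return "allow"   # trusted requests are excluded from blocking
    if any(rule(req) for rule in GLOBAL_RULES + policy.custom_rules):
        return "block"
    return "allow"

policy = AppPolicy(
    waf_enabled=True,
    # Trust a known internal scanner address (illustrative value).
    whitelist=[lambda req: req.get("client_ip") == "10.0.0.5"],
    # Business-aware rule: /admin requires an internal marker header.
    custom_rules=[lambda req: req.get("uri", "").startswith("/admin")
                  and req.get("x-internal") != "1"],
)

print(evaluate({"uri": "/search?q=1 UNION SELECT x"}, policy))
print(evaluate({"uri": "/admin"}, policy))
```

Checking the whitelist before the rules keeps false-positive handling separate from rule authoring, so a trusted source can be exempted without editing the rules that misfired.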

Beyond WAF: A Unified Architecture

Traditional cybersecurity approaches often treat the WAF (Web Application Firewall) as an isolated perimeter defense. However, in today’s complex business environments, relying solely on a single “security checkpoint” is no longer sufficient. OpenResty Edge is designed around this observation: it is a converged platform that tightly integrates Private CDN, API Gateway, and WAF capabilities.

Let’s explore the power of this unified approach through a vivid analogy.

Layer 1: CDN — “The Highway and Traffic Hub”

CDN (Content Delivery Network) represents the outermost layer of the entire architecture and is the closest point of contact for global users. Its core responsibilities are “acceleration” and “traffic distribution.”

- Position: The primary entry point for traffic, the initial point of user access.

- Responsibilities:

  - Acceleration and Caching: It acts like globally distributed “edge caches,” storing static resources such as images and CSS. These resources are delivered directly to users, eliminating the need to repeatedly fetch them from the distant “origin server” (i.e., origin pull), significantly boosting access speed.

  - Traffic Scrubbing: Leveraging its massive bandwidth resources, CDN can absorb and mitigate large-scale L3/L4 layer DDoS attacks, much like a floodgate, thereby safeguarding the availability of backend services.

- Relationship: In this architecture, the CDN serves as the WAF’s “foundation” and “first line of defense,” absorbing the initial wave of volumetric attacks and enabling more granular security detection.

Layer 2: WAF — The “Airport Security Checkpoint”

After traffic undergoes initial distribution and acceleration by the CDN, it proceeds to the detailed security inspection phase handled by the WAF (Web Application Firewall).

- Location: Positioned immediately after the CDN, or directly embedded within CDN nodes, and always before the API Gateway.

- Responsibilities:

  - Security Inspection: The WAF’s primary mission is straightforward: to scrutinize every request for potential threats. It doesn’t concern itself with the destination (which business service you’re trying to reach), but solely with the safety and integrity of the request itself. It rigorously inspects requests for “bombs” (SQL injection), “knives” (XSS attacks), or “forged documents” (malicious cookies).

- Relationship: The WAF serves as a critical line of defense for business security, ensuring that only legitimate and harmless requests are permitted to proceed to downstream business processes.

Layer 3: API Gateway — The “Boarding Gate & Control Tower”

“Passengers” (requests) that have successfully cleared the security inspection will eventually arrive at the API Gateway, which acts as the primary “entry point” to specific business systems.

- Location: Situated after the WAF, it functions as the unified ingress for all backend microservices.

- Responsibilities:

  - Authentication (Auth): “Who are you?” Verifies the identity of the request using mechanisms such as OAuth/JWT.

  - Routing (Route): “Which service do you need?” Based on the request content, it precisely directs the request to the appropriate backend microservice.

  - Fine-grained Control (Quota): “How many times can your ticket access the VIP lounge?” Implements detailed traffic management and quota enforcement for different users or APIs.

- Relationship: The API Gateway acts as the central orchestration hub for business logic, managing request identity, routing, and advanced control.

When these three distinct layers are no longer independent, sequential systems, but are instead integrated within a single software solution, the architectural advantages become evident in several key areas:

Minimizing Network Latency Between Components: In a distributed architecture, traffic often undergoes multiple network hops between various devices or services like CDNs, WAFs, and API Gateways. Each hop introduces additional network I/O overhead and increased latency. In a unified model, all processing (caching, security detection, routing) is completed within the context of a single Worker Process. This means data processing avoids traversing network boundaries, thereby eliminating this inherent source of latency, which is particularly critical for latency-sensitive applications.
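The difference can be pictured as three function calls inside one process instead of three network hops. The sketch below models the layers as plain Python functions chained in a single `handle` call. It is a conceptual illustration only: the function names, the `CACHE` and `ROUTES` tables, the token check, and the toy inspection rule are all hypothetical and do not reflect OpenResty Edge internals.

```python
from typing import Callable, Dict, Optional

Request = Dict[str, str]

# Illustrative in-memory state standing in for an edge cache and a route table.
CACHE: Dict[str, str] = {"/logo.png": "<cached image bytes>"}
ROUTES: Dict[str, Callable[[Request], str]] = {
    "/api/orders": lambda req: "orders service response",
}

def cdn_layer(req: Request) -> Optional[str]:
    """Layer 1: serve static content from the edge cache when possible."""
    return CACHE.get(req["uri"])

def waf_layer(req: Request) -> bool:
    """Layer 2: return True when the request passes inspection (toy rule)."""
    return "<script" not in req.get("query", "").lower()

def gateway_layer(req: Request) -> str:
    """Layer 3: authenticate, then route to a backend handler."""
    if req.get("token") != "valid-token":
        return "401 unauthorized"
    handler = ROUTES.get(req["uri"])
    return handler(req) if handler else "404 not found"

def handle(req: Request) -> str:
    """All three layers run as in-process calls; no hop between them."""
    cached = cdn_layer(req)
    if cached is not None:
        return cached
    if not waf_layer(req):
        return "403 blocked"
    return gateway_layer(req)

print(handle({"uri": "/logo.png"}))
print(handle({"uri": "/api/orders", "query": "q=<script>"}))
print(handle({"uri": "/api/orders", "token": "valid-token"}))
```

In a deployment built from separate products, each arrow between these functions would instead be a network round trip with its own serialization and connection handling.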

Simplified Technology Stack and Operational Model: Managing multiple heterogeneous systems incurs significant operational overhead, including independent configuration, monitoring, upgrading, and troubleshooting. A unified technology stack means that the operations team only needs to manage and maintain one platform to oversee the full lifecycle of edge traffic, thereby reducing the system’s overall complexity and labor costs.

The core of a converged architecture lies in deep integration, not merely piling on features. It unifies capabilities such as traffic processing and security protection on a shared data plane, inherently optimizing the data path and greatly simplifying the operational model.

From Defensive Tools to Core Assets

By building your security infrastructure on OpenResty Edge, you gain not just a high-performance WAF, but a programmable edge security layer deeply integrated with your business logic:

- By compiling security rules into native code via EdgeLang, even the most complex protection logic will not become a performance bottleneck, ensuring the rapid responsiveness of core business operations.

- By unifying security, gateway, traffic management, and other functions within a programmable software stack, you enable a more agile business with fewer components and lower operational complexity.

- Breaking free from the constraints of static rule sets, you can write dynamic security policies that are aware of business context, upgrading defense from reactive to proactive strategies.

Future application competition is no longer just about “richer features,” but about “faster, safer, and more reliable delivery.” OpenResty Edge’s programmable security is the new “business assurance engine” for this era. Choosing it may seem like just a technology stack update, but it represents your first step toward transforming security from an “external constraint” into an “intrinsic capability.”

What is OpenResty Edge

OpenResty Edge is our all-in-one gateway software for microservices and distributed traffic architectures. It combines traffic management, private CDN construction, API gateway, security, and more to help you easily build, manage, and protect modern applications. OpenResty Edge delivers industry-leading performance and scalability to meet the demanding needs of high-concurrency, high-load scenarios. It supports traffic scheduling for containerized applications, such as those running on K8s, and can manage massive numbers of domains, making it easy to meet the needs of large websites and complex applications.

If you like this tutorial, please subscribe to this blog site and/or our YouTube channel. Thank you!

About The Author

Yichun Zhang (GitHub handle: agentzh) is the original creator of the OpenResty® open-source project and the CEO of OpenResty Inc.

Yichun is one of the earliest advocates and leaders of “open-source technology”. He has worked at internationally renowned tech companies such as Cloudflare and Yahoo!. He is a pioneer of “edge computing”, “dynamic tracing” and “machine coding”, with over 22 years of programming and 16 years of open source experience. Yichun is well-known in the open-source space as the project leader of OpenResty®, adopted by more than 40 million global website domains.

OpenResty Inc., the enterprise software start-up founded by Yichun in 2017, has customers from some of the biggest companies in the world. Its flagship product, OpenResty XRay, is a non-invasive profiling and troubleshooting tool that significantly enhances and utilizes dynamic tracing technology. And its OpenResty Edge product is a powerful distributed traffic management and private CDN software product.

As an avid open-source contributor, Yichun has contributed more than a million lines of code to numerous open-source projects, including Linux kernel, Nginx, LuaJIT, GDB, SystemTap, LLVM, Perl, etc. He has also authored more than 60 open-source software libraries.