Anatomy of a 15x Performance Drop: A 90k to 6k QPS Root Cause Analysis with OpenResty XRay

Under specific conditions, a microsecond-latency reverse proxy component lost 93% of its performance while conventional monitoring metrics misleadingly reported “all normal.” This represents a critical observability blind spot and a precursor to systemic cascading failures. This article details a deep optimization effort that transformed a 15x performance deficit into an anticipated 10% overhead. Beyond mere fixes, our focus is on how OpenResty XRay can be used to uncover the underlying bottlenecks related to compiler behavior and connection management. In modern high-concurrency architectures, a deep command of engineering intricacies directly defines the stability limits of infrastructure.

The 93% Performance Drop: Uncovering the Observability Blind Spot

In any mature engineering team, performance validation is typically a deterministic and well-defined process. This is especially true for core infrastructure components like gateways, whose architecture (Client → OpenResty Gateway → Upstream) is a thoroughly validated and mature pattern. Theoretically, a gateway performing only lightweight forwarding should add a predictable and extremely low performance overhead.

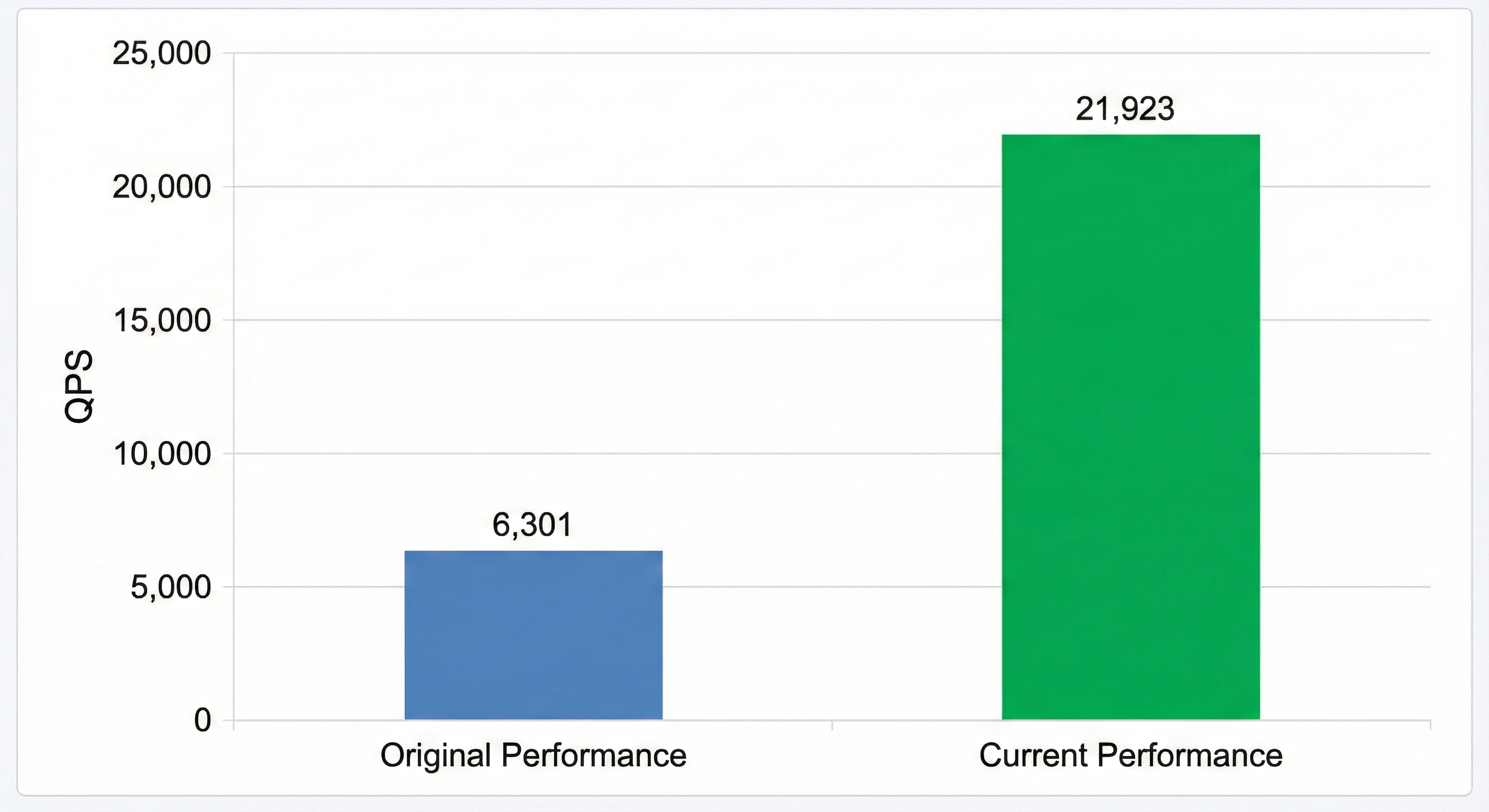

However, during benchmark testing of the new gateway version, we encountered a drastic performance degradation:

- Upstream Service Baseline (Direct Connection Test): 94,706 QPS

- New Gateway (Reverse Proxy): 6,301 QPS

Roughly 93% of the throughput had vanished: a seemingly simple reverse proxy made the service about 15 times slower. The system appeared stable on the surface: there were no error logs, and the configuration aligned with conventional expectations. Clearly, the root cause lay hidden beyond the reach of our usual observability tools.

OpenResty XRay Uncovers Connection Reuse Issues

We used OpenResty XRay for CPU performance analysis on our gateway, generating a flame graph with the lj-c-on-cpu tool.

The CPU flame graph prominently displayed two unusually wide sections: connect() and close() system calls.

In high-performance network services, these operations should typically be almost invisible “background noise.” Their abnormal prominence pointed to a critical issue: upstream connections were not being reused. Every incoming request was triggering a brand new TCP handshake and teardown. In high-concurrency scenarios, this amounted to a “connection storm,” where the kernel spent excessive time establishing and terminating connections instead of processing actual data.

With the comprehensive call stack provided by OpenResty XRay, we quickly pinpointed the root cause:

- Connect Path: ngx_http_proxy_handler → ngx_http_upstream_connect → connect()

- Close Path: ngx_http_upstream_finalize_request → ngx_close_connection → close()

The diagnosis was clear: we had overlooked the keepalive directive in our upstream configuration block. This was a seemingly minor oversight, yet one capable of triggering a cascading failure in a production environment.

We immediately enabled upstream keepalive. The effect was immediate: performance rose from 6,301 QPS to 21,923 QPS, a 3.48x improvement.
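For reference, a minimal sketch of what enabling upstream keepalive can look like. The upstream name `backend`, the server address, the listen port, and the pool size of 32 are placeholders for illustration, not values from our gateway. The two proxy directives follow the nginx documentation for the `keepalive` directive, which asks for HTTP/1.1 and an empty `Connection` header when proxying HTTP over kept-alive upstream connections.

```nginx
upstream backend {                       # hypothetical upstream name
    server 127.0.0.1:8080;               # placeholder upstream address
    keepalive 32;                        # max idle upstream connections cached per worker (illustrative)
}

server {
    listen 80;

    location / {
        proxy_pass http://backend;
        proxy_http_version 1.1;          # upstream keepalive needs HTTP/1.1
        proxy_set_header Connection "";  # do not forward "Connection: close" to the upstream
    }
}
```

The right pool size depends on the number of workers and the upstream's own connection limits, so treat the value above as a starting point to measure against rather than a recommendation.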

The spikes for connect() and close() had now vanished from the flame graph. The first performance bottleneck was resolved, but the investigation was not over.

Comparative Analysis Reveals Compilation Option Issues

Despite a significant recovery in performance, experience suggested that the situation was not that simple. Comparing the current version’s performance (21,923 QPS) with the previous stable version (24,115 QPS), we found that a gap of approximately 10% still remained.

Where did this 10% gap come from? While not as alarming as a 15x difference, for infrastructure striving for ultimate performance, any unexplained degradation is unacceptable. Comparing the flame graphs of the two versions side by side revealed two differences:

- The flame graph of the current version is “deeper”: The call stack levels are significantly more numerous than in the stable version.

- Increased frequency of specific function calls: For instance, functions like ngx_http_gunzip_body_filter appear frequently in the call chain, whereas they are almost entirely absent in the stable version.

Examining the code for ngx_http_gunzip_body_filter:

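    static ngx_int_t
    ngx_http_gunzip_body_filter(ngx_http_request_t *r, ngx_chain_t *in)
    {
        int                     rc;
        ngx_uint_t              flush;
        ngx_chain_t            *cl;
        ngx_http_gunzip_ctx_t  *ctx;

        ctx = ngx_http_get_module_ctx(r, ngx_http_gunzip_filter_module);

        if (ctx == NULL || ctx->done) {
            /* no gunzip context, or decompression already finished:
             * hand the chain to the next filter as a tail call */
            return ngx_http_next_body_filter(r, in);
        }

        /* ... remainder of the function omitted ... */
    }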

Theoretically, Nginx/OpenResty’s filter chain makes extensive use of tail calls. When compiled with optimizations, these calls should be turned into plain jumps by the compiler, resulting in virtually no additional overhead and no extra stack frames. However, the current flame graph suggested that this optimization was not taking place.

Impact of Compiler Options on Instruction Execution Performance

Investigating this lead, we examined two versions of the build scripts and discovered:

The current development build was being compiled with the -O0 optimization flag.

The reason was that during development, compiler optimizations were temporarily disabled to facilitate debugging a specific issue. However, after completing feature development, we neglected to revert to optimized compilation settings.

-O0 implies:

- Function inlining is disabled: small function calls cannot be inlined

- Tail call optimization is ineffective: call stacks become deeper

- Redundant code is not eliminated: more unnecessary instructions are executed

- Low register usage efficiency: more memory accesses

These seemingly minor differences in compiler behavior, when accumulated, accounted for the remaining gap of roughly 10%.

We restored the compilation options to the standard -O2, rebuilt, and deployed.

The results showed complete performance recovery, on par with the stable version. At this point, both performance issues were completely resolved.

Engineering Post-mortem of a 15x QPS Discrepancy

This performance investigation, which saw QPS drop from 94K to 6K, exposed two profound challenges in modern complex systems:

The Drift of “Hidden Context”: Performance isn’t solely determined by code logic; it also hinges on the “hidden context” of the build and runtime environments, such as compilation options, kernel parameters, and runtime configurations. Changes in these contexts are often imperceptible during Code Review but can lead to catastrophic consequences. The missing keepalive configuration and the leftover -O0 compilation option in this case are prime examples.

Overcoming Observational Blind Spots: When issues delve into the kernel and compiler layers, traditional logging and metrics often fall silent. This case demonstrates that, to tackle system-level bottlenecks, we require a tool with deep visibility, like OpenResty XRay:

- End-to-End Sampling: Tracing from the application layer down to the kernel to quickly identify high-level bottlenecks (e.g., connection storms).

- Anomaly Pattern Recognition: Rapidly identifying unexpected connect/close paths using flame graphs.

- Baseline Comparison: Pinpointing subtle, microscopic differences (e.g., compiler behavior).

Performance problems are rarely clear-cut bugs; instead, they often manifest as subtle efficiency degradation. In complex system architectures, relying solely on intuition or guesswork is inefficient. With OpenResty XRay, we can establish comprehensive observability from application logic to kernel calls, empowering us to measure, analyze, and optimize those “gray areas” hidden deep within the system, thereby ensuring long-term stability and exceptional performance.

What is OpenResty XRay

OpenResty XRay is a dynamic-tracing product that automatically analyzes your running applications to troubleshoot performance problems, behavioral issues, and security vulnerabilities with actionable suggestions. Under the hood, OpenResty XRay is powered by our Y language targeting various runtimes like Stap+, eBPF+, GDB, and ODB, depending on the context.

About The Author

Yichun Zhang (GitHub handle: agentzh) is the original creator of the OpenResty® open-source project and the CEO of OpenResty Inc.

Yichun is one of the earliest advocates and leaders of “open-source technology”. He has worked at many internationally renowned tech companies, such as Cloudflare and Yahoo!. He is a pioneer of “edge computing”, “dynamic tracing” and “machine coding”, with over 22 years of programming and 16 years of open source experience. Yichun is well-known in the open-source space as the project leader of OpenResty®, adopted by more than 40 million global website domains.

OpenResty Inc., the enterprise software start-up founded by Yichun in 2017, has customers from some of the biggest companies in the world. Its flagship product, OpenResty XRay, is a non-invasive profiling and troubleshooting tool that significantly enhances and utilizes dynamic tracing technology. Its OpenResty Edge product is a powerful distributed traffic management and private CDN software product.

As an avid open-source contributor, Yichun has contributed more than a million lines of code to numerous open-source projects, including the Linux kernel, Nginx, LuaJIT, GDB, SystemTap, LLVM, Perl, etc. He has also authored more than 60 open-source software libraries.