When JSON Becomes the Invisible Bottleneck in OpenResty Services

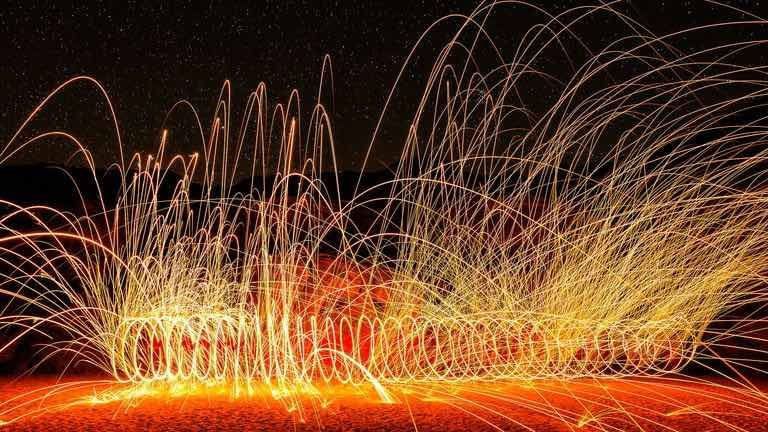

In the world of OpenResty performance tuning, one type of overhead exists like “dark matter”: it is ubiquitous, yet frequently falls off our troubleshooting radar. That culprit is JSON encoding and decoding. Whether acting as an API gateway parsing upstream responses, assembling complex request bodies in the business logic layer, or retrieving configurations from shared memory (lua_shared_dict), cjson.encode and cjson.decode permeate nearly every request path. While the CPU time of a single call is measured in microseconds, these tiny costs stack up rapidly under high-concurrency peaks, eventually manifesting as unavoidable “hot zones” on a flame graph.

Performance bottlenecks rarely explode out of nowhere. A more typical scenario involves CPU utilization climbing in lockstep with linear growth in business traffic. Teams often reflexively optimize SQL queries, tune connection pools, or refactor business logic, only to encounter diminishing returns. Only then do engineers begin to scrutinize those persistent JSON hot zones that have been hiding in plain sight.

Where Exactly Is the Performance Ceiling?

Recognizing JSON as a bottleneck is merely the first step. The more critical question is: Is this bottleneck a result of engineering implementation, or have we hit the fundamental limit of our infrastructure?

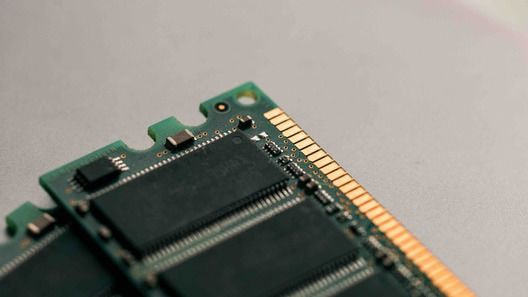

In the OpenResty ecosystem, lua-cjson is the de facto standard. It runs as a C extension inside the LuaJIT runtime, so its throughput ceiling is dictated by the underlying C implementation and by the cost of crossing the Lua/C boundary through the Lua C API. Regardless of how much you streamline your Lua-level code, the performance ceiling remains fixed as long as the underlying encoding/decoding efficiency stays unchanged.

From an engineering perspective, we must accept a hard truth: No amount of “clever workarounds” at the business layer can bypass the performance red line established by the foundational infrastructure.

Common “Workaround” Strategies and Their Limitations

Faced with the CPU overhead imposed by JSON processing, seasoned engineers typically explore several workarounds. However, each path comes with its own set of trade-offs.

Caching serialization results is often the first solution that comes to mind. This approach yields immediate results for configuration data or highly repetitive JSON payloads. In reality, however, most request and response bodies are dynamic. This leads to poor cache hit rates, while the caching logic itself adds complexity to state management and data consistency.
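As a rough illustration of the caching approach, the sketch below memoizes the encoded form of a rarely changing table in a per-worker Lua table. The encode_cached helper, the cache key, and the version counter are hypothetical names introduced for this example; they are not part of lua-cjson. It assumes the caller can supply a version that changes whenever the data does.

```lua
local cjson = require "cjson"

-- Hypothetical per-worker cache: key -> { version = ..., json = ... }
local encoded_cache = {}

-- Returns the JSON text for `tbl` plus a boolean telling whether it was a
-- cache hit. Re-encodes only when `version` differs from the cached one.
local function encode_cached(key, version, tbl)
    local entry = encoded_cache[key]
    if entry and entry.version == version then
        return entry.json, true
    end

    local json = cjson.encode(tbl)
    encoded_cache[key] = { version = version, json = json }
    return json, false
end

-- Usage: the second call with the same version skips cjson.encode entirely.
local config = { upstream = "backend-a" }
local first, hit1 = encode_cached("routing", 1, config)
local second, hit2 = encode_cached("routing", 1, config)
```

The version argument is where the consistency burden shows up: if a change to the table is not accompanied by a new version, stale JSON is served. For dynamic request bodies there is usually no such stable key, which is why hit rates tend to be poor.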

Minimizing encoding/decoding frequency represents another form of architectural discipline. A common practice is to pass Lua tables internally and only perform serialization at the system’s boundaries. While this is a solid pattern, it fails to address the root cause: external API interactions and cross-system data exchanges. These boundary-level operations are essential requirements that cannot be bypassed.
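The boundary-only pattern can be sketched roughly as follows. The stage functions (price, accept), the handle function, and the order fields are hypothetical and exist only to show the shape: internal stages exchange plain Lua tables, and JSON text appears exactly once on the way in and once on the way out. The sketch uses cjson.safe, which returns nil plus an error message instead of raising on bad input.

```lua
local cjson = require "cjson.safe"

-- Hypothetical internal stages: they pass Lua tables, never JSON text.
local function price(order)
    order.total = order.qty * order.unit_price
    return order
end

local function accept(order)
    order.status = "accepted"
    return order
end

-- Boundary handler: one decode on entry, one encode on exit.
local function handle(body)
    local order, err = cjson.decode(body)
    if type(order) ~= "table" then
        return nil, "invalid JSON body: " .. tostring(err)
    end
    if type(order.qty) ~= "number" or type(order.unit_price) ~= "number" then
        return nil, "missing qty or unit_price"
    end
    return cjson.encode(accept(price(order)))
end

local response = handle('{"qty":2,"unit_price":5}')
```

Even in this minimal form, the two calls at the boundary remain, which is the point made above: the pattern removes redundant conversions but not the essential ones.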

As for horizontal scaling, it is the simplest yet most expensive route. While it may alleviate immediate pressure, it does nothing to improve single-core execution efficiency. As traffic doubles, costs scale linearly—hardly an elegant engineering solution.

In essence, these strategies are designed to “circumvent the bottleneck” rather than “raise the ceiling.”

Why Custom Development or “Hacking” Existing Libraries is a Bad Idea

Teams with deep technical expertise often consider a different route: “Can we optimize lua-cjson ourselves, or switch to a third-party library that claims to be faster?”

While technically enticing, the engineering challenges involved are frequently underestimated. lua-cjson is deeply integrated with LuaJIT’s runtime characteristics. Extracting more performance out of it requires more than just C proficiency; it demands an intimate understanding of LuaJIT’s memory model, garbage collection (GC) behavior, and FFI calling conventions. This typically falls outside the core expertise of most application development teams.

Furthermore, there is the “hidden cost” of long-term maintenance. Maintaining a fork of a low-level library means taking full responsibility for security patches and upstream compatibility. For most teams focused on development velocity, this is rarely an investment with a justifiable ROI.

Seeking a More Direct Breakthrough

If the bottleneck resides at the infrastructure layer, the ideal solution should be applied at that same level.

A more strategic approach is to replace the existing lua-cjson directly with a higher-performance, fully API-compatible alternative, without touching a single line of business logic.

The appeal of this “transparent replacement” is its immediate path to value: performance gains translate directly into lower CPU utilization without the need for code refactoring or adding architectural complexity. The only remaining question is: does an implementation exist that delivers both extreme performance and flawless compatibility with the OpenResty ecosystem?

Infrastructure-Level Optimization: jit.cjson

Leveraging a deep understanding of LuaJIT and lua-cjson, OpenResty Inc. has released a systematically optimized version: jit.cjson.

Its value proposition is straightforward: it serves as a drop-in replacement for lua-cjson, with a fully compatible API and highly consistent behavior. Official benchmarks under specific workloads show encoding up to 18x as fast as other open-source lua-cjson modules, and decoding up to 6x as fast. It also adds capabilities such as support for JSON comments and for trailing commas in arrays and objects.

As engineers, we treat those multipliers as upper-bound references. Actual gains will vary depending on data structure complexity, the ratio of serialization to deserialization, and concurrency levels. However, for services where JSON processing has become a performance bottleneck, gains on this scale are significant enough to fundamentally alter infrastructure scaling decisions.
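One way to see where a particular service lands within those bounds is to time encode and decode separately on a representative payload, using whichever module require "cjson" resolves to, once before and once after the swap. The following is a minimal sketch under that assumption; the bench helper, the iteration count, and the sample table are placeholders introduced here, and a real measurement should use bodies captured from actual traffic.

```lua
local cjson = require "cjson"

-- Returns the average CPU time of fn(arg) in microseconds per call.
local function bench(fn, arg, iterations)
    for _ = 1, 1000 do fn(arg) end      -- warm-up
    local start = os.clock()            -- CPU time in seconds
    for _ = 1, iterations do fn(arg) end
    return (os.clock() - start) / iterations * 1e6
end

-- Placeholder payload; substitute a body captured from your own traffic.
local sample = {
    id = 42,
    name = "example",
    tags = { "a", "b", "c" },
    nested = { ok = true, score = 9.5 },
}
local text = cjson.encode(sample)

local N = 200000
print(string.format("encode: %.3f us/op", bench(cjson.encode, sample, N)))
print(string.format("decode: %.3f us/op", bench(cjson.decode, text, N)))
```

Reporting the two numbers separately mirrors the encode/decode split in the official figures and makes it easier to weigh them by the service's own ratio of serialization to deserialization.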

Why the Official Solution is the Safer Bet

First, the global perspective. As the creators of the entire ecosystem, OpenResty Inc. possesses an unparalleled understanding of the boundaries where the LuaJIT runtime interacts with underlying C libraries. Their optimizations aren’t just isolated patches; they are the result of a profound understanding of the entire execution stack.

Second, a transparent approach to performance data. The official numbers separate encoding from decoding (“up to 18x” versus “up to 6x”) instead of folding everything into a single headline multiplier, which aligns with sound engineering logic. Any marketing that promises fixed gains regardless of the actual workload is disingenuous. This level of transparency allows architects to manage expectations effectively.

Third, controllable risk boundaries. The primary cost of adopting a new library isn’t the learning curve; it’s regression testing. Because jit.cjson maintains full API compatibility, existing require "cjson" calls remain untouched. This downgrades the implementation risk from “massive code refactoring” to a simple “canary configuration change.”

Fourth, commercial-grade engineering. Distribution via standard package managers, continuous security updates, and comprehensive support for both http and stream contexts signal a long-term, industrial-grade product, not just an experimental optimization demo tossed onto GitHub.

Minimal Adoption Cost

jit.cjson remains API-compatible with cjson, so upgrading may not require changes to your existing business code. For concrete replacement steps, see the jit.cjson manual.

This means you can reap significant performance gains without touching legacy business code that has been running stably for years. This “low-friction, high-reward” profile is precisely what senior performance engineers look for when evaluating new technologies.
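Since regression testing is the main adoption cost, a small round-trip check run against whichever module require "cjson" resolves to can act as a first smoke test during a canary rollout. The sketch below is one possible shape for such a check; deep_equal, roundtrip_ok, and the fixtures are hypothetical and should be replaced with payloads from your own services. It uses only the encode and decode calls already named in this article.

```lua
local cjson = require "cjson"

-- Recursively compares two Lua values; tables are compared by content.
local function deep_equal(a, b)
    if type(a) ~= type(b) then return false end
    if type(a) ~= "table" then return a == b end
    for k, v in pairs(a) do
        if not deep_equal(v, b[k]) then return false end
    end
    for k in pairs(b) do
        if a[k] == nil then return false end
    end
    return true
end

-- Hypothetical fixtures; use real request and response bodies in practice.
local fixtures = {
    { user = "alice", roles = { "admin", "dev" }, active = true },
    { items = { { id = 1, price = 9.5 }, { id = 2, price = 0.25 } } },
    { text = "quotes \" and UTF-8 bytes \195\169" },
}

-- True when encoding and then decoding yields an equivalent value.
local function roundtrip_ok(value)
    return deep_equal(cjson.decode(cjson.encode(value)), value)
end

for i, fixture in ipairs(fixtures) do
    assert(roundtrip_ok(fixture), "round-trip mismatch in fixture " .. i)
end
```

A check like this only covers value equivalence. Byte-for-byte output comparisons (key order, number formatting) would need a separate test if downstream consumers depend on them.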

Summary

In OpenResty services, JSON serialization and deserialization are often overlooked CPU sinks. As the room for optimization at the application logic level diminishes, the real performance ceiling is frequently dictated by these low-level components. The value proposition of jit.cjson is straightforward: it boosts encoding and decoding efficiency without requiring a single change to your business code.

If you are evaluating ways to reduce JSON-related CPU overhead, the following documentation can help you determine if jit.cjson is the right fit for your environment:

The jit.cjson manual covers installation procedures, dependencies, and integration requirements for the OpenResty runtime.

Consider reaching out if your current system is experiencing any of the following challenges:

JSON operations consistently appear as major hotspots on your flame graphs.

Horizontal scaling mitigates pressure, but costs are scaling linearly with traffic.

Previous attempts to optimize underlying libraries led to issues with correctness or high maintenance overhead.

Feel free to click “Contact Us” at the bottom right. Our engineering team is available to provide detailed performance assessments and deployment guidance. You can also explore other proprietary libraries from OpenResty Inc., all of which are engineered for high-performance execution, predictable resource modeling, and production-grade stability.

About The Author

Yichun Zhang (GitHub handle: agentzh) is the original creator of the OpenResty® open-source project and the CEO of OpenResty Inc.

Yichun is one of the earliest advocates and leaders of “open-source technology”. He has worked at internationally renowned tech companies such as Yahoo! and Cloudflare. He is a pioneer of “edge computing”, “dynamic tracing”, and “machine coding”, with over 22 years of programming experience and 16 years of open-source experience. Yichun is well-known in the open-source space as the project leader of OpenResty®, which has been adopted by more than 40 million global website domains.

OpenResty Inc., the enterprise software start-up founded by Yichun in 2017, has customers from some of the biggest companies in the world. Its flagship product, OpenResty XRay, is a non-invasive profiling and troubleshooting tool that significantly enhances and utilizes dynamic tracing technology. Its OpenResty Edge product is a powerful distributed traffic management and private CDN software product.

As an avid open-source contributor, Yichun has contributed more than a million lines of code to numerous open-source projects, including the Linux kernel, Nginx, LuaJIT, GDB, SystemTap, LLVM, and Perl. He has also authored more than 60 open-source software libraries.