How OpenResty and Nginx Allocate and Manage Memory

Our OpenResty® open source web platform is known for its high execution speed and small memory footprint. We have users running complex OpenResty applications inside embedded devices like robots, and people have observed significant reductions in memory usage when migrating applications from other technology stacks like Java, NodeJS, and PHP. Still, we sometimes want to optimize a particular OpenResty application that uses a lot of memory or leaks memory. Such applications may contain bugs or deficiencies in their Lua code, Nginx configurations, 3rd-party Lua libraries, or Nginx modules.

To effectively debug and optimize memory usage or memory leak issues, it helps to have a good understanding of how OpenResty, Nginx, and LuaJIT allocate and manage memory under the hood. Our commercial product, OpenResty XRay, can also automatically analyze and troubleshoot a wide range of memory usage issues in any OpenResty application without any modifications or compromises, even in an online production environment. This post is the first in a series of articles explaining memory allocation and management in OpenResty, Nginx, and LuaJIT, with real-world sample data and graphs provided by OpenResty XRay.

We start with an introduction to the system level memory usage breakdown for Nginx processes and then take a look at memory allocated and managed by various different allocators on the application level.

On The System Level

In modern operating systems, processes request and use virtual memory at the highest level. The operating system manages the virtual memory for each process and maps the virtual memory pages that are actually used to physical memory pages backed by hardware (like DDR4 RAM sticks). It is important to note that a process may request a lot of virtual memory space but use only a small portion of it. For example, a process can usually claim, say, 2TB of virtual memory from the operating system even though the system may only have 8GB of RAM. This works without any problems as long as the process does not write to too many memory pages of this huge virtual memory space. It is this potentially small portion of the virtual memory space that actually gets mapped to physical memory devices, and it is what we really care about. So never panic when you see large virtual memory usage (usually named VIRT) in tools like ps and top.
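The gap between claimed and touched memory is easy to observe from a small script. The following is a minimal sketch in Python, assuming a Linux system with /proc mounted. The 256 MiB region size and the helper names status_kb and touch_pages are illustrative choices for this example only and have nothing to do with OpenResty or Nginx internals.

```python
import mmap

PAGE = mmap.PAGESIZE


def status_kb(field, text=None):
    """Return a field such as VmSize or VmRSS, in kB.

    Reads /proc/self/status (Linux-specific) unless a status dump is
    passed in as text.
    """
    if text is None:
        with open("/proc/self/status") as f:
            text = f.read()
    for line in text.splitlines():
        if line.startswith(field + ":"):
            return int(line.split()[1])
    raise KeyError(field)


def touch_pages(region, n_pages):
    """Write one byte into each of the first n_pages pages of region."""
    for i in range(n_pages):
        region[i * PAGE] = 1
    return n_pages


if __name__ == "__main__":
    size = 256 * 1024 * 1024          # claimed virtual memory (assumed size)
    region = mmap.mmap(-1, size)      # anonymous mapping, nothing touched yet
    print("claimed: VmSize", status_kb("VmSize"), "kB, VmRSS", status_kb("VmRSS"), "kB")
    touch_pages(region, 1024)         # write to the first 1024 pages only
    print("touched: VmSize", status_kb("VmSize"), "kB, VmRSS", status_kb("VmRSS"), "kB")
    region.close()
```

When run directly, VmSize should grow by roughly the full region size as soon as the mapping is created, while VmRSS should grow only by about the number of pages written to.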

The small portion of the virtual memory which is actually used (meaning data has been written to it) is usually called RSS or resident memory. When the system is running out of physical memory and some part of the resident memory gets swapped out to the disk[1], this swapped-out portion is no longer part of the resident memory and becomes the “swapped memory” (or “swap” for short).

Many tools can provide the size of virtual memory, resident memory, and swapped memory for any process (including OpenResty’s nginx worker processes). OpenResty XRay can render a pie graph like the one below for an nginx worker process.

In this graph, the full pie represents the whole virtual memory space claimed by the nginx worker process. The Resident Memory component indicates the resident memory usage, that is, the memory pages actually used. Finally, the Swap component, which is not shown here, represents the swapped-out portion.
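The same three-way split can be approximated for any process from the kernel's own accounting. The sketch below assumes Linux and the VmSize, VmRSS, and VmSwap fields of /proc/<pid>/status; the function name memory_breakdown and the "untouched" label are made up for this example, and this is not necessarily how OpenResty XRay computes its graph.

```python
def memory_breakdown(status_text):
    """Split a /proc/<pid>/status dump into virtual, resident, swap, and untouched kB."""
    fields = {}
    for line in status_text.splitlines():
        name, _, rest = line.partition(":")
        parts = rest.split()
        # Keep only the "<number> kB" style fields.
        if len(parts) == 2 and parts[1] == "kB" and parts[0].isdigit():
            fields[name] = int(parts[0])
    virtual = fields["VmSize"]
    resident = fields.get("VmRSS", 0)
    swapped = fields.get("VmSwap", 0)
    return {
        "virtual": virtual,                          # the full pie
        "resident": resident,                        # pages currently in RAM
        "swap": swapped,                             # pages swapped out
        "untouched": virtual - resident - swapped,   # rough estimate of the rest
    }


if __name__ == "__main__":
    # Inspect the current process; substitute a worker PID for "self" as needed.
    with open("/proc/self/status") as f:
        for name, kb in memory_breakdown(f.read()).items():
            print(f"{name:>10}: {kb} kB")
```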

As mentioned above, we usually care most about the Resident Memory component, which is the main focus of most memory usage optimizations. It is also quite alarming if the Swap component shows up, since it means that physical memory is no longer sufficient and the operating system may get overloaded by swapping memory pages in and out. We should still pay attention to the unused portion of the virtual memory space, however. It may be that we have configured some Nginx shared memory zones to be far too big, and they may bite us badly someday when they actually get filled up with data (in which case they become part of the Resident Memory component).

We will cover resident memory in more detail in another article. Next let’s take a look at memory usage on the application level.

On The Application Level

It is much more useful to inspect memory usage further on the application level. In particular, we care about how much memory currently used is allocated by the LuaJIT memory allocator, how much is used by the Nginx core and its modules, and how much by Nginx’s shared memory zones.

Consider the following pie graph generated by OpenResty XRay when analyzing an unmodified nginx worker process of an OpenResty application.

The Glibc Allocator

The Glibc Allocator component in the pie graph indicates the total memory size allocated by the Glibc library, which is the GNU implementation of the standard C runtime library. This allocator is usually invoked on the C language level through function calls like malloc(), realloc(), and calloc(). It is also commonly known as the system allocator. Note that the Nginx core and its modules also allocate memory via this system allocator (an important exception is Nginx’s shared memory zones, which we discuss below). Some Lua libraries with C components or FFI calls may sometimes invoke the system allocator directly, but it is more common for them to use LuaJIT’s allocator. The OpenResty or Nginx build may also choose a different C runtime library than Glibc, like musl libc. We will extend our discussion of system allocators and Nginx’s allocator in a dedicated article.
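To make the idea of reaching the system allocator through a foreign function interface concrete, here is a minimal sketch that calls malloc(), realloc(), and free() from Python via ctypes. It assumes a Unix-like system where ctypes.CDLL(None) exposes the C library already loaded into the process; the helper name roundtrip is hypothetical. A Lua library doing the same through LuaJIT's FFI would likewise bypass LuaJIT's own allocator, and the resulting blocks would have to be freed by hand.

```python
import ctypes

# Symbols of the C library already loaded into this process (Unix-like systems).
libc = ctypes.CDLL(None)
libc.malloc.restype = ctypes.c_void_p
libc.malloc.argtypes = [ctypes.c_size_t]
libc.realloc.restype = ctypes.c_void_p
libc.realloc.argtypes = [ctypes.c_void_p, ctypes.c_size_t]
libc.free.restype = None
libc.free.argtypes = [ctypes.c_void_p]


def roundtrip(payload):
    """Copy a non-empty bytes payload through a malloc()ed block and back."""
    if not payload:
        raise ValueError("payload must be non-empty")
    block = libc.malloc(len(payload))
    if not block:
        raise MemoryError("malloc() failed")
    ctypes.memmove(block, payload, len(payload))
    # Grow the block; realloc() preserves the existing contents.
    bigger = libc.realloc(block, len(payload) * 2)
    if not bigger:
        libc.free(block)
        raise MemoryError("realloc() failed")
    result = ctypes.string_at(bigger, len(payload))
    libc.free(bigger)  # nothing frees this block for us
    return result
```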

Nginx Shared Memory

The pie’s Nginx Shm Loaded component is the total size of the actually used portion of the shared memory (or “shm”) zones allocated by the Nginx core or its modules. Shared memory zones are allocated via the mmap() system call directly and thus bypass the standard C library’s allocator completely. Nginx shared memory zones are shared among all of its worker processes. Common examples are those defined by standard Nginx directives like ssl_session_cache, proxy_cache_path, limit_req_zone, limit_conn_zone, and upstream’s zone. This component also includes shared memory zones defined by Nginx’s 3rd-party modules like OpenResty’s core component ngx_http_lua_module. OpenResty applications usually define their own shared memory zones through this module’s lua_shared_dict directive in Nginx configuration files. We will cover the memory issues of Nginx shm zones in great detail in another dedicated article.
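The mechanism of a zone that is mapped once and then seen by every forked worker can be sketched in a few lines. This is only an illustration under stated assumptions: it relies on a Unix-like system with os.fork(), uses Python's anonymous mmap (which is created as a shared mapping by default on Unix), and the function name share_counter is invented for the example. It does not reproduce Nginx's slab allocator or locking.

```python
import mmap
import os
import struct


def share_counter(increment=3):
    """Map one shared page, let a forked child update it, and read it back."""
    zone = mmap.mmap(-1, mmap.PAGESIZE)   # shared anonymous mapping on Unix
    zone[:8] = struct.pack("<Q", 0)       # a 64-bit counter at offset 0
    pid = os.fork()
    if pid == 0:
        # Child "worker": it sees the same physical pages as the parent.
        try:
            (value,) = struct.unpack("<Q", zone[:8])
            zone[:8] = struct.pack("<Q", value + increment)
        finally:
            os._exit(0)
    os.waitpid(pid, 0)                    # wait for the child to finish
    (value,) = struct.unpack("<Q", zone[:8])
    zone.close()
    return value
```

The parent reads back the value written by the child, which would not happen with ordinary heap memory, since that is copied on write after fork().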

You can check out the following articles for more detailed discussions on OpenResty and Nginx’s shared memory zones:

- How OpenResty and Nginx Shared Memory Zones Consume RAM

- Memory Fragmentation in OpenResty and Nginx’s Shared Memory Zones

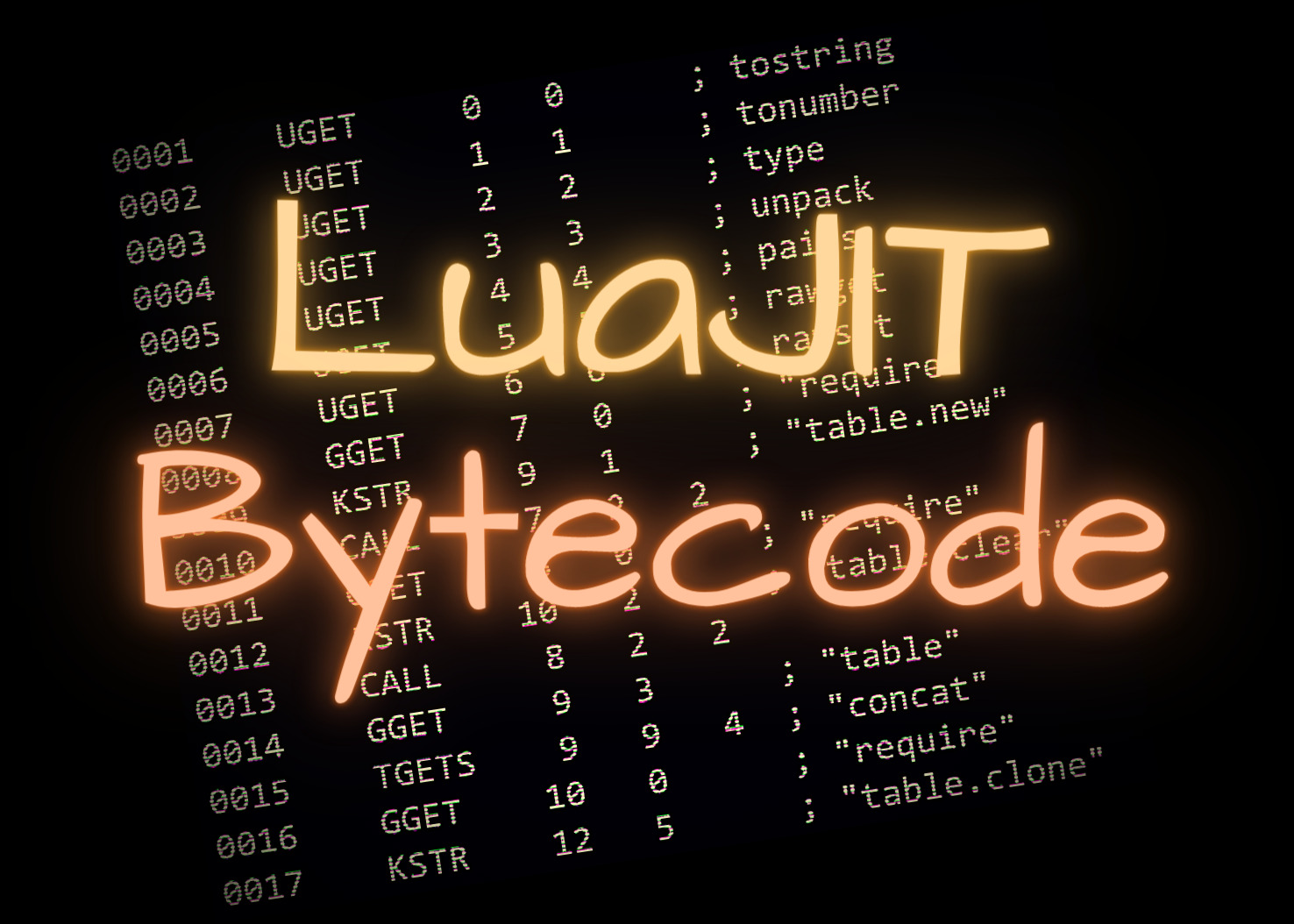

LuaJIT Allocator

The HTTP/Stream LuaJIT Allocator components in the pie represent the total memory size allocated and managed by LuaJIT’s builtin allocator, one for the LuaJIT virtual machine (VM) instance in the Nginx HTTP subsystem and one for the VM instance in the Nginx Stream subsystem. LuaJIT also has a build option[2] to use the system allocator, but it is mostly used with special testing and debugging tools (like Valgrind and AddressSanitizer).

Lua strings, tables, functions, cdata objects, userdata objects, upvalues, and so on are all allocated by this allocator. Primitive Lua values like integers[3], numbers, light userdata values, and booleans, on the other hand, do not require any dynamic allocations. C-level memory blocks allocated by LuaJIT’s ffi.new() calls in Lua code are also allocated by LuaJIT’s own allocator. All the memory blocks allocated by this allocator are managed by LuaJIT’s garbage collector (GC), and therefore it is not the user’s responsibility to free those memory blocks when they are no longer needed[4]. Naturally, these memory objects are also called “GC objects”. We will elaborate on this in another article.
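The caveat about lingering references applies to any garbage-collected runtime. The sketch below uses Python's collector as a stand-in for LuaJIT's, purely as an analogy: the Payload class, the cache table, and the function name are all hypothetical. It shows that an object held by a long-lived table survives collection until that last reference is removed.

```python
import gc
import weakref


class Payload:
    """Stand-in for a GC-managed object such as a Lua table."""


def is_collected_after(drop_reference):
    """Return True if the object was reclaimed once collection has run."""
    cache = {}                    # a long-lived table, e.g. a module-level cache
    obj = Payload()
    cache["key"] = obj
    probe = weakref.ref(obj)      # observes the object without keeping it alive
    del obj                       # the local reference is gone...
    if drop_reference:
        del cache["key"]          # ...and so is the one held by the cache
    gc.collect()
    return probe() is None
```

Calling is_collected_after(False) models the typical leak: the collector is working correctly, but the cache still references the object.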

Program Code Sections

The pie’s Text Segments component corresponds to the total size of the .text segments of both the executable and the dynamically loaded libraries mapped into the virtual memory space of the target process. The .text segments usually contain executable machine code.
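A rough figure for this component can be derived from the kernel's mapping table. The sketch below assumes the Linux /proc/<pid>/maps format and sums all file-backed mappings that carry the execute permission. Treating those mappings as the text segments is an approximation made for this example, and the function name executable_mapping_bytes is hypothetical.

```python
def executable_mapping_bytes(maps_text):
    """Sum the sizes of file-backed executable mappings in a /proc/<pid>/maps dump."""
    total = 0
    for line in maps_text.splitlines():
        # Columns: address range, perms, offset, device, inode, optional path.
        parts = line.split(None, 5)
        if len(parts) < 6:
            continue  # anonymous mapping without a path column
        addr_range, perms, path = parts[0], parts[1], parts[5]
        if "x" not in perms or not path.startswith("/"):
            continue  # skip non-executable and pseudo mappings like [vdso]
        start, end = (int(x, 16) for x in addr_range.split("-"))
        total += end - start
    return total


if __name__ == "__main__":
    with open("/proc/self/maps") as f:
        print(executable_mapping_bytes(f.read()), "bytes of executable mappings")
```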

System Stacks

Finally, the System Stacks component in the pie refers to the total allocated size of all the system stacks (or “C stacks”) in the target process. Each operating system (OS) thread has its own system stack, so multiple stacks only occur when multiple OS threads are used (note that OpenResty’s “light threads” created by the ngx.thread.spawn API function are completely different from such system-level threads). Nginx worker processes usually have only one system thread unless OS thread pools are configured (via the aio threads configuration directive).
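The one-stack-per-OS-thread rule can be illustrated with a short sketch. It uses Python's threading module, whose threads are real OS threads, and an explicit per-thread stack size so the requested stack space is known. The 512 KiB size and the function name stacks_while_running are assumptions made for this example, and the actual stack sizes in an Nginx worker depend on the system and on the thread pool configuration.

```python
import threading


def stacks_while_running(n_workers, stack_bytes=512 * 1024):
    """Start n_workers OS threads; return (stack count, worker stack bytes requested)."""
    previous = threading.stack_size(stack_bytes)   # applies to threads created next
    release = threading.Event()
    started = []
    try:
        for _ in range(n_workers):
            worker = threading.Thread(target=release.wait)
            worker.start()
            started.append(worker)
        alive = sum(1 for w in started if w.is_alive())
        # One stack per live worker thread, plus the calling thread's own stack.
        return alive + 1, alive * stack_bytes
    finally:
        release.set()                     # let the workers finish
        for worker in started:
            worker.join()
        threading.stack_size(previous)    # restore the earlier setting
```

With no extra threads there is exactly one stack, which matches the usual single-threaded Nginx worker process.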

Other System Allocators

Some users may hook up 3rd-party memory allocators with their OpenResty or Nginx processes. Common examples are tcmalloc and jemalloc, which speed up system allocator calls (like malloc()). They help speed up small and naive malloc() calls in some Nginx 3rd-party modules, Lua C modules, or some C libraries (OpenSSL included!), but they are not very useful for the parts of the software which already use a good allocator like Nginx’s memory pools and LuaJIT’s builtin allocator. The use of such “add-on” allocator libraries introduces new complexity and problems, which we will cover in great detail in a future post.

Used or Not Used

The application-level memory usage breakdown we just introduced gets a little complicated when we ask whether each component counts used virtual memory pages or unused ones. Only the Nginx Shm Loaded component in the pie graph covers exclusively the virtual memory pages actually used. The other components include both kinds. Fortunately, memory allocated by the Glibc allocator and the LuaJIT allocator is usually used anyway, so the distinction does not make a big difference most of the time.

For Traditional Nginx Servers

Traditional Nginx servers are just a strict subset of OpenResty applications. Their users still see system allocator memory and Nginx shared memory zone usage, among other things. OpenResty XRay can still be used to directly inspect and analyze such server processes, even in production. Of course, you will not see any Lua-related components if you have not compiled OpenResty’s Lua modules into your Nginx build.

Conclusion

This article is the first in a series of posts explaining how OpenResty and Nginx allocate and manage memory, in the hope of helping optimize the memory usage of applications based on them. This post gives an overview of how memory is used and allocated on the highest level. In subsequent articles in the same series, we will take a closer look at each allocator and memory management facility in a dedicated article. Stay tuned!

Further Readings

- How OpenResty and Nginx Shared Memory Zones Consume RAM

- Memory Fragmentation in OpenResty and Nginx’s Shared Memory Zones

About The Author

Yichun Zhang (GitHub handle: agentzh) is the original creator of the OpenResty® open-source project and the CEO of OpenResty Inc.

Yichun is one of the earliest advocates and leaders of “open-source technology”. He worked at many internationally renowned tech companies, such as Cloudflare and Yahoo!. He is a pioneer of “edge computing”, “dynamic tracing” and “machine coding”, with over 22 years of programming and 16 years of open source experience. Yichun is well-known in the open-source space as the project leader of OpenResty®, adopted by more than 40 million global website domains.

OpenResty Inc., the enterprise software start-up founded by Yichun in 2017, has customers from some of the biggest companies in the world. Its flagship product, OpenResty XRay, is a non-invasive profiling and troubleshooting tool that significantly enhances and utilizes dynamic tracing technology. And its OpenResty Edge product is a powerful distributed traffic management and private CDN software product.

As an avid open-source contributor, Yichun has contributed more than a million lines of code to numerous open-source projects, including Linux kernel, Nginx, LuaJIT, GDB, SystemTap, LLVM, Perl, etc. He has also authored more than 60 open-source software libraries.

Translations

We provide a Chinese translation for this article on blog.openresty.com ourselves. We also welcome interested readers to contribute translations in other natural languages as long as the full article is translated without any omissions. We thank them in advance.


[1] Modern Android operating systems do support swapping memory pages out to memory, but with those pages compressed, which still saves physical memory space.

[2] This build option is -DLUAJIT_USE_SYSMALLOC. But never use it in production!

[3] By default, the LuaJIT runtime uses a single number representation for both integers and numbers, namely double-precision floating-point numbers. The user can still pass the build option -DLUAJIT_NUMMODE=2 to enable the additional 32-bit integer representation at the same time.

[4] But it is still our responsibility to make sure all references to those useless objects are properly removed.