Limit Request Rate by Custom Keys in OpenResty Edge

Today I’d like to demonstrate another feature of OpenResty Edge: limiting the request rate by custom keys.

Sometimes clients send requests too fast, for example during a denial-of-service attack. In such cases, we should limit the request rate to protect both the gateway servers and the origin servers from getting overloaded.

Add a request rate limiting page rule for the sample application

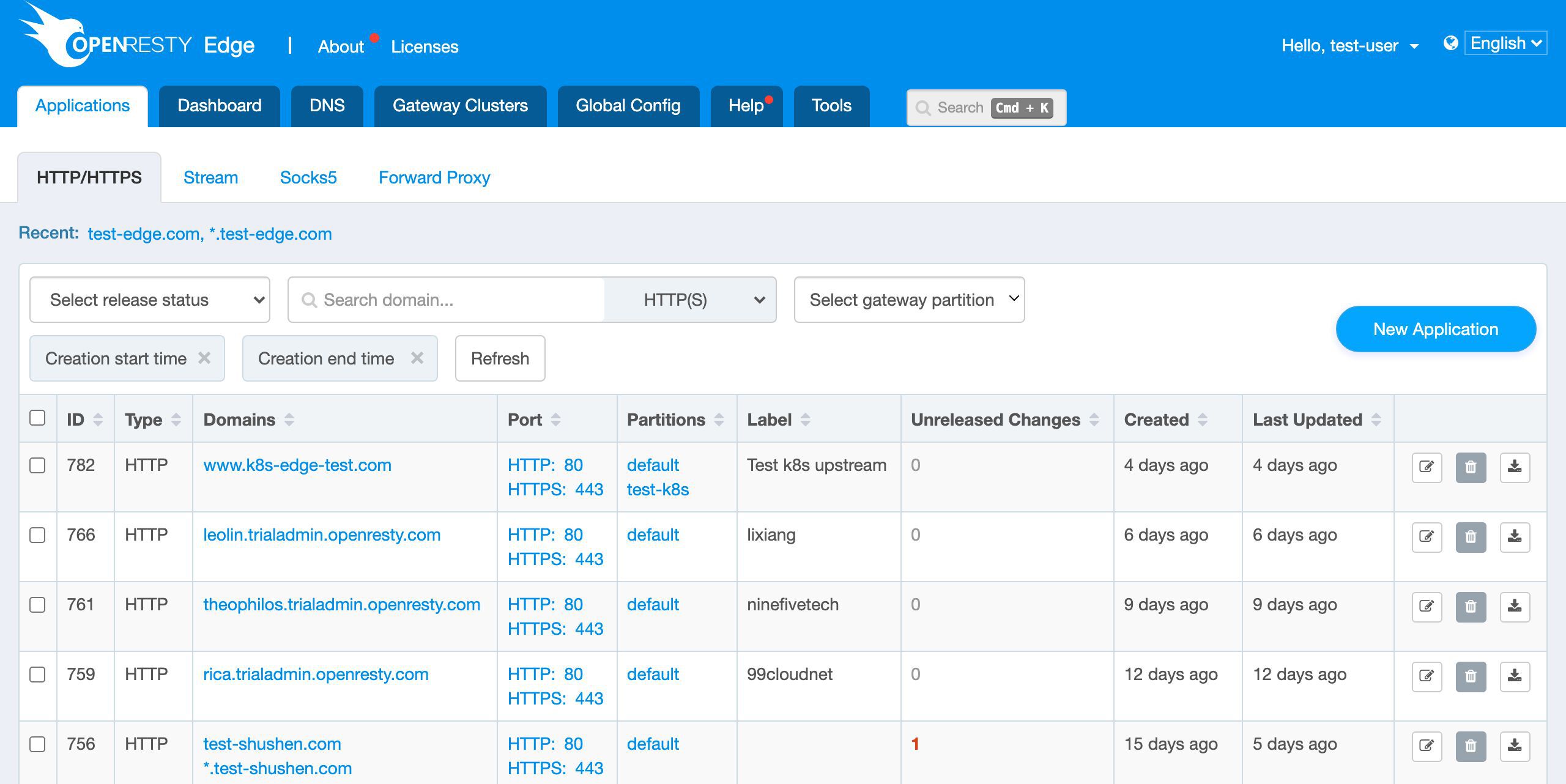

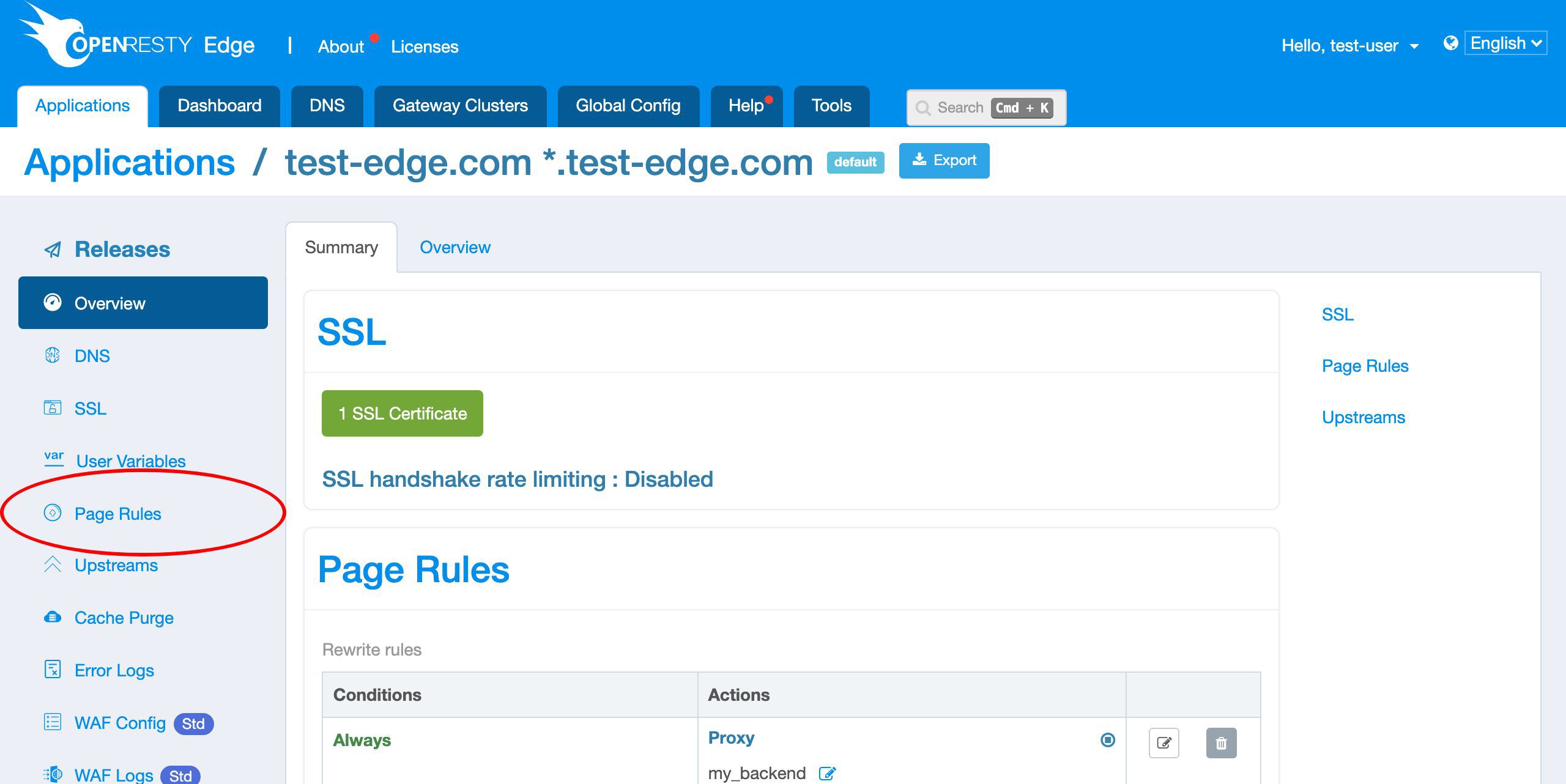

Let’s go to the web console of OpenResty Edge. This is our sample deployment of the console; every user has their own deployment.

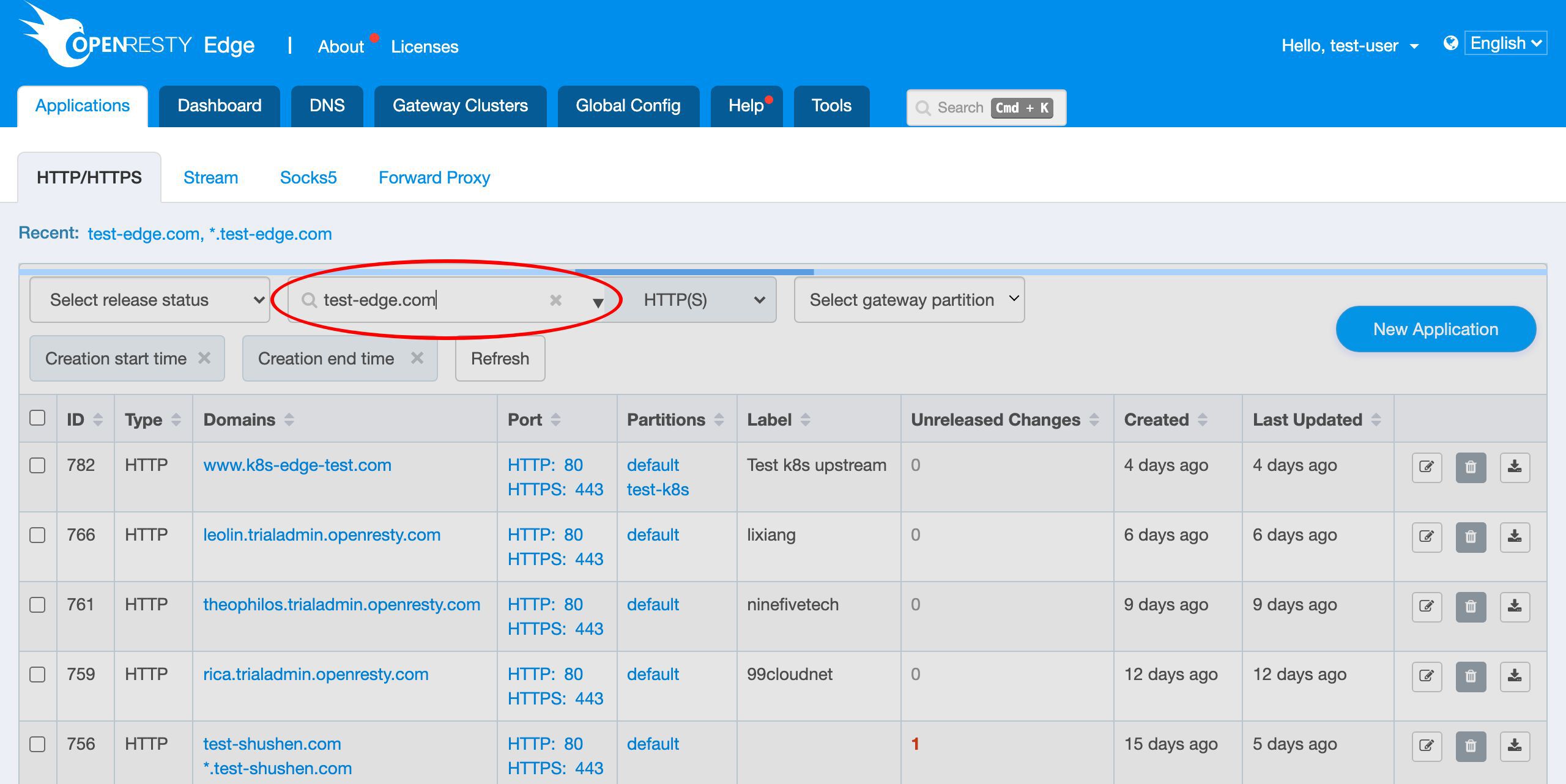

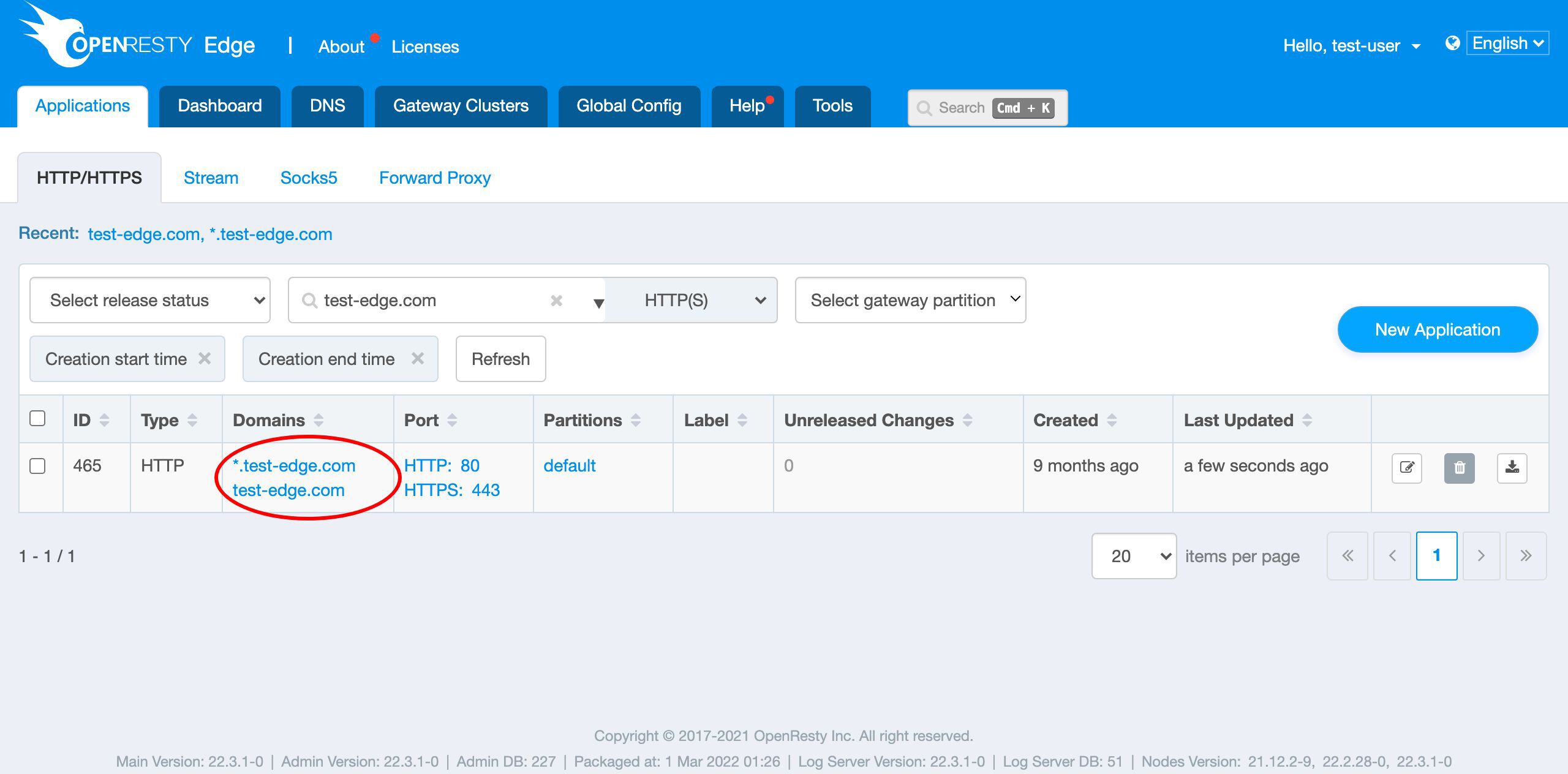

We continue to use our sample application, test-edge.com.

Enter the application.

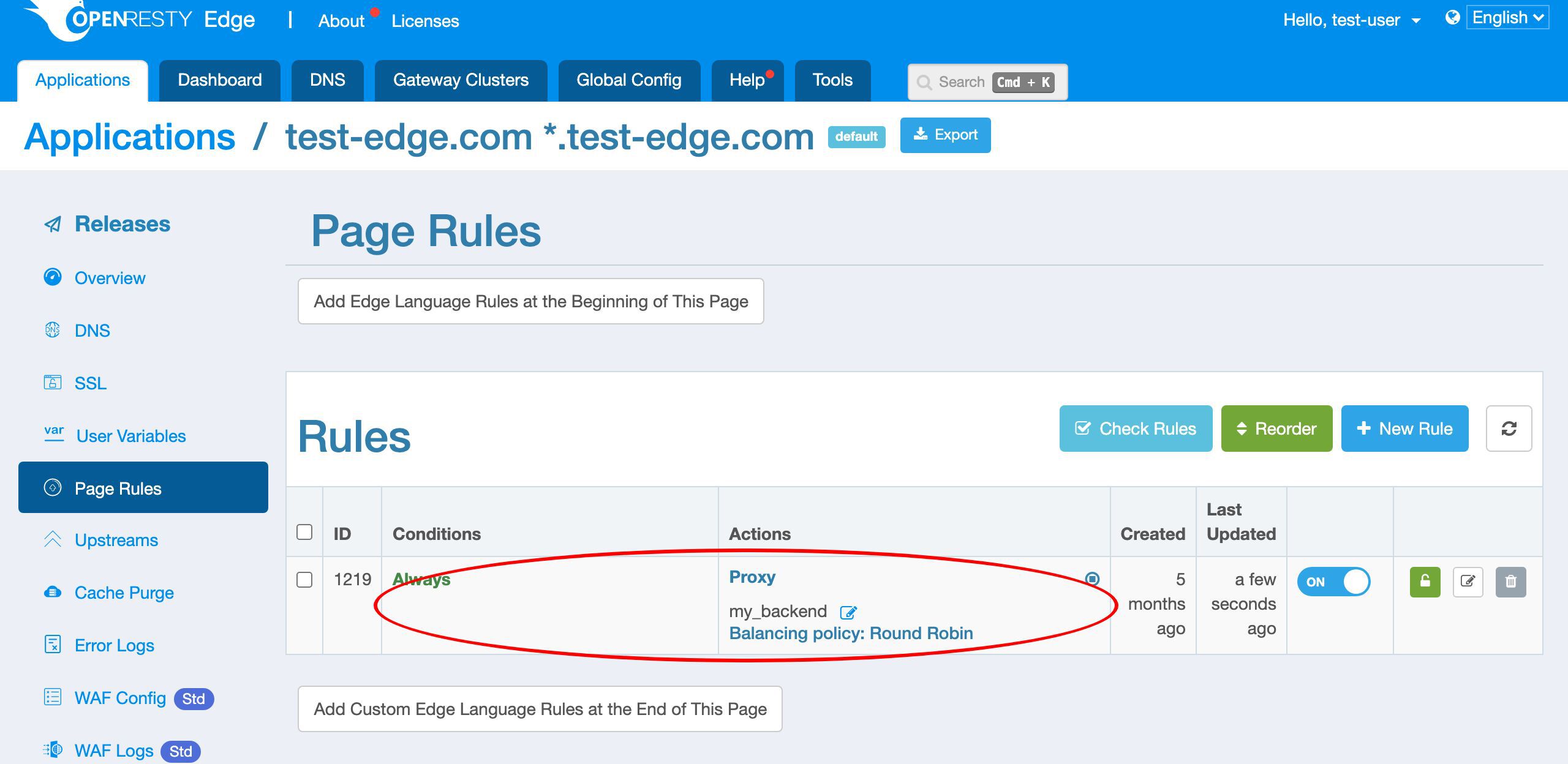

We already have a page rule defined.

This page rule sets up a reverse proxy to a predefined upstream. No request rate limiting is defined yet.

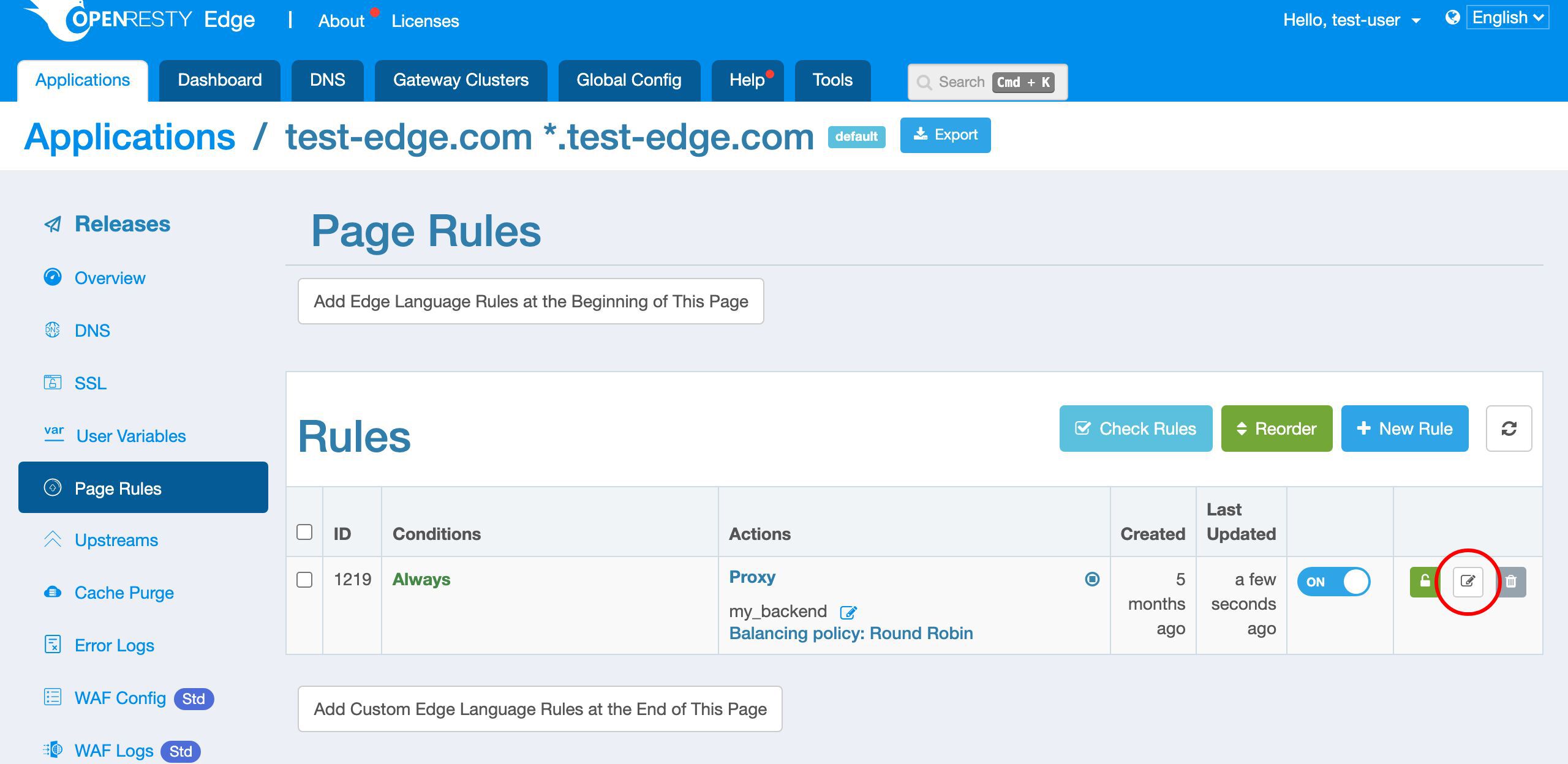

Now let’s edit the existing page rule to add rate limiting.

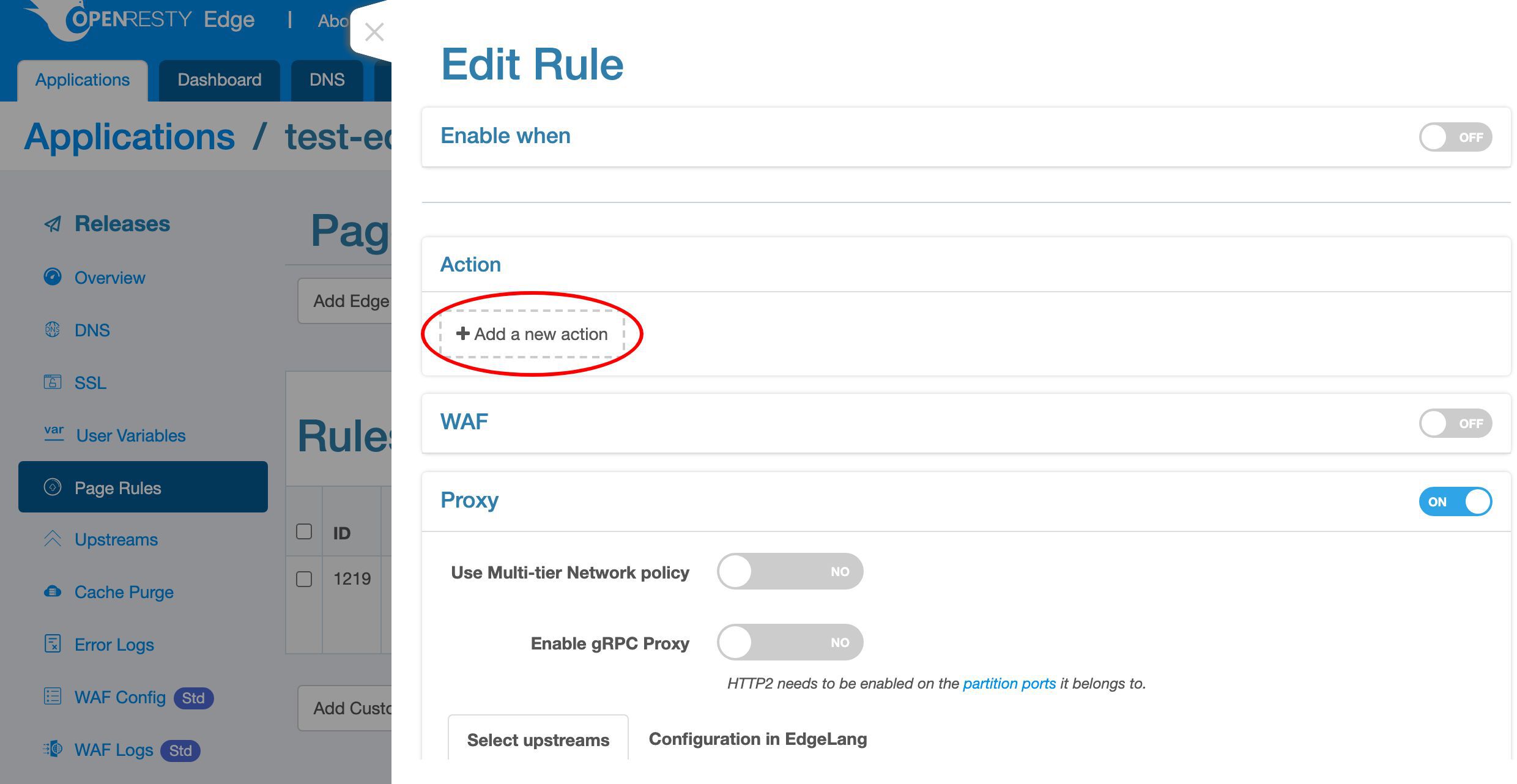

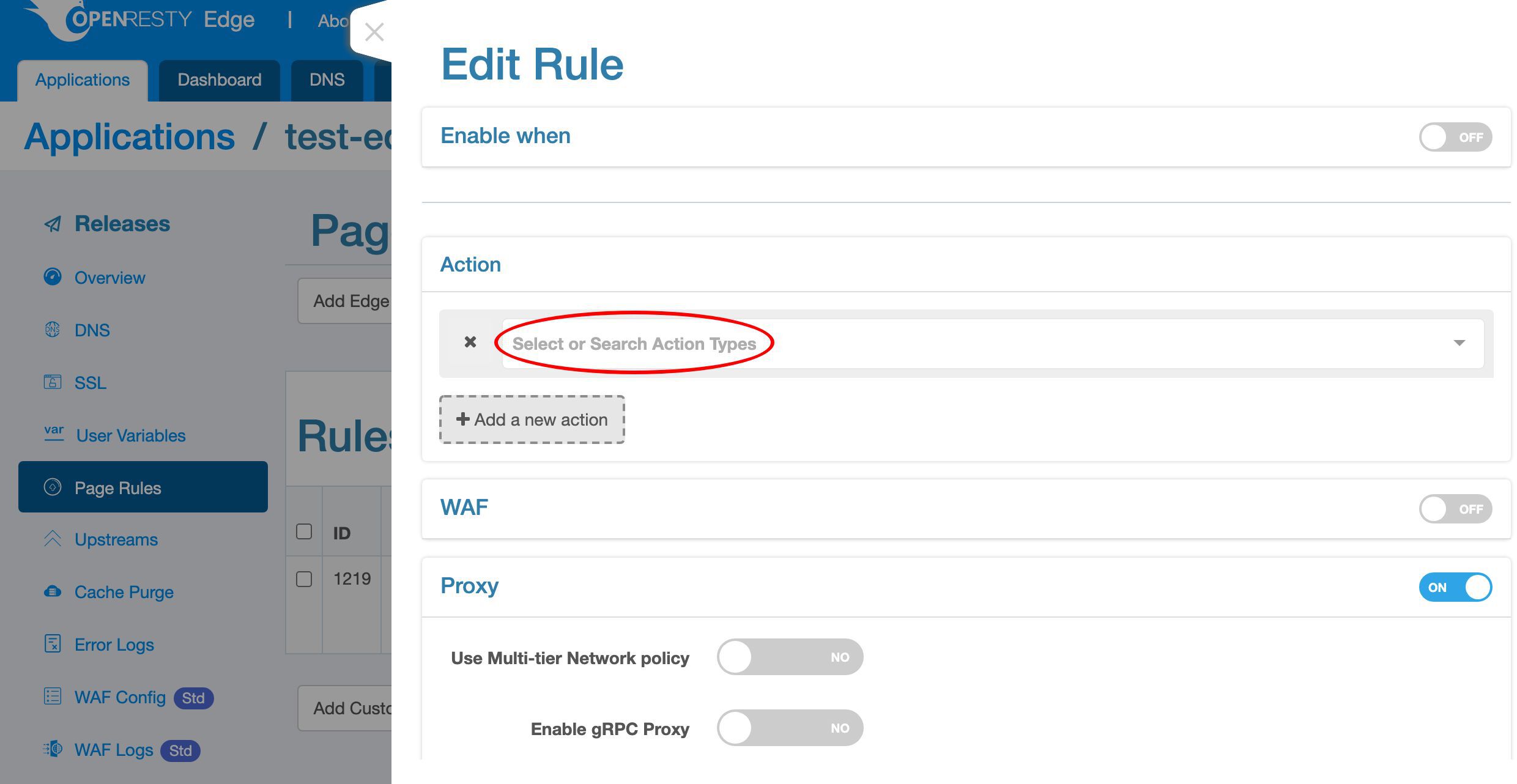

Add a new action.

You can search for the action you want to add here.

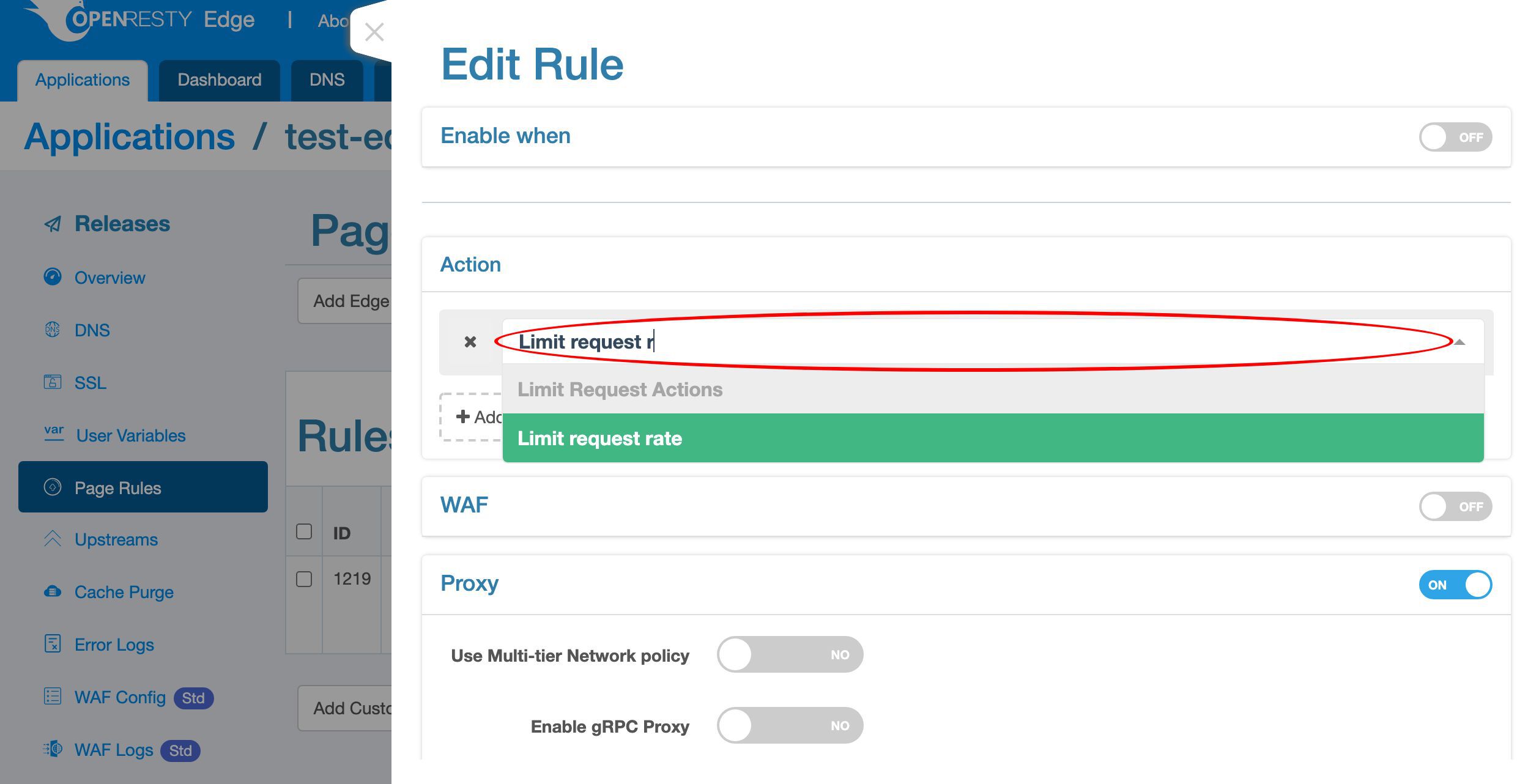

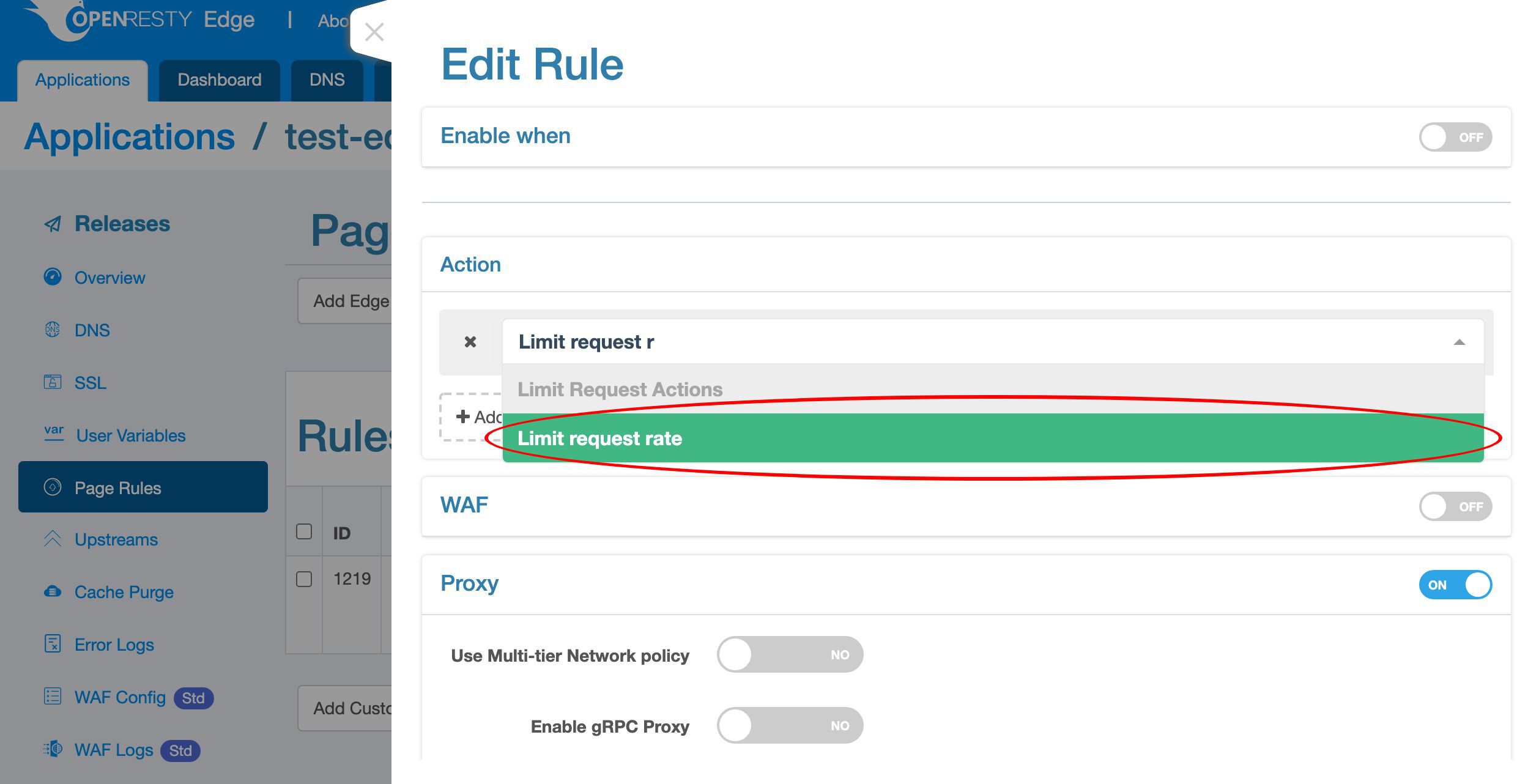

Search for “Limit request rate”.

Select it.

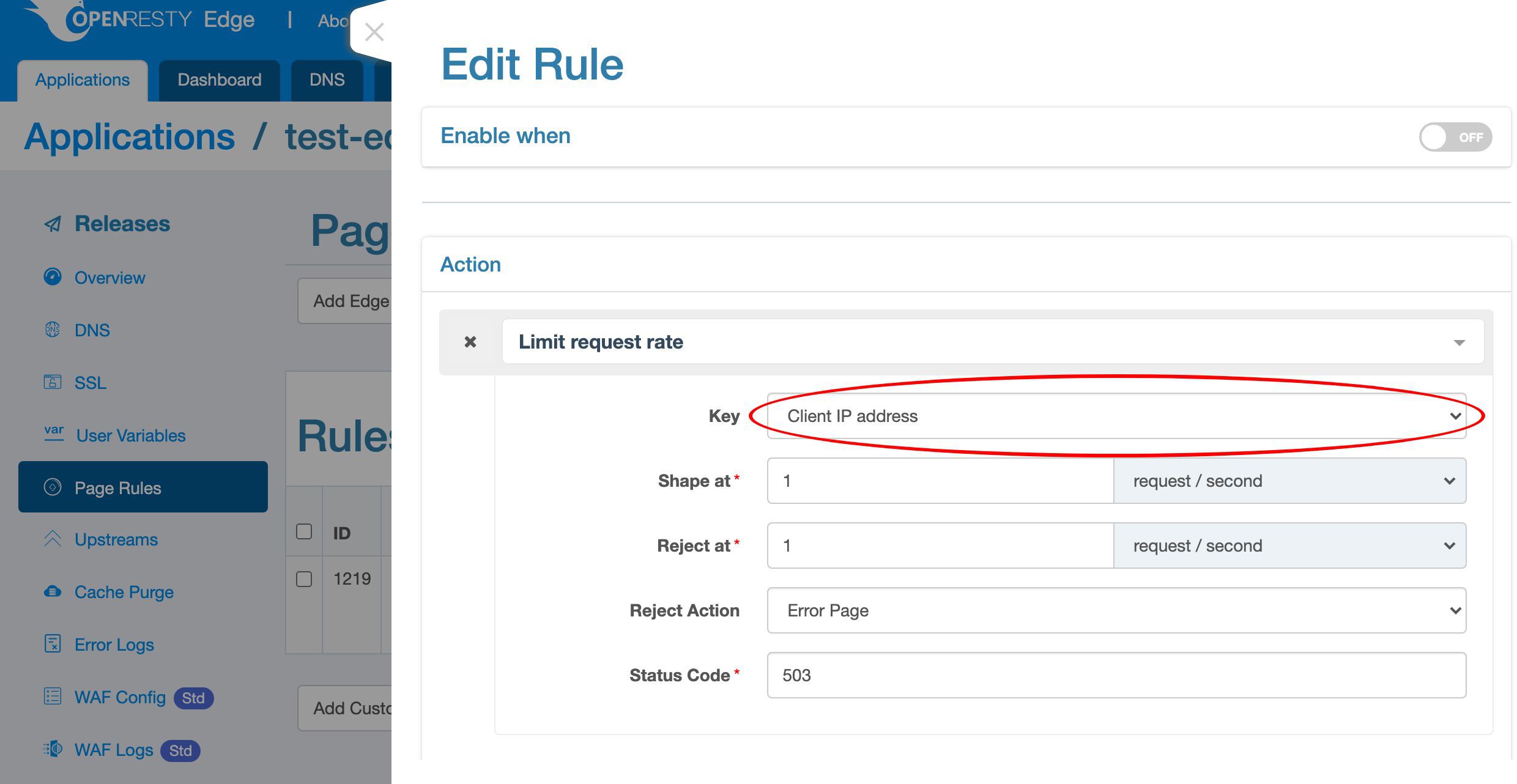

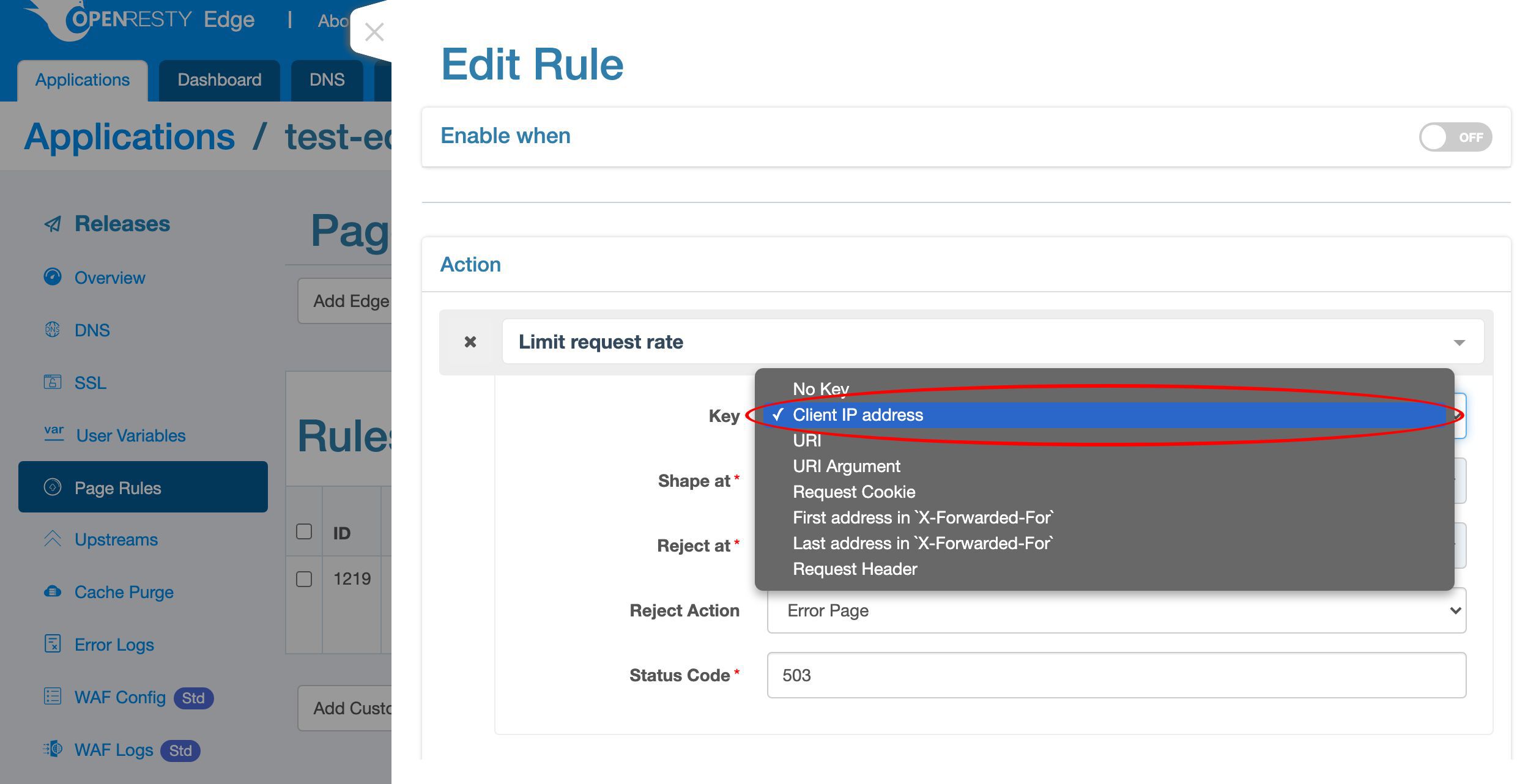

First, we need to specify the keys for rate limiting.

There are many possible key types, such as the client IP address, the URI, a URI argument, a cookie, and many more.

Choose the default key type, the client IP address. The limit then applies separately to each unique client IP address.
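To illustrate what a "key" means here, the following minimal sketch derives a rate-limiting key from a request for a few of the key types mentioned above. Requests that map to the same key share one rate-limit budget. The `Request` class, the `limit_key` function, and the key type names (`client_ip`, `uri`, `uri_arg`, `cookie`) are hypothetical and only for illustration; they are not OpenResty Edge identifiers, and treating "URI" as the path without the query string is an assumption.

```python
from dataclasses import dataclass, field
from http.cookies import SimpleCookie
from urllib.parse import parse_qs, urlsplit


@dataclass
class Request:
    """Hypothetical, minimal view of an incoming HTTP request."""
    client_ip: str
    uri: str  # request target, e.g. "/search?q=edge"
    headers: dict = field(default_factory=dict)


def limit_key(req, key_type, name=None):
    """Return the value that selects the rate-limit bucket for this request.

    The key type names are illustrative only (hypothetical).
    Returns None when the named URI argument or cookie is absent.
    """
    if key_type == "client_ip":
        return req.client_ip
    if key_type == "uri":
        return urlsplit(req.uri).path  # path only, query string dropped (assumption)
    if key_type == "uri_arg":
        values = parse_qs(urlsplit(req.uri).query).get(name)
        return values[0] if values else None
    if key_type == "cookie":
        jar = SimpleCookie(req.headers.get("Cookie", ""))
        return jar[name].value if name in jar else None
    raise ValueError(f"unsupported key type: {key_type}")


req = Request("203.0.113.7", "/search?q=edge&page=2",
              {"Cookie": "session=abc123; theme=dark"})
```

With the client IP key, two requests from `203.0.113.7` count against the same limit, while a request from another address gets its own independent limit.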

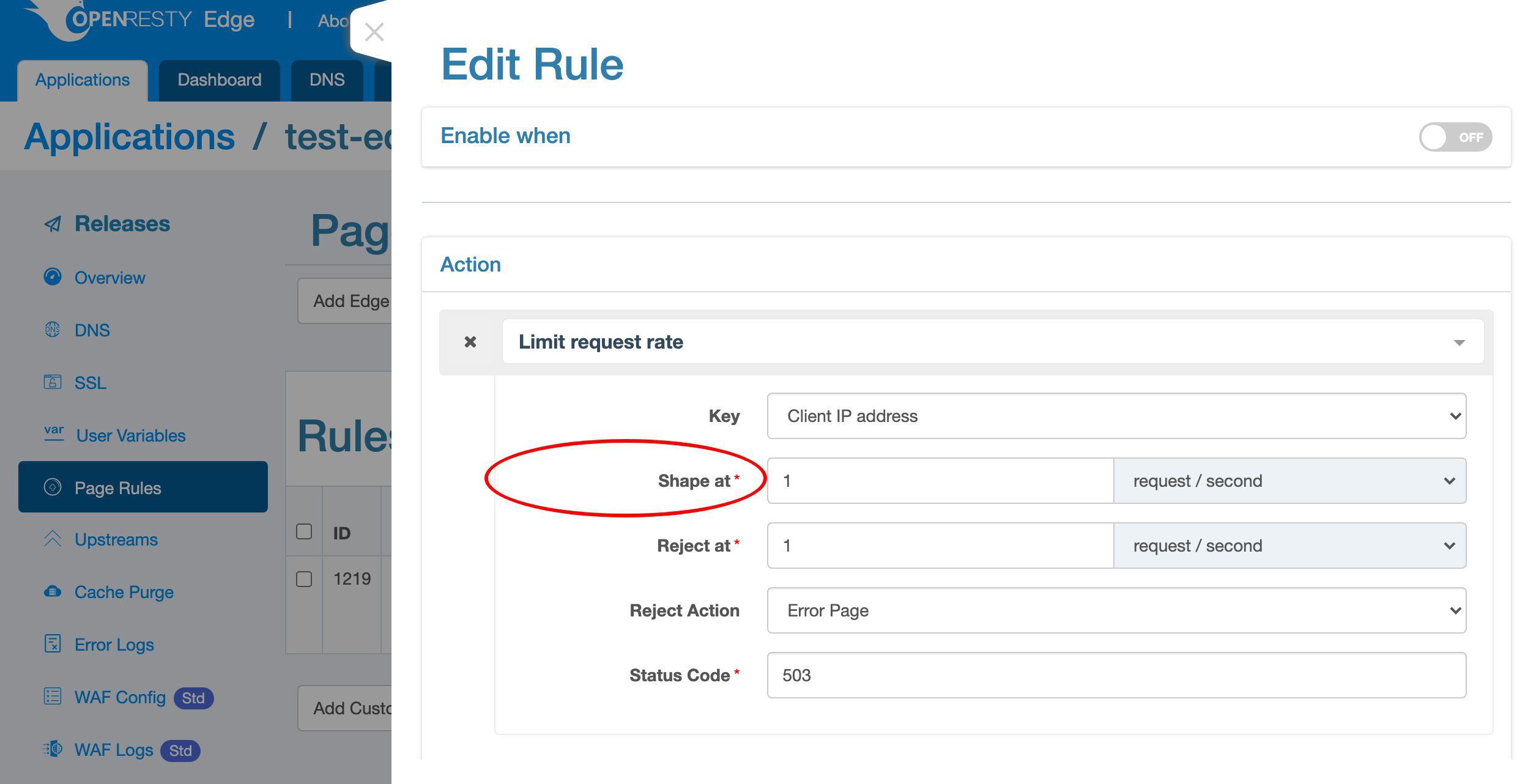

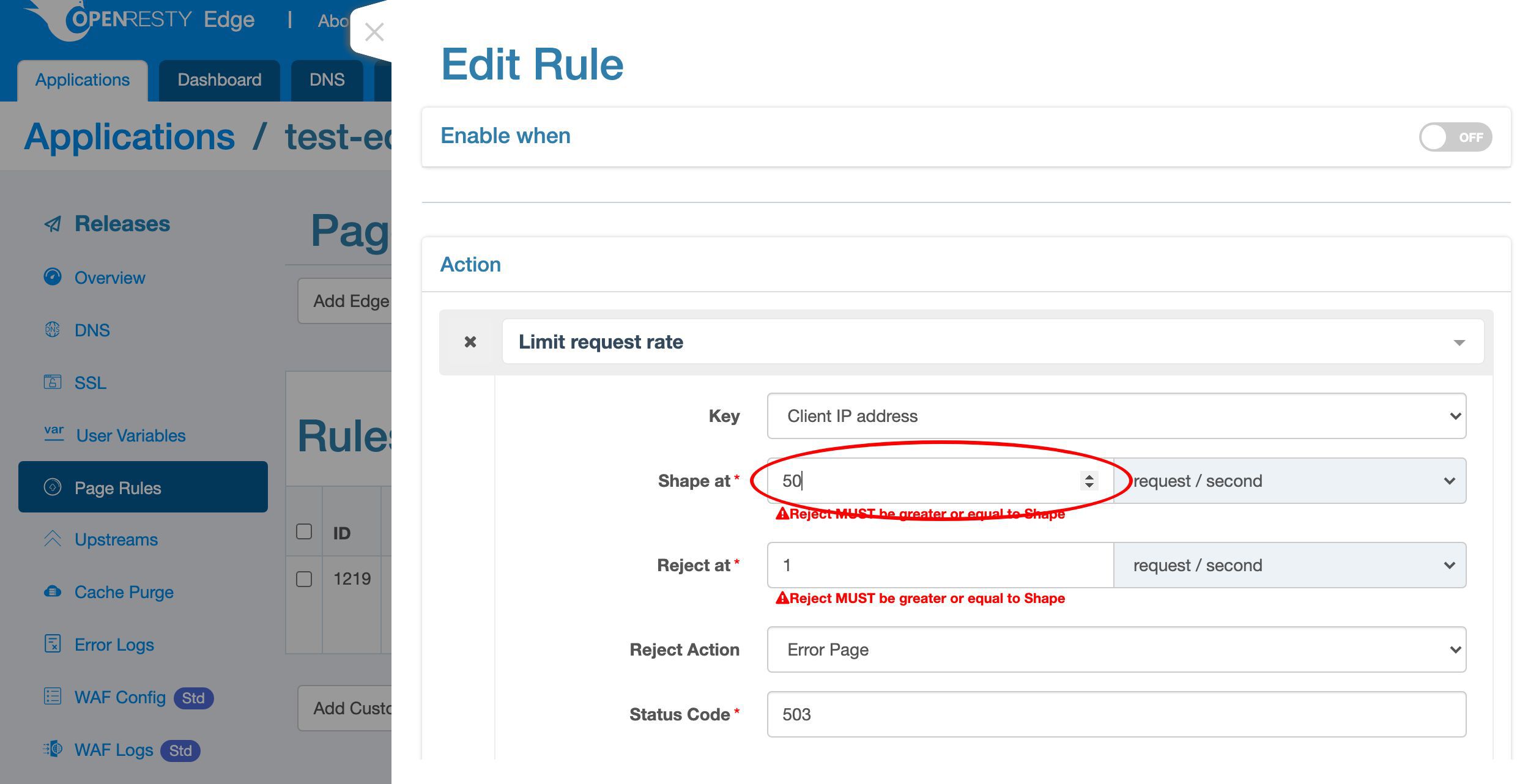

The “Shape at” rate is a soft limit. When a client tries to send requests faster than this rate, the gateway server delays the excess requests so that they match this rate. The faster the client sends requests, the longer the delay the gateway adds to them.

Here we specify a rate of 50 requests per second.

Because we chose the client IP address as the key type, this limit applies to each client IP address individually.
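The shaping behavior described above can be sketched in an idealized form for a single key: each request is held just long enough that released requests are spaced at least `1 / shape_rate` seconds apart. This is a simplified model for illustration only, not OpenResty Edge's implementation, and `shaped_delays` is a hypothetical name.

```python
def shaped_delays(arrivals, shape_rate):
    """Return the delay added to each request so that requests are released
    no faster than `shape_rate` per second.

    arrivals: ascending arrival times (seconds) of requests sharing one key.
    """
    interval = 1.0 / shape_rate   # minimum gap between two released requests
    next_free = float("-inf")     # earliest time the next request may be released
    delays = []
    for t in arrivals:
        release = max(t, next_free)  # wait only if the previous release was too recent
        delays.append(release - t)
        next_free = release + interval
    return delays


# One client at 20 r/s (below the 50 r/s "Shape at" rate), one at 200 r/s.
slow = shaped_delays([i * 0.05 for i in range(10)], shape_rate=50)
fast = shaped_delays([i * 0.005 for i in range(10)], shape_rate=50)
```

The 20 r/s client is never delayed, while each request from the 200 r/s client waits 15 ms longer than the one before it, reaching 135 ms for the tenth request: the faster the client, the longer the delay.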

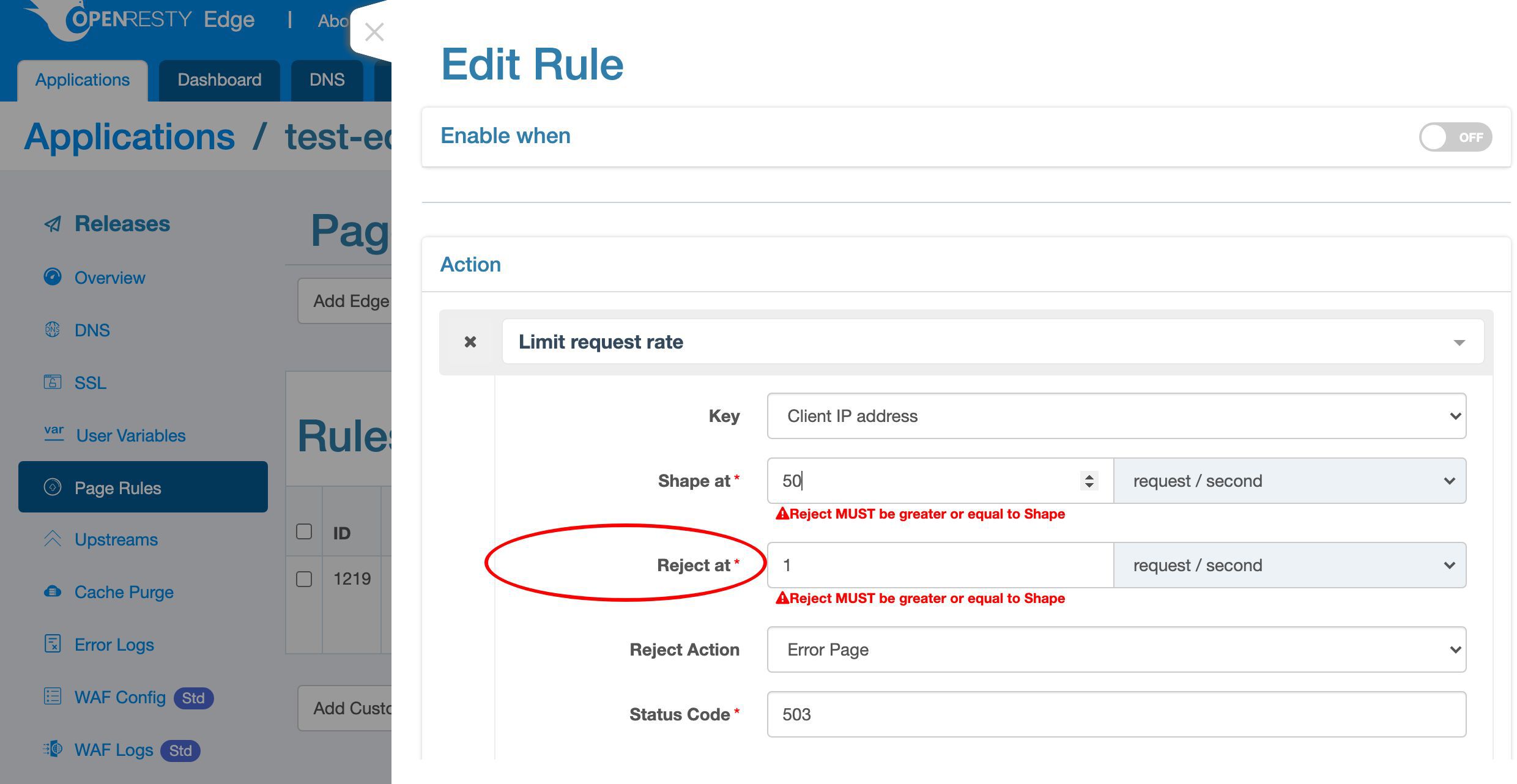

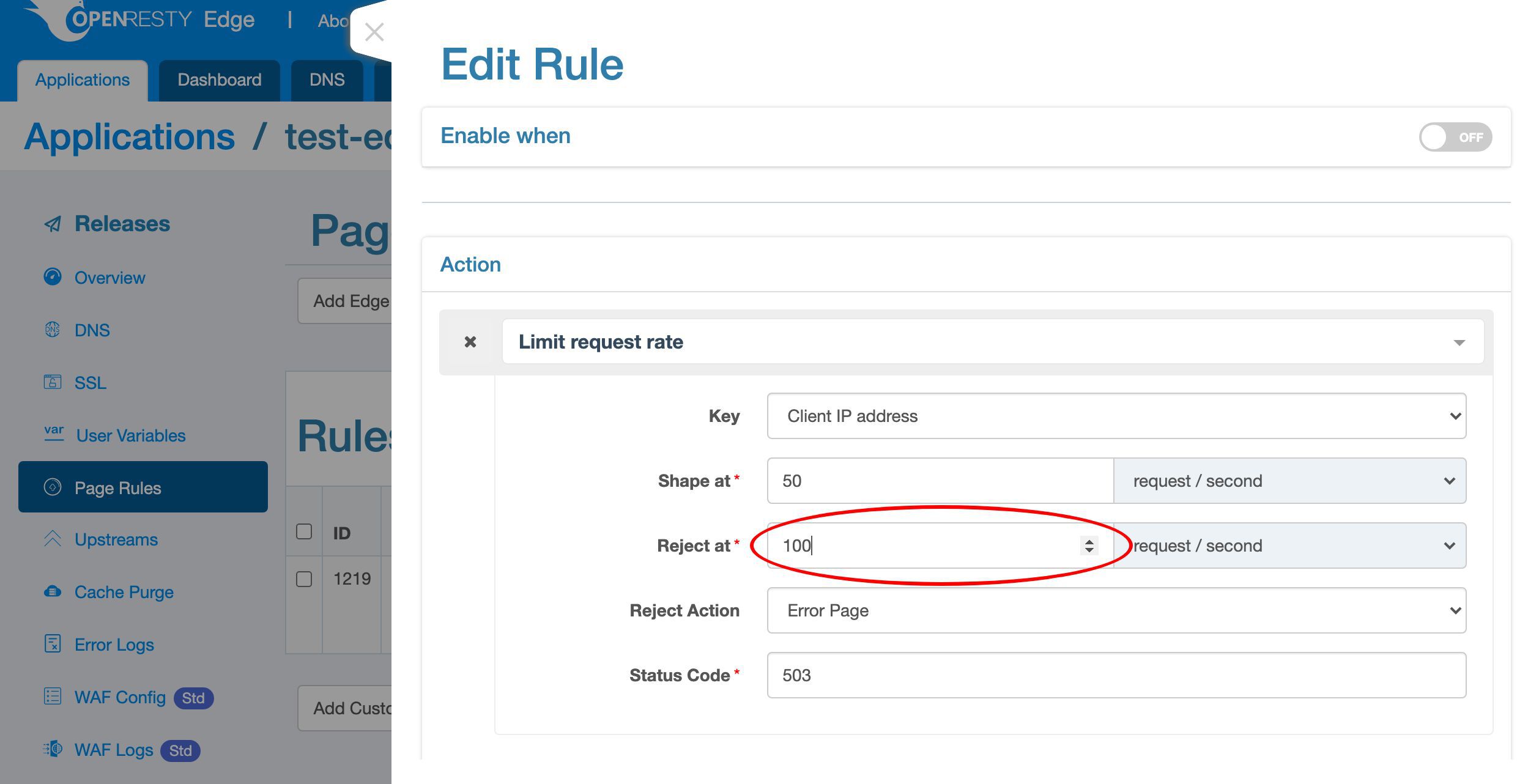

The “Reject at” rate is a hard limit. When a client sends requests so fast that it exceeds this hard limit, we can take more aggressive actions, such as rejecting the excess requests immediately instead of delaying them.

Specify a rate of 100 requests per second here.
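Taken together, the two rates describe a limiter that delays moderately fast clients and rejects very fast ones. One common way to implement such a pair is a leaky bucket. The sketch below is modeled on the open-source `resty.limit.req` module from lua-resty-limit-traffic, where requests above `rate` but within `rate + burst` are delayed and the rest are rejected. Mapping “Shape at” to `rate` and “Reject at” to `rate + burst` is our assumption, as are the class and method names; this is not OpenResty Edge's actual code.

```python
import time


class RequestRateLimiter:
    """Per-key leaky bucket: delay above shape_rate, reject above reject_rate.

    Modeled on resty.limit.req (lua-resty-limit-traffic) with
    burst = reject_rate - shape_rate. Hypothetical, for illustration only.
    """

    def __init__(self, shape_rate, reject_rate):
        if reject_rate < shape_rate:
            raise ValueError("reject_rate must not be below shape_rate")
        self.rate = float(shape_rate)
        self.burst = float(reject_rate - shape_rate)
        self.state = {}  # key -> (excess requests, time of last accepted request)

    def incoming(self, key, now=None):
        """Return the delay in seconds for this request, or None to reject it."""
        if now is None:
            now = time.monotonic()
        rec = self.state.get(key)
        if rec is None:
            excess = 0.0  # first request seen for this key
        else:
            prev_excess, last = rec
            # The bucket drains at `rate` requests per second; this request adds one.
            excess = max(prev_excess - self.rate * (now - last) + 1.0, 0.0)
            if excess > self.burst:
                return None  # over the hard limit: reject, leave state unchanged
        self.state[key] = (excess, now)
        return excess / self.rate  # shaping delay; 0.0 when under shape_rate


limiter = RequestRateLimiter(shape_rate=50, reject_rate=100)
# 53 requests from one client IP arriving at the same instant (t = 0 s):
burst = [limiter.incoming("203.0.113.7", now=0.0) for _ in range(53)]
```

Here the first request passes untouched, requests 2 to 51 are each delayed 20 ms more than the previous one (up to 1 s), and requests 52 and 53 are rejected. A request from another IP address starts with an empty bucket, and one second later the first IP is back to a 20 ms delay as its bucket drains.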

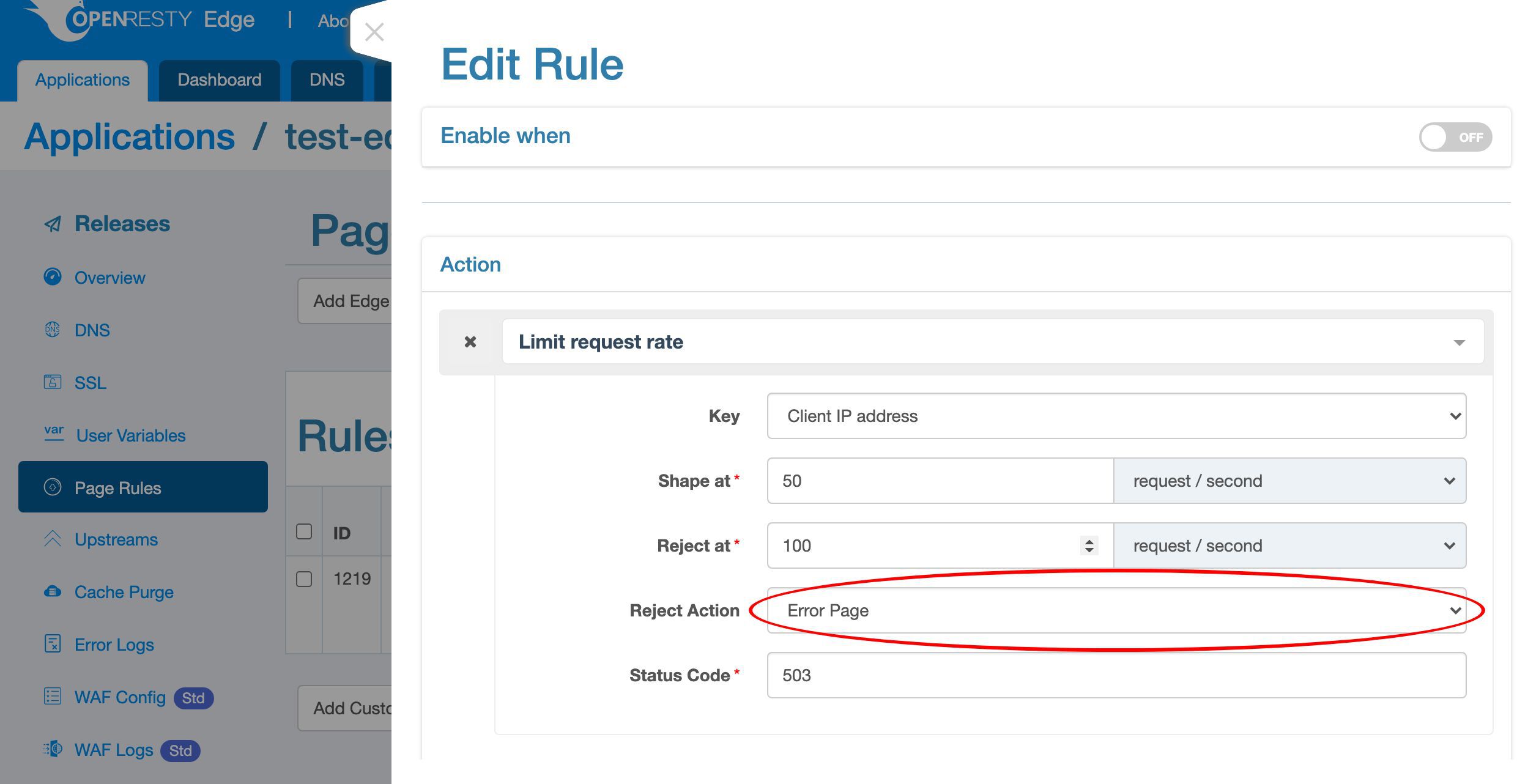

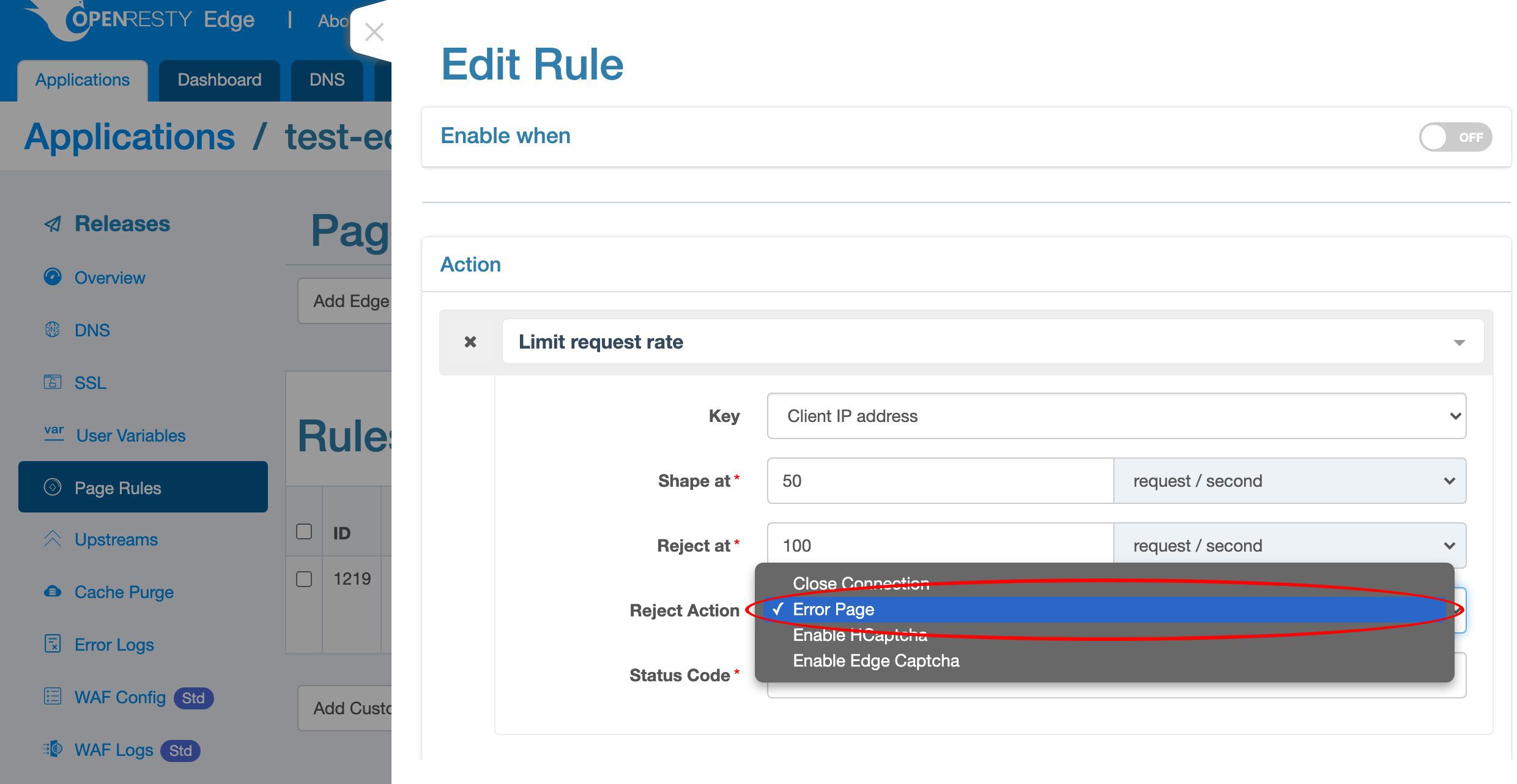

We can choose from different interception actions, such as closing the current connection right away, returning an error page, or returning a CAPTCHA page to block bots.

Select the default “Error Page” action, which returns an HTTP error page.

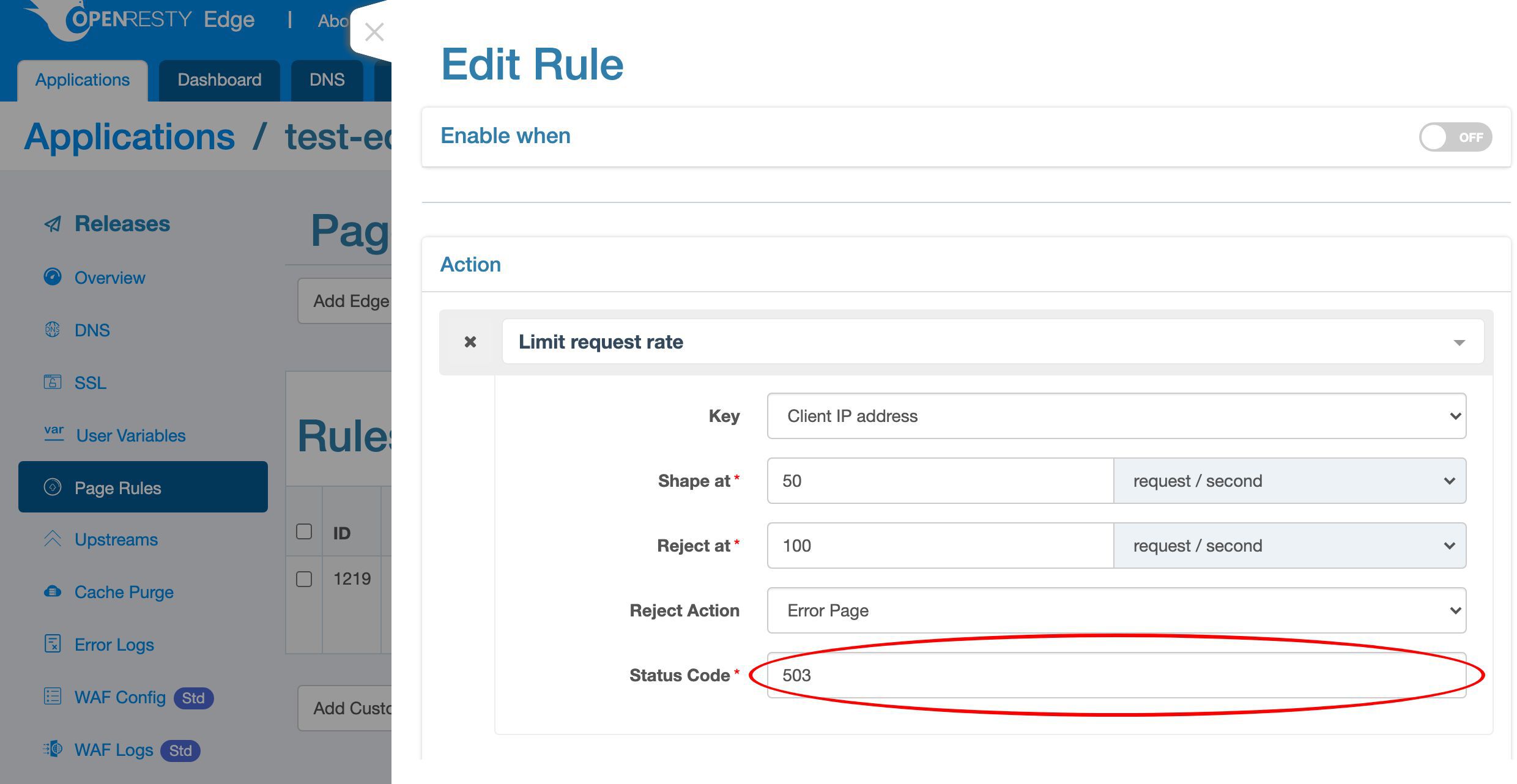

We keep the default HTTP status code, 503 (Service Unavailable).

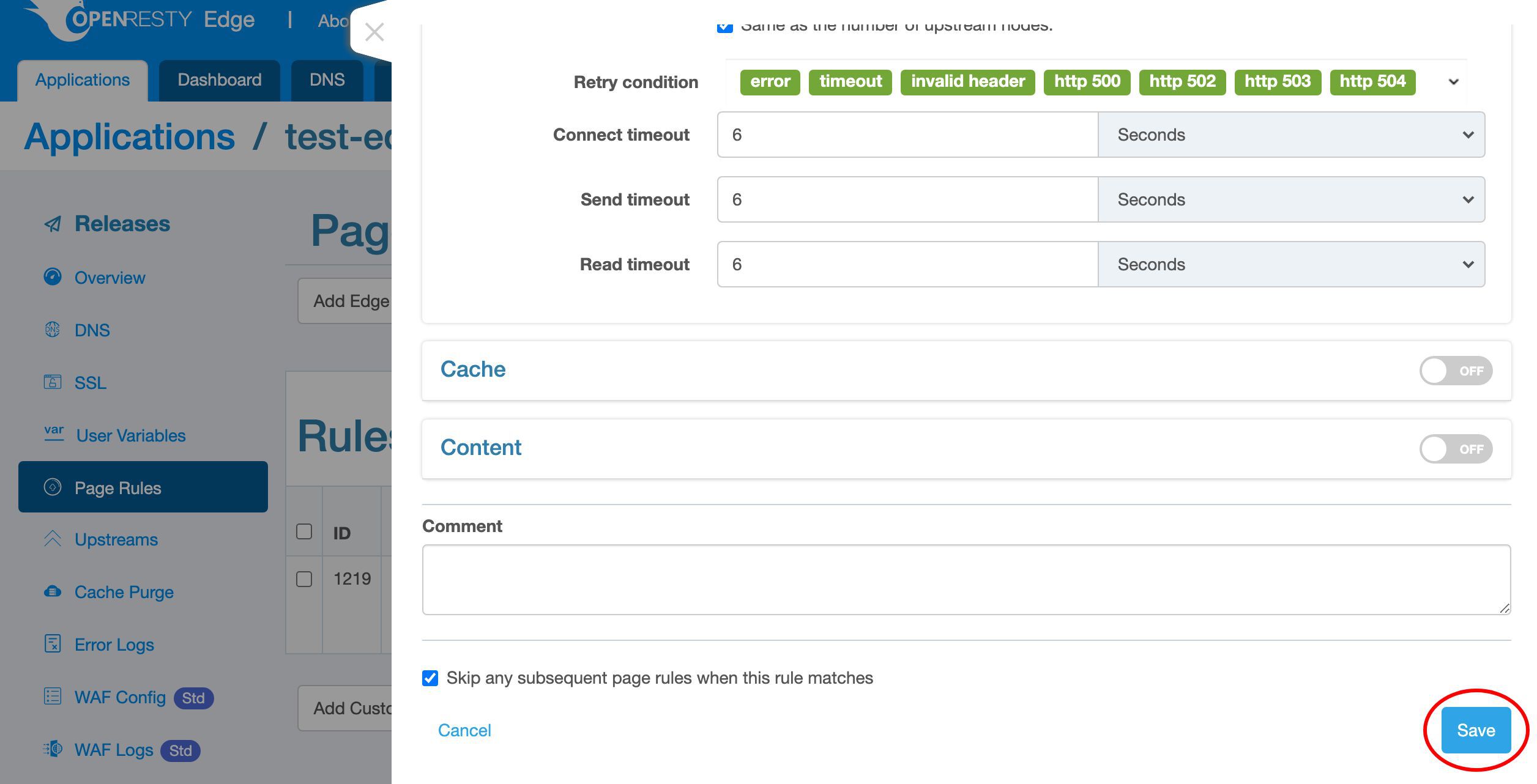

Save our changes to this page rule.

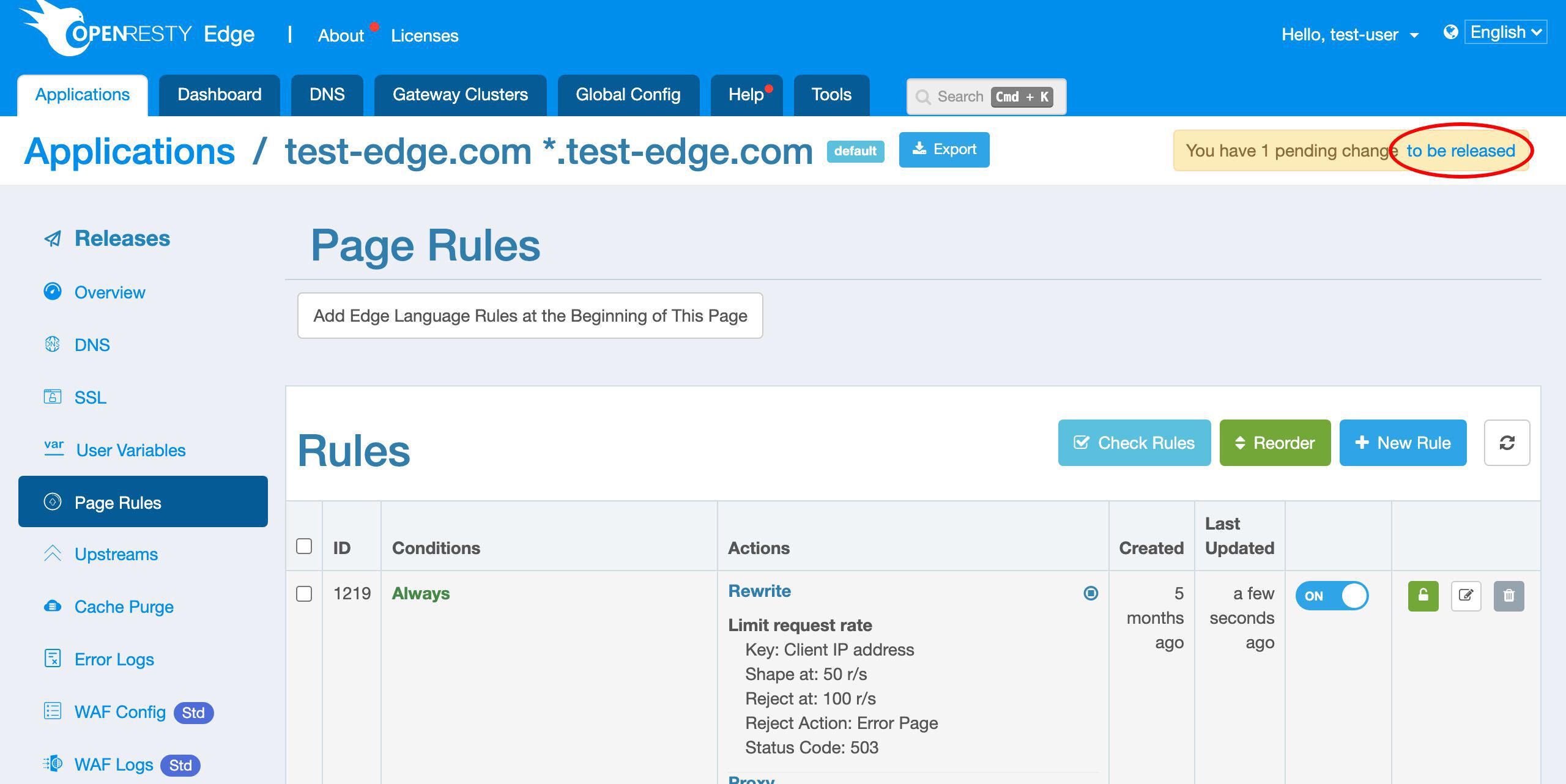

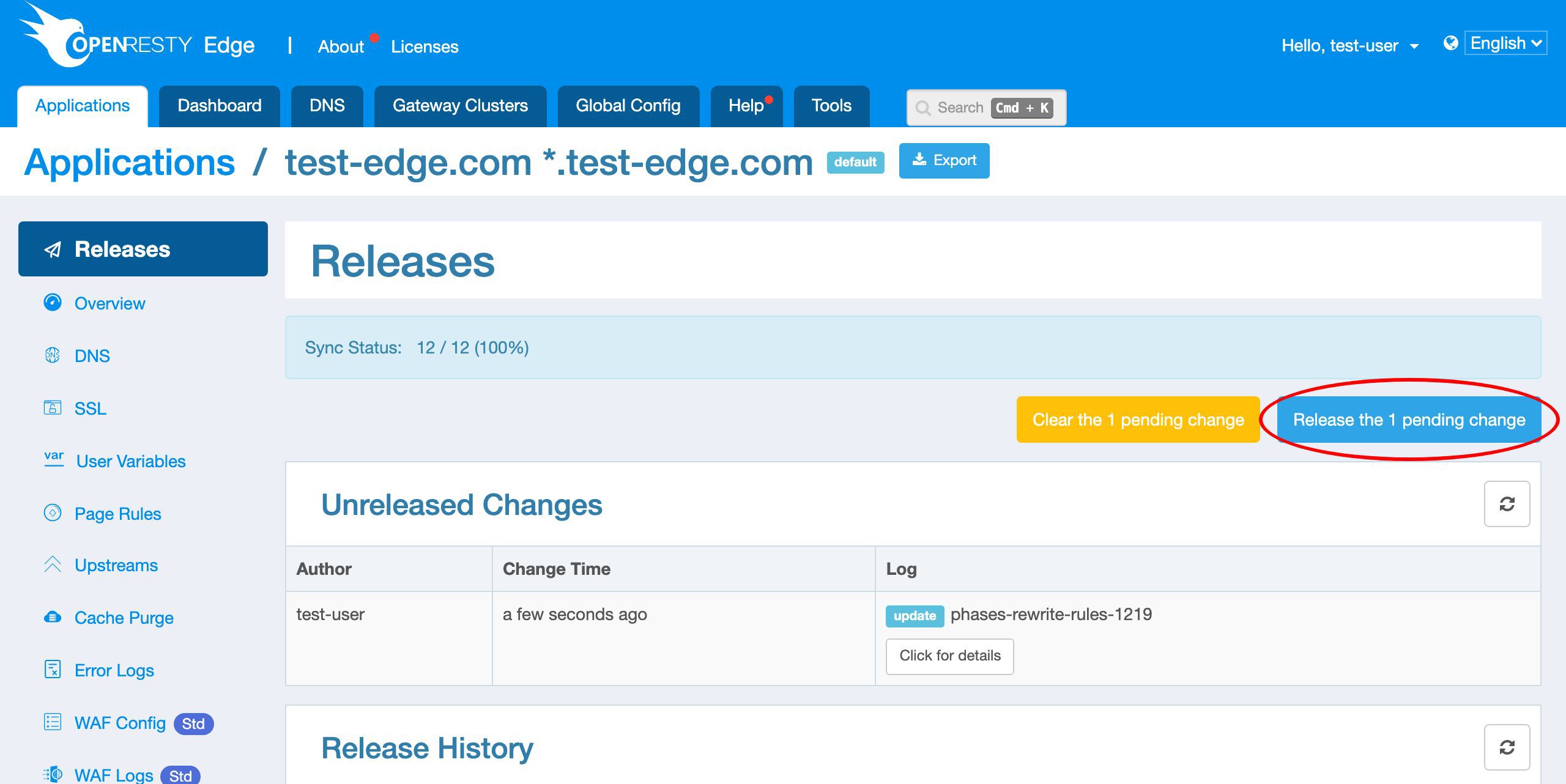

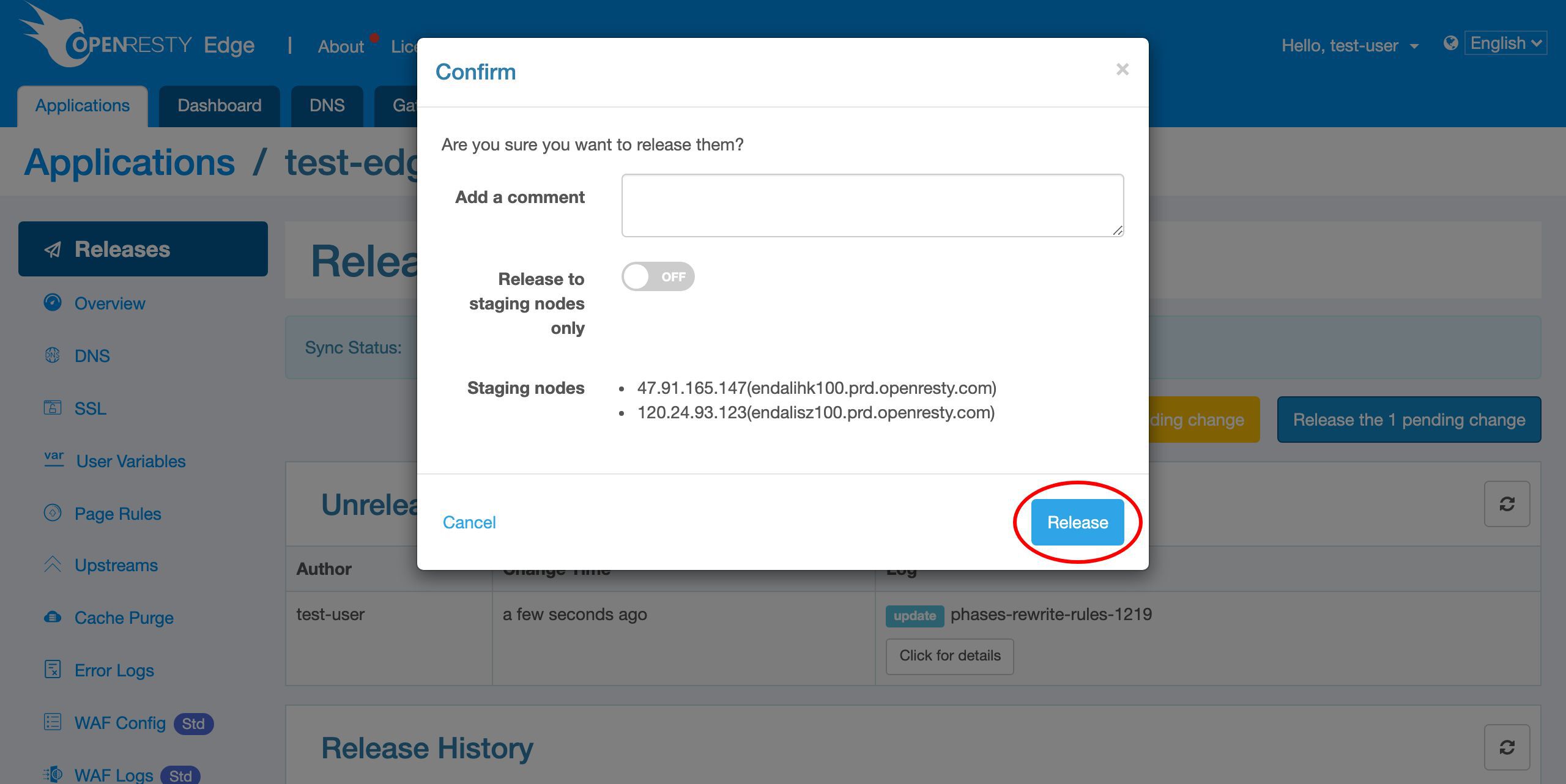

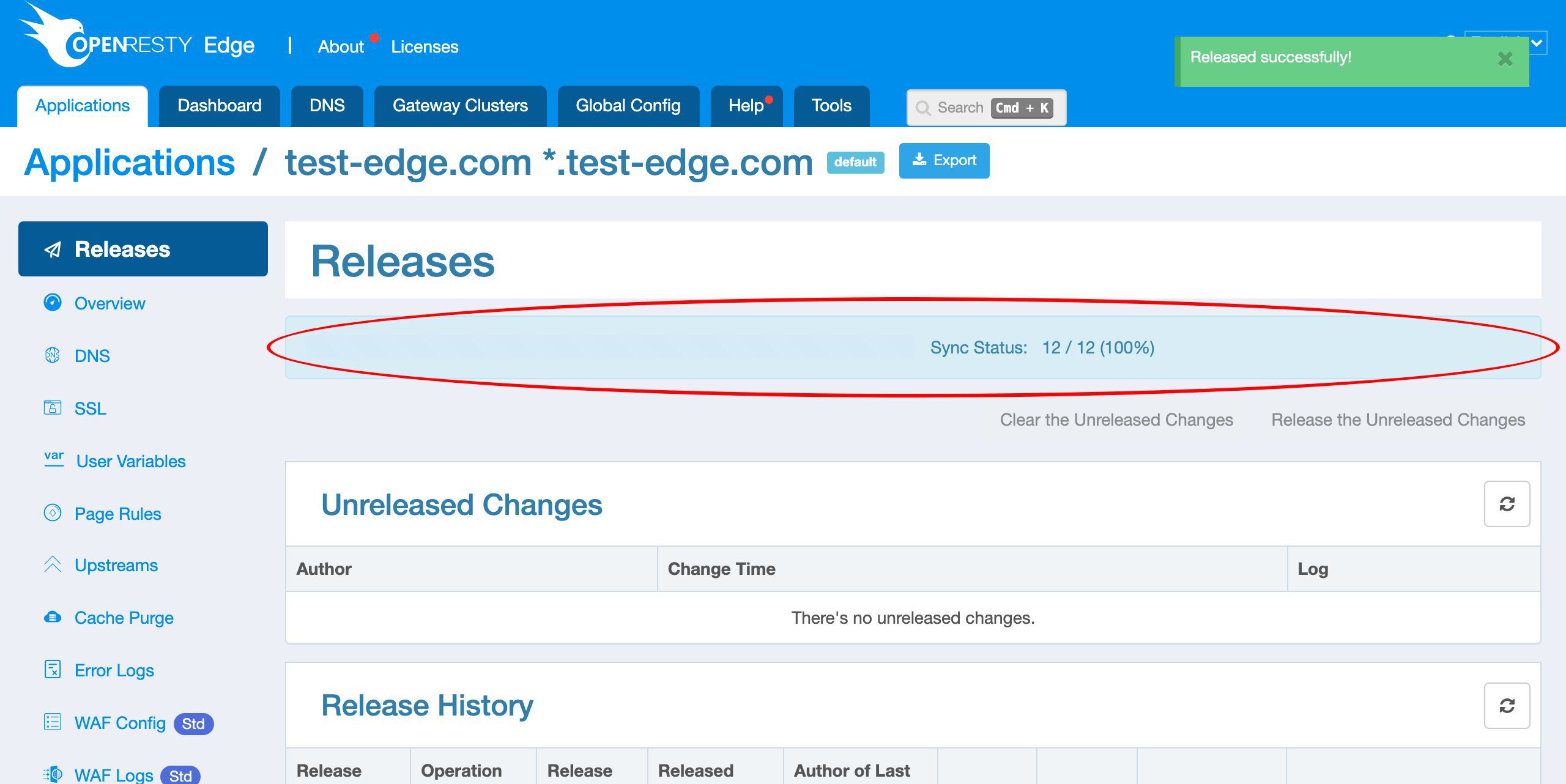

We need to make a new release to push out our new changes, as always.

Click on this button.

Ship it!

Our new release is now synchronized to all our gateway servers: the new page rule has been pushed to every gateway cluster and server.

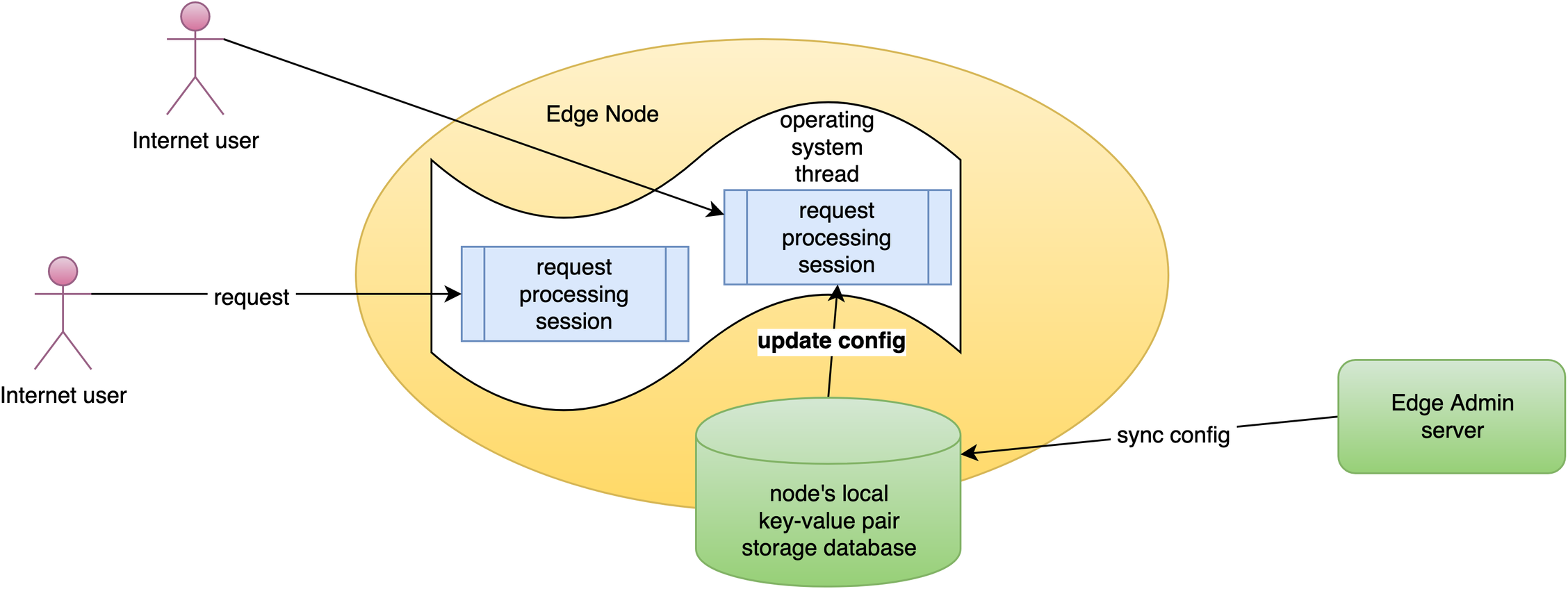

Our configuration changes do NOT require a server reload, restart, or binary upgrade, which makes this approach very efficient and scalable.

Test

Next, we’ll verify the effect of the new rate limits.

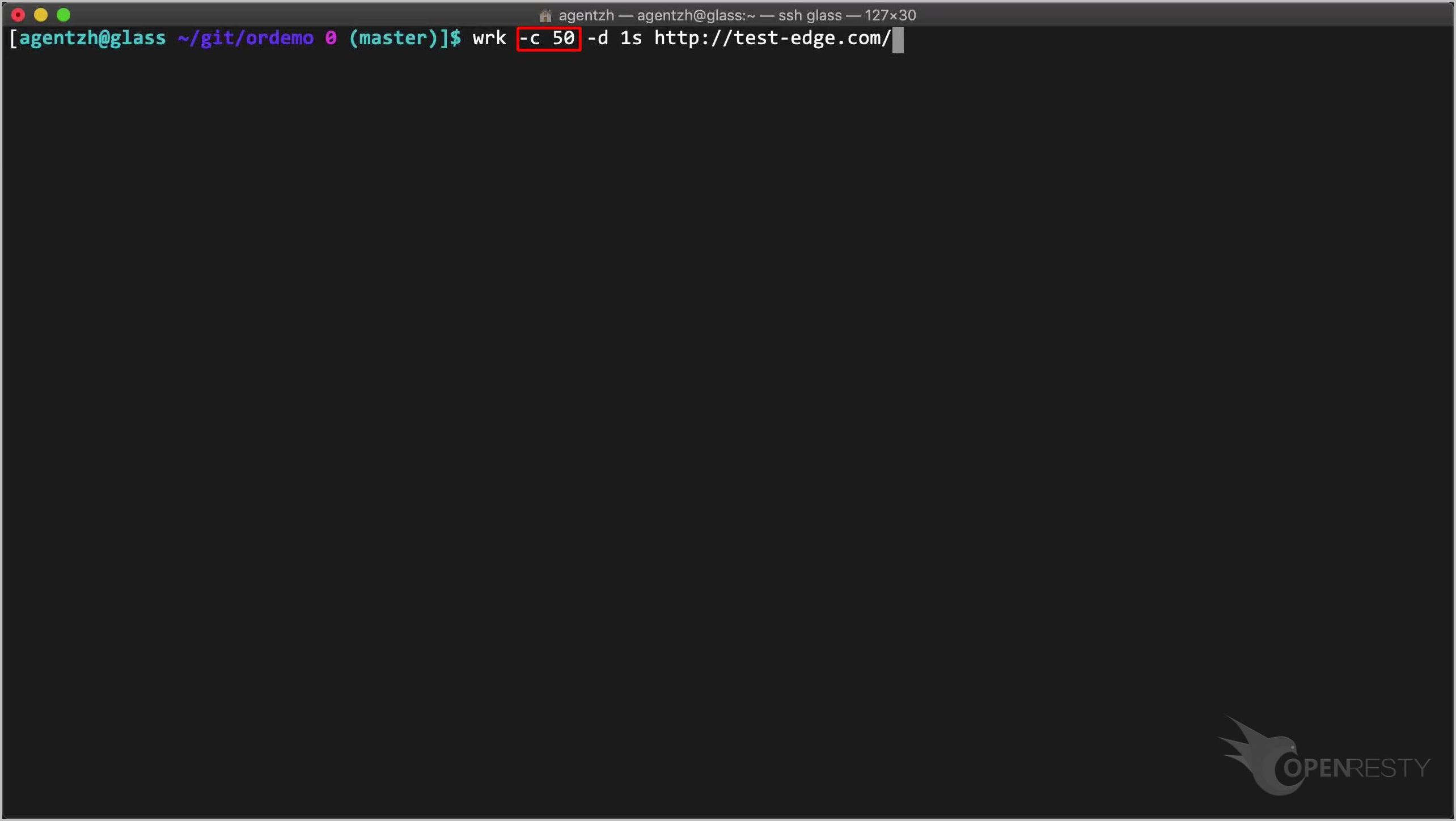

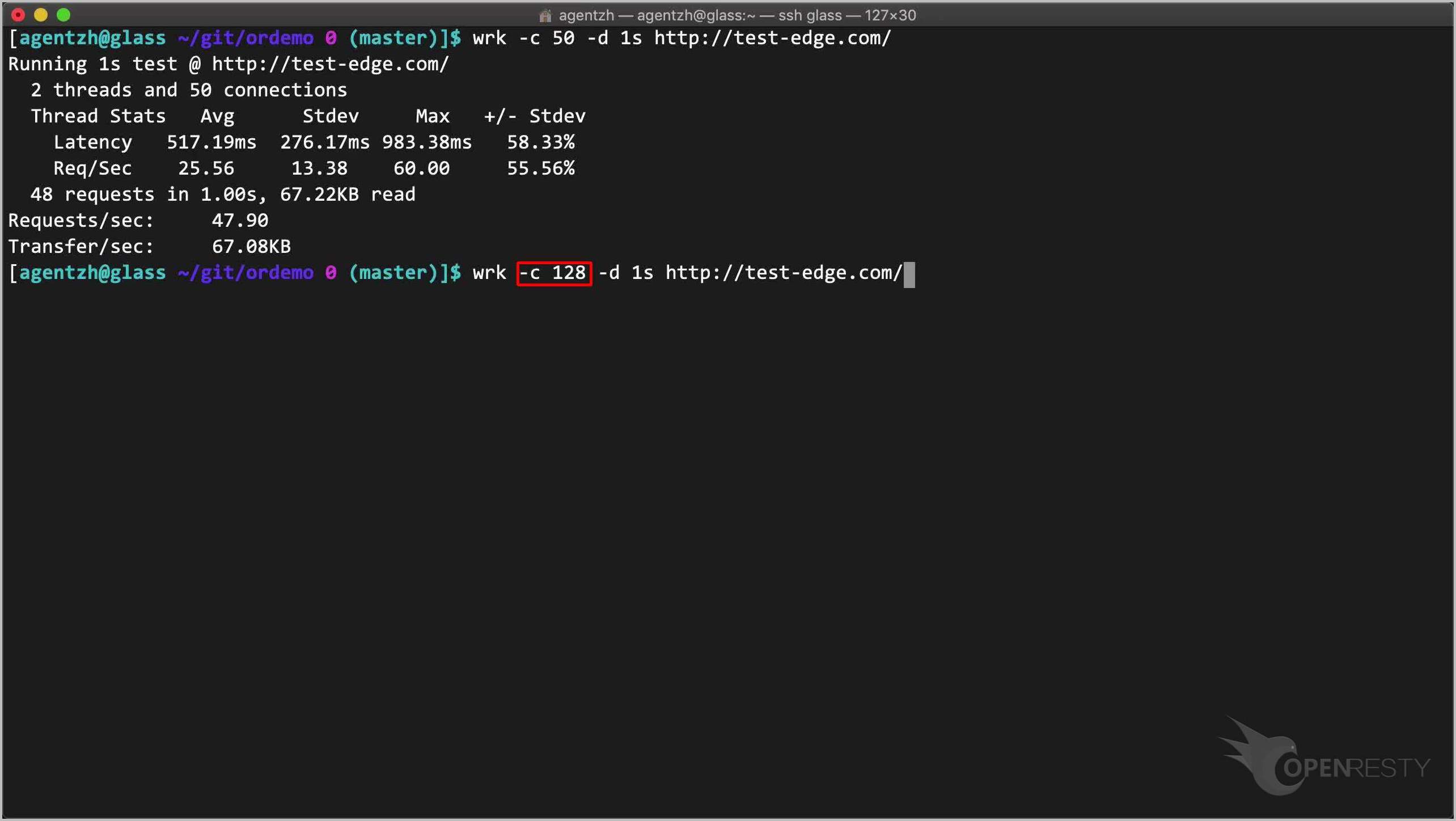

In the terminal, we can send many requests very quickly using wrk, an open-source HTTP benchmarking tool.

```shell
wrk -c 50 -d 1s http://test-edge.com/
```

We start with a concurrency level of 50. Note the -c option, which sets how many connections wrk keeps open; -d 1s runs the test for one second.

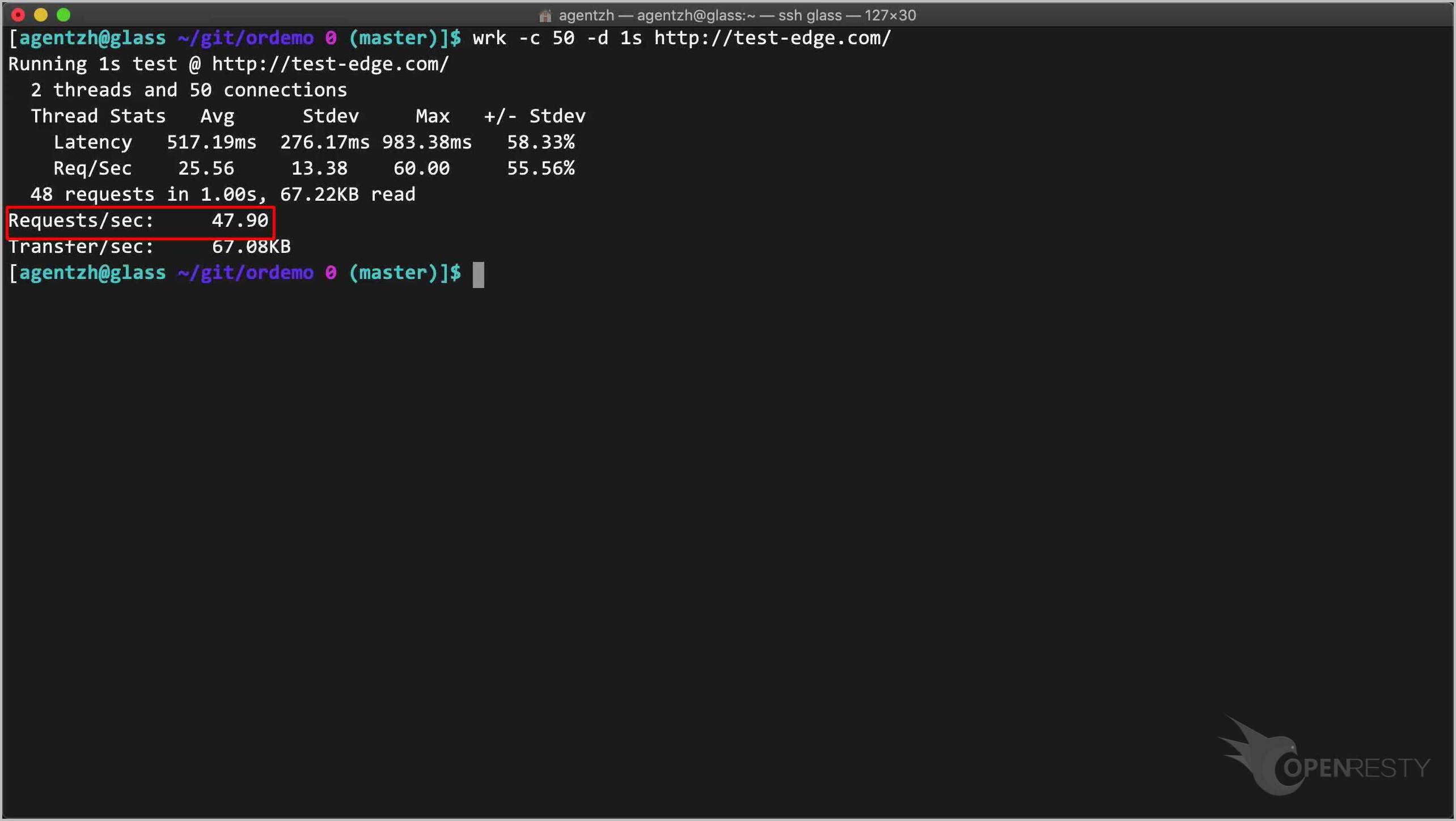

Run the command. The actual request rate is about 50 requests per second, which is our “Shape at” rate.

Now we increase the concurrency level to make wrk send requests much faster.

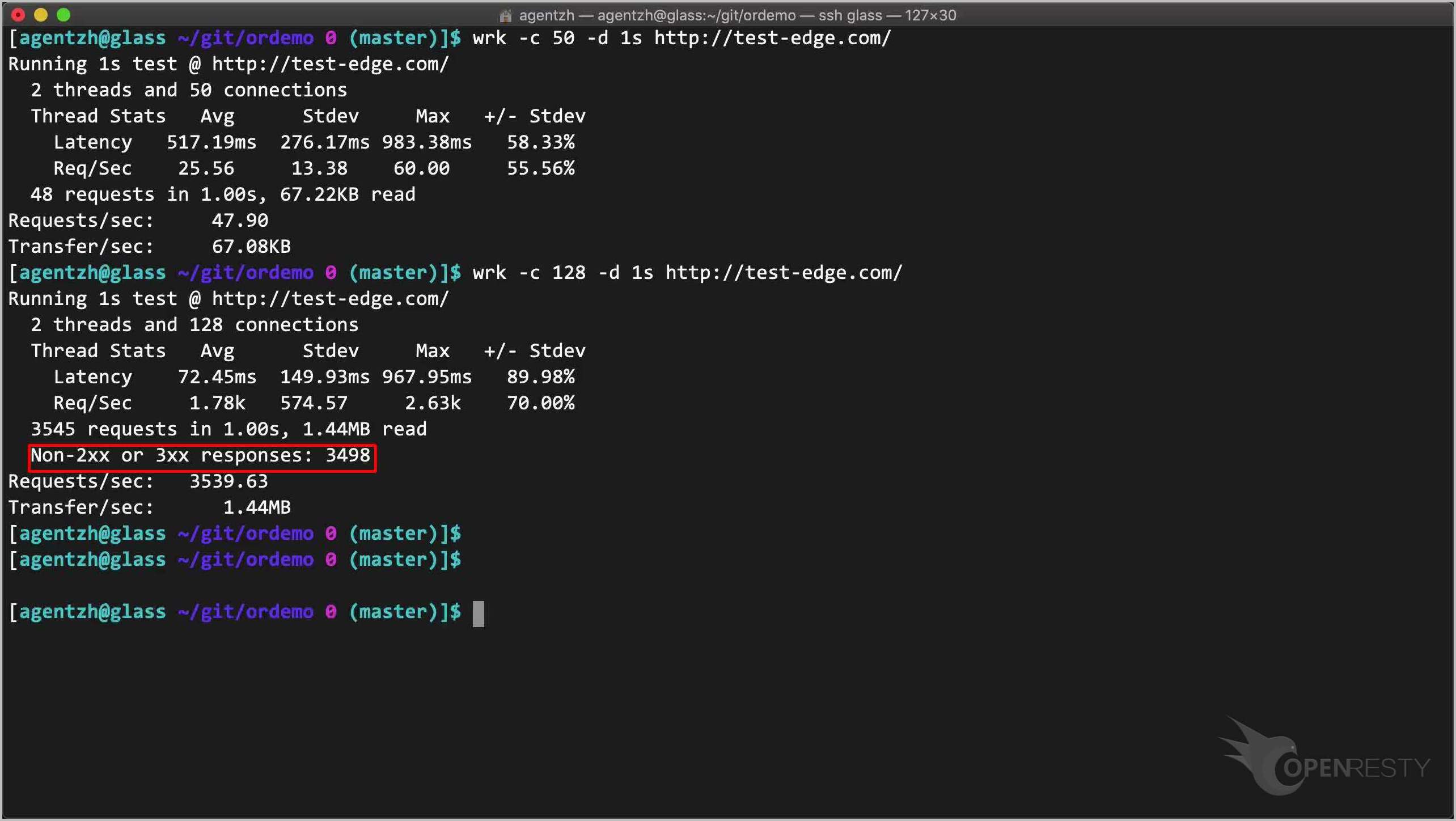

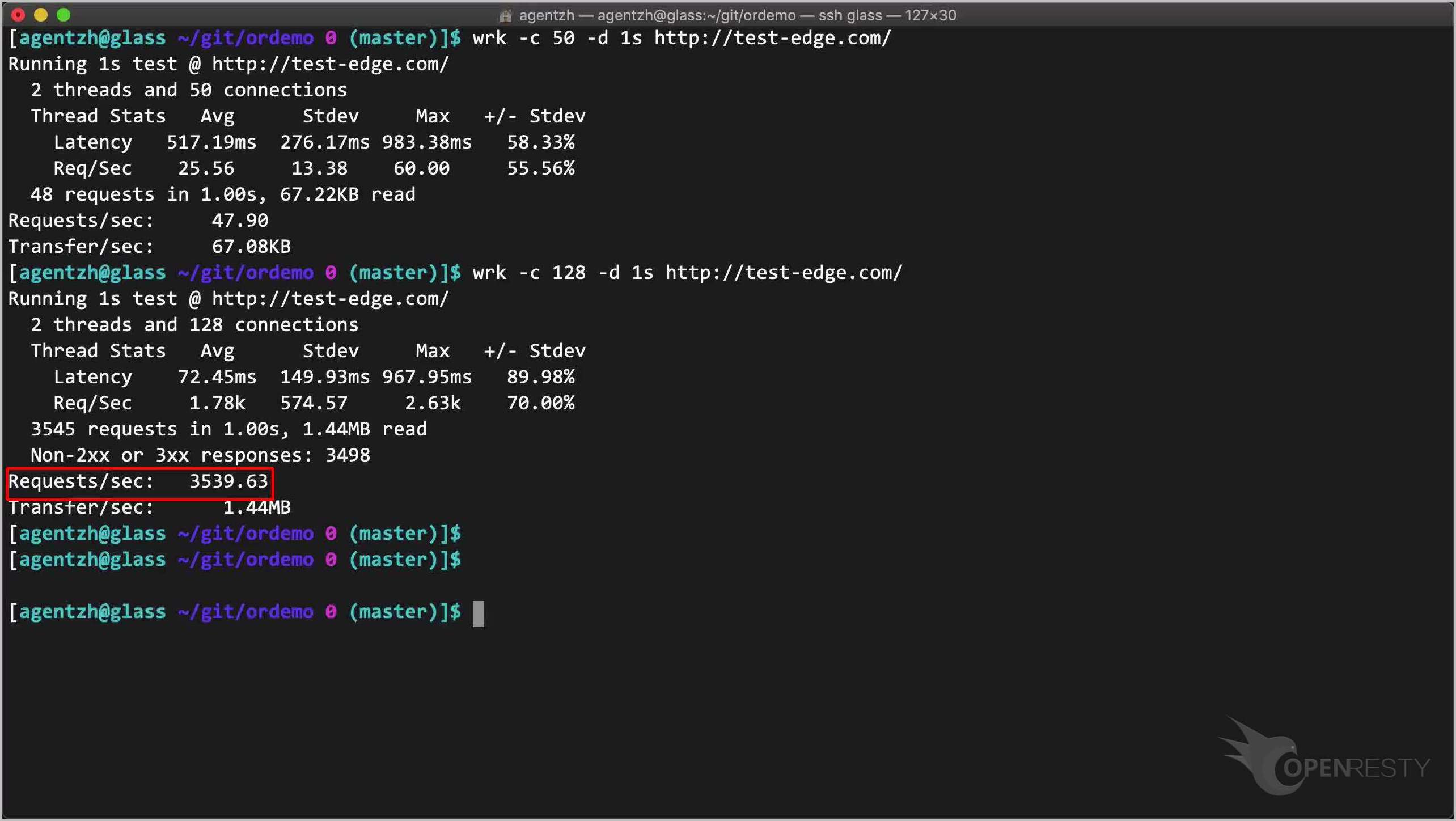

```shell
wrk -c 128 -d 1s http://test-edge.com/
```

Note the concurrency level of 128.

Run it! This time, many requests are rejected with error responses.

The measured request rate is high this time only because the server rejects the excess requests very quickly.
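Why does the measured rate go up when most requests are rejected? By default, wrk keeps one outstanding request per connection, so a delayed request ties up its connection while a rejected one frees it almost instantly. The toy discrete-event simulation below illustrates this under loudly simplified assumptions: every request comes from one client IP, every response costs a fixed, hypothetical 1 ms, and the limiter follows a leaky-bucket model in the style of `resty.limit.req` (shape at 50, reject at 100). The names `LeakyBucket` and `simulate` are hypothetical, and the model is not a reproduction of the measurements above.

```python
import heapq


class LeakyBucket:
    """Single-key leaky bucket in the style of resty.limit.req (assumed model)."""

    def __init__(self, shape_rate, reject_rate):
        self.rate = float(shape_rate)
        self.burst = float(reject_rate - shape_rate)  # excess allowed before rejecting
        self.excess = None  # None until the first request arrives
        self.last = 0.0

    def incoming(self, now):
        """Return the added delay in seconds, or None if the request is rejected."""
        if self.excess is None:
            excess = 0.0
        else:
            excess = max(self.excess - self.rate * (now - self.last) + 1.0, 0.0)
            if excess > self.burst:
                return None  # rejected; the bucket state is not updated
        self.excess, self.last = excess, now
        return excess / self.rate


def simulate(connections, duration=1.0, service_time=0.001,
             shape_rate=50, reject_rate=100):
    """Count responses completed within `duration` for closed-loop clients.

    Each connection sends its next request as soon as the previous response
    arrives. All requests share one client IP, hence one bucket.
    service_time is a hypothetical fixed per-response cost.
    """
    bucket = LeakyBucket(shape_rate, reject_rate)
    events = [(0.0, conn) for conn in range(connections)]  # (send time, connection)
    heapq.heapify(events)
    ok = rejected = 0
    while events and events[0][0] < duration:
        sent_at, conn = heapq.heappop(events)
        delay = bucket.incoming(sent_at)
        # A rejected request gets its error page right away; an accepted one waits.
        done_at = sent_at + service_time + (0.0 if delay is None else delay)
        if done_at <= duration:
            if delay is None:
                rejected += 1
            else:
                ok += 1
        heapq.heappush(events, (done_at, conn))  # the connection sends again
    return ok, rejected


for conns in (50, 128):
    ok, rejected = simulate(conns)
    print(f"-c {conns}: {ok} accepted, {rejected} rejected in 1 s")
```

In this model, 50 connections complete 50 requests in the second with no rejections, because every connection spends almost all of its time waiting out its shaping delay. With 128 connections the number of accepted requests stays about the same, but the surplus connections each receive a fast error response roughly every millisecond, adding tens of thousands of rejections and inflating the measured rate.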

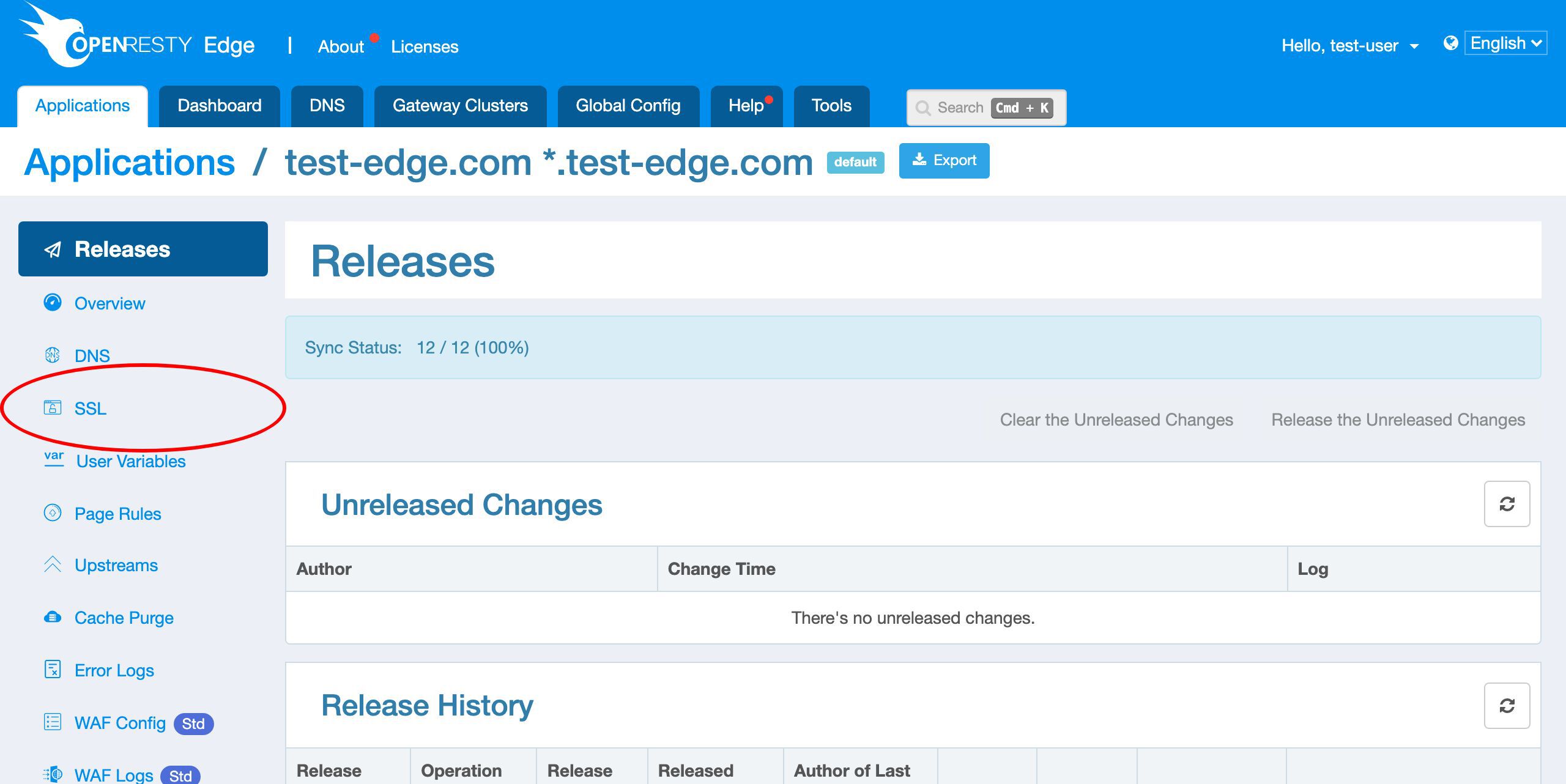

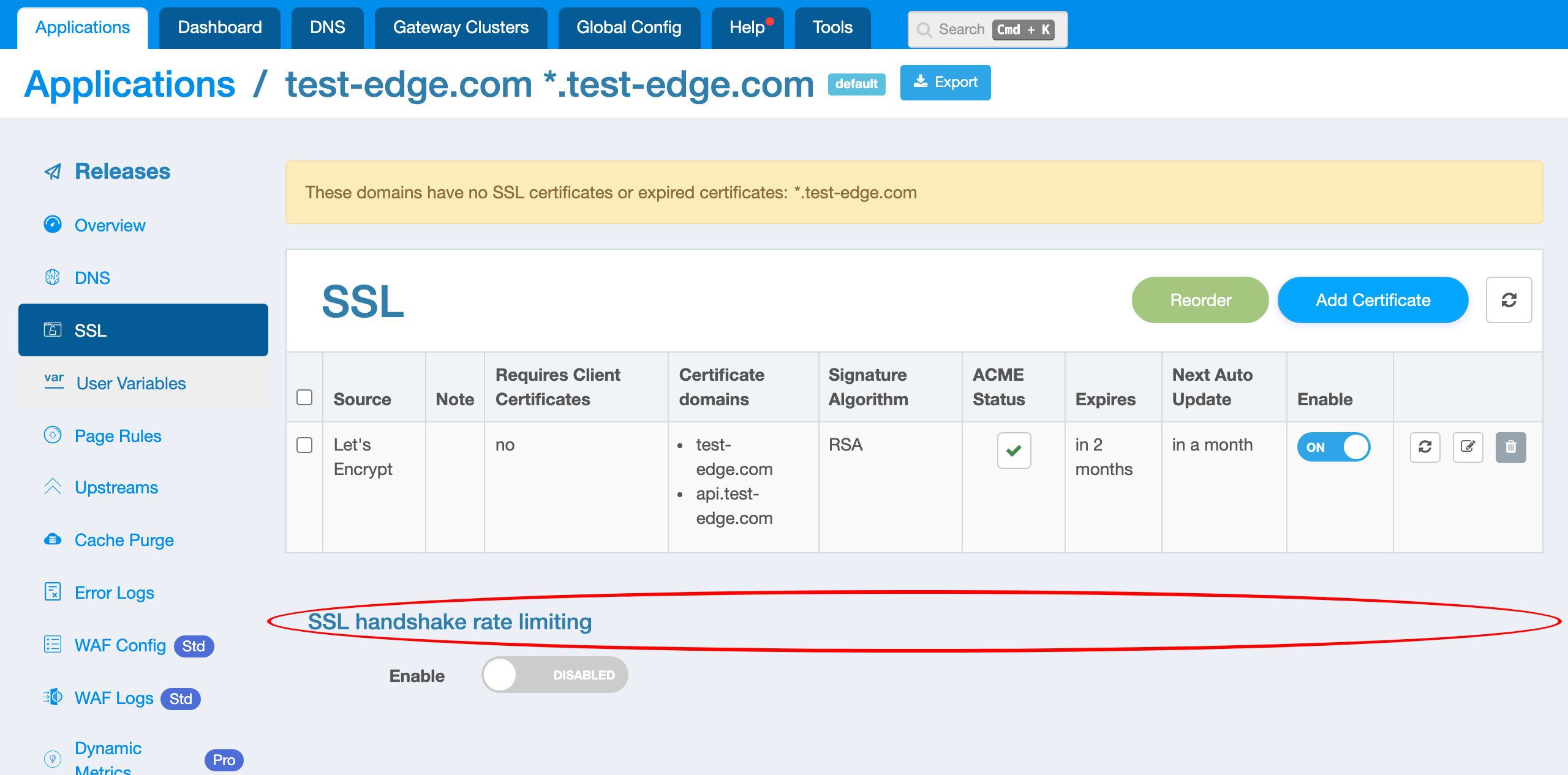

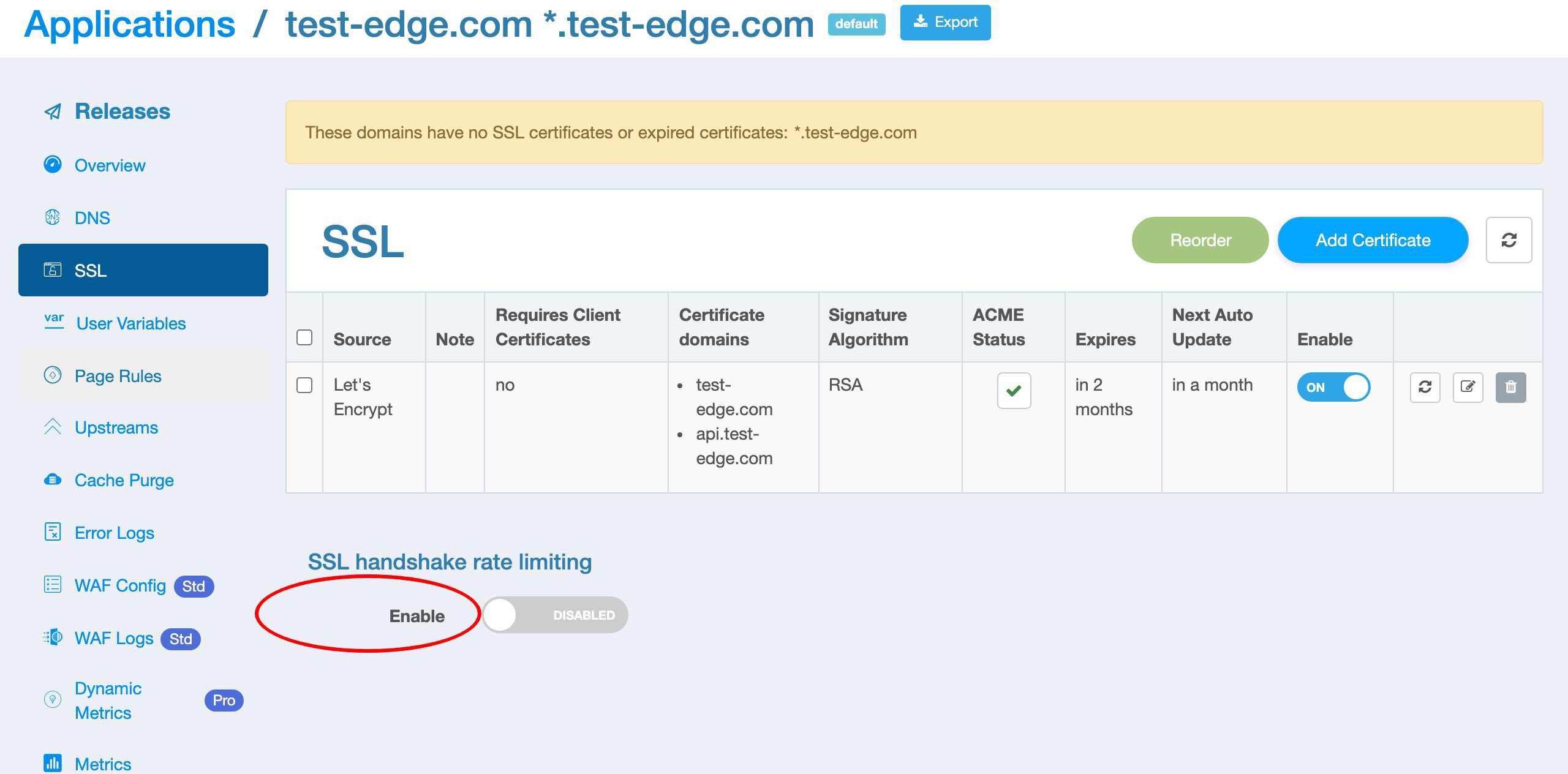

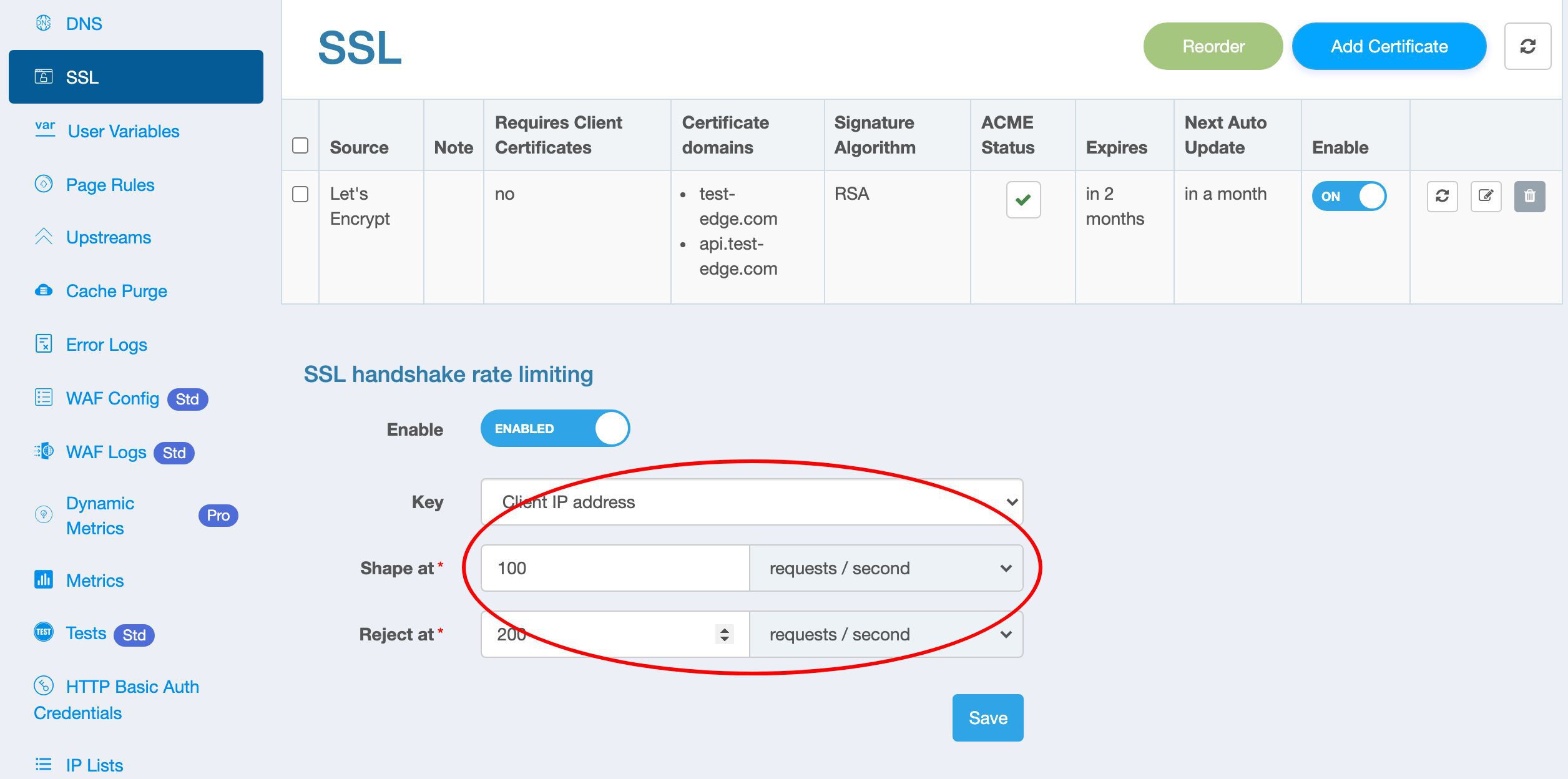

Limit the rate of SSL handshakes

In addition to limiting the rate of requests, OpenResty Edge can also limit the rate of SSL/TLS handshakes for HTTPS connections.

On this page, we can configure SSL handshake rate limiting.

Let’s turn on the switch to see the configuration parameters.

These parameters are the same as those of the request rate limiting feature.

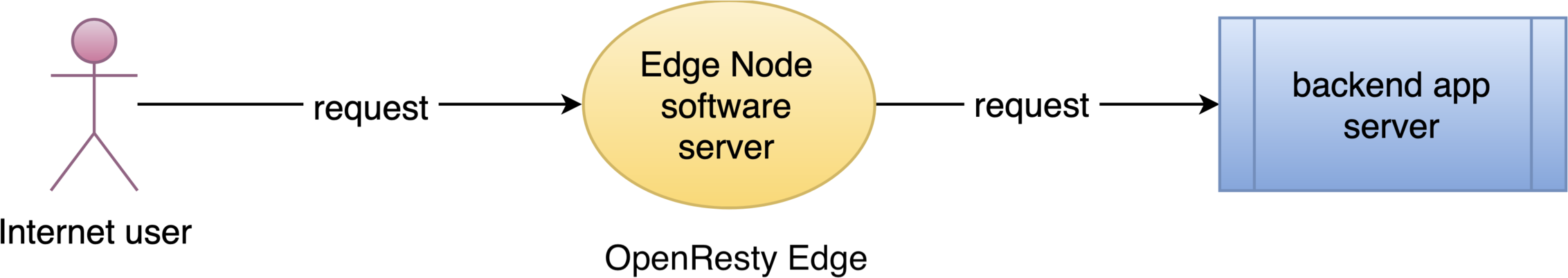

What is OpenResty Edge

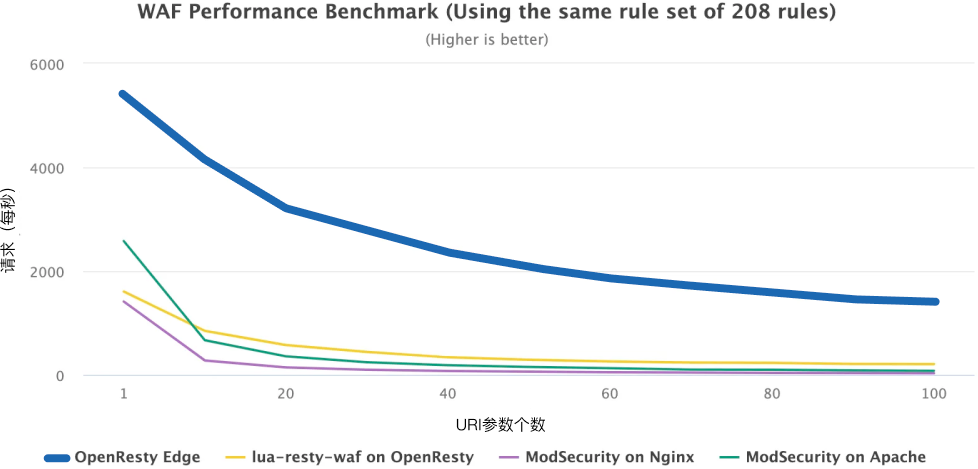

OpenResty Edge is our all-in-one gateway software for microservices and distributed traffic architectures. It combines traffic management, private CDN construction, API gateway, security, and more to help you easily build, manage, and protect modern applications. OpenResty Edge delivers industry-leading performance and scalability to meet the demanding needs of high-concurrency, high-load scenarios. It supports scheduling traffic for containerized applications, such as those running on K8s, and can manage a massive number of domains, making it easy to meet the needs of large websites and complex applications.

If you like this tutorial, please subscribe to this blog site and/or our YouTube channel. Thank you!

About The Author

Yichun Zhang (GitHub handle: agentzh) is the original creator of the OpenResty® open-source project and the CEO of OpenResty Inc.

Yichun is one of the earliest advocates and leaders of “open-source technology”. He has worked at many internationally renowned tech companies, including Yahoo! and Cloudflare. He is a pioneer of “edge computing”, “dynamic tracing”, and “machine coding”, with over 22 years of programming and 16 years of open-source experience. Yichun is well-known in the open-source space as the project leader of OpenResty®, adopted by more than 40 million global website domains.

OpenResty Inc., the enterprise software start-up founded by Yichun in 2017, has customers from some of the biggest companies in the world. Its flagship product, OpenResty XRay, is a non-invasive profiling and troubleshooting tool that significantly enhances and utilizes dynamic tracing technology. And its OpenResty Edge product is a powerful distributed traffic management and private CDN software product.

As an avid open-source contributor, Yichun has contributed more than a million lines of code to numerous open-source projects, including the Linux kernel, Nginx, LuaJIT, GDB, SystemTap, LLVM, Perl, etc. He has also authored more than 60 open-source software libraries.