Automating Kubernetes Gateway Node Lifecycle with OpenResty Edge

When a Kubernetes cluster triggers an autoscaling event, operations teams rarely get a traffic ingress that is ready to serve on its own. Instead, they face a backlog of node approval requests: dozens of newly started gateway instances sit idle, waiting for manual sign-off before they can accept any traffic. Engineers who have worked with cloud-native architectures know the pattern well. Manual intervention adds operational overhead, and it introduces unpredictable delays precisely when automated failure recovery is needed most. This is not an execution-level mistake. It is a fundamental flaw in the traditional gateway management model, which treats a static approval workflow as a prerequisite for system control. In a dynamically scheduled container environment, that human dependency becomes a major bottleneck to availability. Configuration drift accumulates quietly in the background, engineering cycles get burned on repetitive node onboarding and state reconciliation, and the elasticity benefits that Kubernetes promises are steadily eroded by manual process overhead.

This article examines three dimensions (node onboarding, multi-cluster management, and upstream layering) to show how OpenResty Edge eliminates this structural friction while staying fully aligned with Kubernetes.

The Gateway Bottleneck in the Kubernetes Era: When Static Management Models Collide with Dynamic Cloud-Native Environments

Kubernetes’s greatest promise is resilience and elasticity. Yet many enterprises, while reaping its benefits, run into an uncomfortable reality: the management model governing the gateway layer — the entry point for all traffic — remains rooted in the physical-machine era. This turns the gateway from a “guardian” into a bottleneck for the entire cloud-native stack.

Challenge 1: The “last mile” of elasticity. In Kubernetes, Pod scheduling, migration, restarts, and scaling are everyday occurrences. If every gateway node restart or scale-out requires manual approval from the operations team before the node can join the cluster, the elasticity that Kubernetes promises is effectively cancelled out by human process overhead. When fault self-healing kicks in or a traffic surge hits, a manual response window measured in minutes falls exactly where service availability is most fragile.

Challenge 2: Configuration entropy across multiple clusters. As businesses grow, enterprises inevitably end up with multiple Kubernetes clusters segmented by environment (dev/test/prod), region, or business unit. Without a unified control plane, gateway configurations, security policies, and routing rules across those clusters will drift apart over time. This inconsistency becomes a latent source of failures and a significant barrier to advanced capabilities such as cross-cluster disaster recovery and active-active architectures.

Challenge 3: Blurred ownership boundaries on shared platforms. When gateway configurations are co-maintained by a platform team and multiple business lines, the absence of clear scope boundaries means that a seemingly isolated change can ripple into another team’s upstreams or routes. During an incident, it becomes difficult to quickly answer: who was authorized to make the change, what exactly changed, and how far does the blast radius extend — driving up collaboration overhead and the risk of unintended side effects.

The root cause underlying all three challenges is the same: management models designed for static infrastructure break down systematically when applied to dynamic cloud-native environments. Because the gateway sits on the critical path of every request, an outdated management model there amplifies risk and propagates it to every service behind the gateway.

To address these challenges, OpenResty Edge offers a composable, engineering-friendly path: automatic gateway node onboarding built on pre-established trust with Kubernetes; a single control plane that manages connections to multiple clusters and supports cross-cluster upstream aggregation; and clear ownership boundaries on the upstream side through a distinction between application-scoped and global Kubernetes upstreams. In addition, Edge Admin can watch Service and Endpoints resources within a cluster and sync backend instance changes directly to upstreams. This service-discovery capability is fully independent of gateway node onboarding, so it can be adopted on its own or together with node onboarding, depending on your needs.
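To make the service-discovery idea concrete, here is a minimal sketch of turning a Kubernetes Endpoints object into an upstream server list and computing what changed between two snapshots. It relies only on the standard Endpoints layout (`subsets`, `addresses`, `notReadyAddresses`, `ports`). The function names `endpoints_to_servers` and `diff_servers` are hypothetical, and the code does not reflect how Edge Admin implements the sync internally.

```python
from typing import Dict, List, Tuple

Server = Tuple[str, int]


def endpoints_to_servers(endpoints: Dict, port_name: str = "") -> List[Server]:
    """Flatten a Kubernetes Endpoints object into sorted (ip, port) pairs.

    Only ready backends under `addresses` are used; entries under
    `notReadyAddresses` are ignored. An empty `port_name` keeps every port.
    """
    servers = set()
    for subset in endpoints.get("subsets") or []:
        ports = [
            p["port"]
            for p in subset.get("ports") or []
            if not port_name or p.get("name") == port_name
        ]
        for addr in subset.get("addresses") or []:
            for port in ports:
                servers.add((addr["ip"], port))
    return sorted(servers)


def diff_servers(old: List[Server], new: List[Server]) -> Tuple[List[Server], List[Server]]:
    """Return (added, removed) between two server lists."""
    old_set, new_set = set(old), set(new)
    return sorted(new_set - old_set), sorted(old_set - new_set)


# Two illustrative snapshots of the same Endpoints object (made-up addresses).
before = {"subsets": [{
    "addresses": [{"ip": "10.0.0.1"}, {"ip": "10.0.0.2"}],
    "ports": [{"name": "http", "port": 8080}],
}]}
after = {"subsets": [{
    "addresses": [{"ip": "10.0.0.2"}, {"ip": "10.0.0.3"}],
    "notReadyAddresses": [{"ip": "10.0.0.4"}],
    "ports": [{"name": "http", "port": 8080}],
}]}

added, removed = diff_servers(endpoints_to_servers(before), endpoints_to_servers(after))
print(added)    # [('10.0.0.3', 8080)]
print(removed)  # [('10.0.0.1', 8080)]
```

Computing a diff rather than replacing the whole list is one possible design choice: it keeps each upstream update proportional to the change in the cluster.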

Capability One: Putting Elasticity into Practice — Automated Lifecycle Management of Gateway Nodes

The limitations of traditional solutions: The traditional gateway node onboarding workflow — new node starts → requests to join → administrator approval → joins cluster — is unsustainable in a Kubernetes environment. It traps K8s’s elasticity capabilities behind a manual-approval bottleneck. This is not merely an operational burden; it directly undermines the ROI of your K8s investment. Enterprises pay for elasticity, yet fail to fully realize its value because the gateway itself becomes a human bottleneck.

OpenResty Edge’s solution: K8s cluster binding for truly zero-intervention self-healing and scaling.

Binding a gateway cluster to one or more Kubernetes clusters in OpenResty Edge Admin establishes a trust relationship upfront. From that point on, whenever an OpenResty Edge Node is deployed within a bound K8s cluster, Admin periodically detects all pending nodes, approves them automatically, and adds them to the corresponding gateway cluster, with no human intervention required.

┌─────────────────────────────────────────────────────────────────────────────────────────┐
│ K8s Cluster (Trust Relationship Established)                                            │
│                                                                                         │
│ Pod Start/Restart/Scale-out ──> Auto Register ──> Auto Approve ──> Join Gateway Cluster │
│                                                                                         │
└─────────────────────────────────────────────────────────────────────────────────────────┘
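The flow in the diagram can be sketched as a single approval pass over the pending-node queue. This is an illustrative model only: `PendingNode`, `GatewayCluster`, and `approve_pending` are hypothetical names, and the assumption that each pending node reports the K8s cluster it runs in is made for the example. Edge Admin's actual data model and approval logic are not shown in this article.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class PendingNode:
    node_id: str
    k8s_cluster: str  # assumption: the node reports which K8s cluster it runs in


@dataclass
class GatewayCluster:
    name: str
    bound_k8s_clusters: Set[str]  # K8s clusters trusted by this gateway cluster
    members: List[str] = field(default_factory=list)


def approve_pending(pending: List[PendingNode],
                    gateways: List[GatewayCluster]) -> List[PendingNode]:
    """Run one approval pass and return the nodes that still need manual review."""
    binding = {k8s: gw for gw in gateways for k8s in gw.bound_k8s_clusters}
    still_pending = []
    for node in pending:
        gateway = binding.get(node.k8s_cluster)
        if gateway is None:
            # No pre-established trust for this K8s cluster: leave it for a human.
            still_pending.append(node)
        elif node.node_id not in gateway.members:
            gateway.members.append(node.node_id)
    return still_pending


prod = GatewayCluster("prod-gateway", {"k8s-prod-east", "k8s-prod-west"})
queue = [
    PendingNode("edge-node-7f9c", "k8s-prod-east"),
    PendingNode("edge-node-lab1", "k8s-lab"),
]
remaining = approve_pending(queue, [prod])
print(prod.members)                    # ['edge-node-7f9c']
print([n.node_id for n in remaining])  # ['edge-node-lab1']
```

In this model, a scheduler would call `approve_pending` on a fixed interval. The pass is idempotent, so repeated runs do not add the same node twice.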

- Self-healing recovery drops from minutes to seconds: When K8s restarts a failed Pod, the new instance automatically takes over traffic — no engineer involvement needed. This compresses mean time to recovery (MTTR) from minutes (dependent on an engineer’s response) down to seconds (handled entirely by the system).

- Unlock the full ROI of your elasticity investment: Gateway instances scaled out by HPA (Horizontal Pod Autoscaler) begin serving traffic immediately, making burst-traffic response truly automatic and ensuring your K8s elasticity investment pays off in full.

- Lower operational overhead: Free your operations team from the repetitive, low-value work of playing approval robots, so they can focus on higher-impact initiatives.

Capability Two: From Siloed Management to Unified Control — The Strategic Value of Multi-Kubernetes Cluster Governance

The limitations of traditional approaches: When dealing with a multi-cluster environment, the most intuitive solution is to deploy a separate gateway management stack for each cluster. This may seem straightforward, but it quietly sows the seeds of configuration drift. Routing rules, timeout settings, and security policies across clusters gradually diverge through routine iterations — a discrepancy that often goes unnoticed until an incident surfaces it: “The configuration looks identical — so why is the behavior different?” More critically, this siloed approach keeps cross-cluster traffic scheduling, active-active deployments, disaster recovery, and other strategic capabilities perpetually on the architecture diagram, never making it into production.

The OpenResty Edge approach: a unified control plane with cross-cluster upstream aggregation.

K8s cluster connection details are centrally registered and managed in the Admin console. Configurable fields include cluster name, API address, port, SSL verification options, and authentication token, with fine-grained timeout control across connect, read, and send phases.
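As a rough illustration of what one registration record carries, the fields listed above can be modeled as a small validated structure. The class name, field names, and default timeout values are assumptions made for this sketch. They are not the Admin console's actual schema or defaults.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class K8sClusterConnection:
    name: str                        # cluster name shown in the console
    host: str                        # API server address
    port: int                        # API server port
    token: str                       # authentication token
    ssl_verify: bool = True          # SSL verification option
    connect_timeout_s: float = 5.0   # hypothetical default
    read_timeout_s: float = 5.0      # hypothetical default
    send_timeout_s: float = 5.0      # hypothetical default

    def __post_init__(self) -> None:
        if not self.name or not self.host:
            raise ValueError("name and host are required")
        if not 1 <= self.port <= 65535:
            raise ValueError(f"port out of range: {self.port}")
        if not self.token:
            raise ValueError("authentication token is required")
        for label in ("connect_timeout_s", "read_timeout_s", "send_timeout_s"):
            if getattr(self, label) <= 0:
                raise ValueError(f"{label} must be positive")

    @property
    def api_url(self) -> str:
        """Base URL of the cluster API server."""
        return f"https://{self.host}:{self.port}"


east = K8sClusterConnection(name="prod-east", host="10.1.2.3", port=6443,
                            token="example-token", read_timeout_s=10.0)
print(east.api_url)  # https://10.1.2.3:6443
```

Keeping the three timeouts as separate fields mirrors the connect, read, and send phases mentioned above, so a slow read path can be tuned without loosening the connect timeout.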

Building on this foundation, the capability that truly unlocks the value of a multi-cluster setup is cross-cluster upstream aggregation: a single upstream can simultaneously incorporate service instances from multiple, distinct K8s clusters. This gives the gateway layer a global, cross-cluster view — enabling it to monitor service health across all clusters and perform unified traffic distribution and scheduling.

- Enabling strategic high-availability architectures: Cross-cluster upstream aggregation is the foundational infrastructure that turns architectures like active-active data centers, geo-redundant disaster recovery, and seamless cloud migration from plans into production reality. When a primary cluster goes down or requires maintenance, the gateway can automatically and gracefully redirect traffic to a standby cluster.

- Eliminating configuration drift at the source: A unified control plane means all clusters draw from a single source of truth for configuration — eliminating at the architectural level the class of hard-to-diagnose, latent failures that arise when “everything looks the same, yet nothing behaves the same.”
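A minimal sketch of cross-cluster aggregation with primary/standby failover, under simplifying assumptions: health is tracked per cluster rather than per instance, and the names `Backend`, `aggregate`, and `eligible` are hypothetical. Real traffic distribution in OpenResty Edge is configured in Admin and is not modeled here.

```python
from dataclasses import dataclass
from typing import Dict, List, Set, Tuple


@dataclass(frozen=True)
class Backend:
    cluster: str
    ip: str
    port: int


def aggregate(per_cluster: Dict[str, List[Tuple[str, int]]]) -> List[Backend]:
    """Merge per-cluster (ip, port) lists into one upstream server pool."""
    return [
        Backend(cluster, ip, port)
        for cluster in sorted(per_cluster)
        for ip, port in per_cluster[cluster]
    ]


def eligible(pool: List[Backend], primary: str, healthy: Set[str]) -> List[Backend]:
    """Prefer backends in the primary cluster; fall back to any healthy cluster."""
    candidates = [b for b in pool if b.cluster in healthy]
    preferred = [b for b in candidates if b.cluster == primary]
    return preferred or candidates


pool = aggregate({
    "east": [("10.0.0.1", 8080)],
    "west": [("10.1.0.1", 8080), ("10.1.0.2", 8080)],
})

# Normal operation: only the primary cluster receives traffic.
print([b.ip for b in eligible(pool, "east", {"east", "west"})])  # ['10.0.0.1']

# Primary cluster down or under maintenance: traffic moves to the standby.
print([b.ip for b in eligible(pool, "east", {"west"})])  # ['10.1.0.1', '10.1.0.2']
```

Because both clusters live in one pool, failover becomes a filtering decision on a single upstream instead of a configuration change in a second gateway stack.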

Capability Three: Aligning with Organizational Structure — The Design Philosophy of a Two-Tier Upstream System

New challenges at organizational scale: When a K8s platform evolves from serving a single team into shared infrastructure spanning multiple business lines, configuration management runs into a classic organizational friction point. Shared services owned by the platform team — such as authentication and logging — end up mixed in the same configuration system alongside backend services owned by individual business teams, with no clear boundaries of ownership. A seemingly routine “configuration cleanup” can accidentally take down another team’s service. The root cause of incidents like these is not whether engineers make mistakes — it’s whether the system design leaves room for those mistakes to happen.

The OpenResty Edge approach: application-level and global upstreams that map directly to team ownership.

OpenResty Edge recognizes the natural layering of upstream services in large organizations and introduces a two-tier upstream system that aligns technical architecture with organizational structure.

| Dimension | Application-Level K8s Upstream | Global K8s Upstream |
|---|---|---|
| Owner | Business team | Platform / infrastructure team |
| Scope | Bound to a specific application or domain | Shared globally across applications |
| Typical Use Cases | Dedicated backend services for a business application | Platform-wide shared services |

- Reduce cross-team friction: Clear ownership boundaries let platform teams and business teams safely manage their own configurations independently, without risk of stepping on each other.

- Improve platform stability: By encoding ownership into the architecture itself, the system structurally prevents configuration overwrites, accidental deletions, and other incidents that arise from multi-team collaboration — making the shared platform more robust.

- Architecture that mirrors your organization: A mature platform tool should reflect and streamline the way your organization actually collaborates. The two-tier upstream system is a direct expression of that principle.
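One way to picture how scope can encode ownership is a small permission check over the two tiers. Everything in this sketch is hypothetical, including the `Upstream` and `Actor` types and the rule that only the platform team may change global upstreams. It illustrates the ownership split from the table above and is not OpenResty Edge's actual permission model.

```python
from dataclasses import dataclass
from typing import FrozenSet, Optional


@dataclass(frozen=True)
class Upstream:
    name: str
    app: Optional[str]  # owning application, or None for a global upstream


@dataclass(frozen=True)
class Actor:
    name: str
    is_platform: bool     # member of the platform / infrastructure team
    apps: FrozenSet[str]  # applications this actor owns


def can_modify(actor: Actor, upstream: Upstream) -> bool:
    """Global upstreams belong to the platform team; application-level
    upstreams belong to whoever owns the application they are bound to."""
    if upstream.app is None:
        return actor.is_platform
    return upstream.app in actor.apps


auth = Upstream("auth-service", app=None)                # global tier
cart = Upstream("cart-backend", app="shop.example.com")  # application tier

platform = Actor("platform-team", is_platform=True, apps=frozenset())
shop = Actor("shop-team", is_platform=False, apps=frozenset({"shop.example.com"}))

print(can_modify(shop, cart))      # True
print(can_modify(shop, auth))      # False
print(can_modify(platform, auth))  # True
print(can_modify(platform, cart))  # False
```

With a rule of this shape, a "configuration cleanup" run by one business team cannot reach a shared service or another team's backend, because the scope check rejects the change before it is applied.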

Summary

When evaluating a gateway for Kubernetes, it is worth asking three questions beyond simply “can it be deployed inside the cluster”: whether dynamic scaling scenarios still require manual node approval; whether multiple clusters can maintain a predictable, consistent view of routes and upstreams under a single control plane; and whether the system provides clear scope boundaries when shared across teams, to limit the blast radius of accidental changes.

OpenResty Edge addresses these areas as follows: cluster binding and automatic approval align node lifecycle management with the scaling cadence of the underlying infrastructure; centralized registration and cross-cluster upstream aggregation ground multi-cluster traffic scheduling in an auditable global configuration; and application-level versus global Kubernetes upstreams map team ownership boundaries directly onto the configuration model. Orthogonal to all of these is Service/Endpoints-based upstream discovery, which can be enabled independently or combined with the above as business needs dictate.

For hands-on verification against the Admin UI, refer to two existing tutorials:

- Managing Traffic to Kubernetes (K8s) Upstreams in OpenResty Edge: covers global K8s registration, preparing a read-only Service Account Token for Admin, creating a Kubernetes upstream and referencing it in “page rules,” and confirming that Endpoint changes are reflected in the forwarding targets.

- How to Implement Automatic Management of Gateway Servers in a Kubernetes Environment in OpenResty Edge: covers binding a target K8s cluster to the gateway cluster, enabling automatic approval, deploying gateway nodes inside the cluster via a manifest, and verifying that new Pod instances join the cluster without manual intervention after a Pod replacement.

This article focuses solely on the questions raised and the boundaries of each capability; for specific operational steps and commands, consult the two tutorials directly.

What is OpenResty Edge

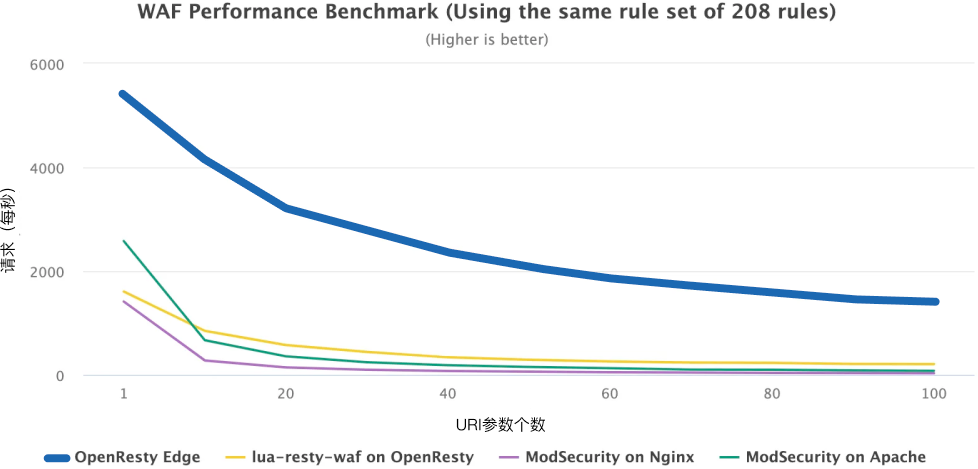

OpenResty Edge is our all-in-one gateway software for microservices and distributed traffic architectures. It combines traffic management, private CDN construction, API gateway, security, and more to help you easily build, manage, and protect modern applications. OpenResty Edge delivers industry-leading performance and scalability to meet the demanding needs of high-concurrency, high-load scenarios. It supports traffic scheduling for containerized applications, such as those running on K8s, and can manage a massive number of domains, making it easy to meet the needs of large websites and complex applications.

About The Author

Yichun Zhang (GitHub handle: agentzh) is the original creator of the OpenResty® open-source project and the CEO of OpenResty Inc.

Yichun is one of the earliest advocates and leaders of “open-source technology”. He has worked at internationally renowned tech companies such as Yahoo! and Cloudflare. He is a pioneer of “edge computing”, “dynamic tracing”, and “machine coding”, with over 22 years of programming and 16 years of open source experience. Yichun is well-known in the open-source space as the project leader of OpenResty®, adopted by more than 40 million global website domains.

OpenResty Inc., the enterprise software start-up founded by Yichun in 2017, counts some of the biggest companies in the world among its customers. Its flagship product, OpenResty XRay, is a non-invasive profiling and troubleshooting tool that significantly enhances and utilizes dynamic tracing technology. Its OpenResty Edge product is a powerful distributed traffic management and private CDN software product.

As an avid open-source contributor, Yichun has contributed more than a million lines of code to numerous open-source projects, including the Linux kernel, Nginx, LuaJIT, GDB, SystemTap, LLVM, and Perl. He has also authored more than 60 open-source software libraries.