Built-In DDoS Defense for OpenResty Edge—Without Another Appliance or a Second Ops Surface

OpenResty Edge ships with DDoS protection. It is not a standalone security product; it is part of the platform substrate and shares the same delivery and operations stack as the gateway, nodes, and Agents. Its core goal is to embed protection on the order of millions of PPS per machine directly in production, without compromising kernel integrity, by combining XDP eBPF+ with fully dynamic, complex defense policies that can evolve at runtime. This article explains the design of that stack, its multi-tier defense model, and its console capabilities. Before diving in, we briefly revisit the core engineering problem it is meant to solve.

1. The “impossible triangle” of DDoS defense

When DDoS defense moves from a perimeter bolt-on to part of the system hot path, engineering teams face a long-standing “impossible triangle”—three goals that are hard to satisfy at once:

- Peak throughput performance

- Kernel and ecosystem compatibility

- Long-term engineering maintainability

Existing approaches usually satisfy one or two, and pay in the third dimension.

1.1 Kernel-bypass style: high performance at the cost of system integrity

To reach extreme performance under high-PPS attacks, one family of designs bypasses the kernel network stack and pushes defense logic right next to the NIC. The performance problem is solved, but the price is:

- The network path splits into two separate stacks

- Existing monitoring, diagnostics, and operations tools stop working

- The defense system itself becomes a hard-to-touch, special-case component

A one-time performance gain is paid for with long-term system complexity and operational risk.

1.2 Pure in-kernel: compatible but with a clear performance ceiling

Another family keeps defense strictly inside existing kernel frameworks—no change to the network architecture, no extra runtime, and full reuse of mature kernel mechanisms. That is easier to accept in engineering terms, but the processing path is fixed, and so is the performance ceiling.

Under truly high-frequency attacks, CPU quickly becomes the bottleneck; the defense stack may fail before the attack is fully identified.

1.3 Hybrid: plausible in theory, hard to tame in practice

In theory, the ideal is to place defense far enough forward without breaking system integrity. In practice, that path usually implies:

- Heavy dependence on OS versions and features

- Constrained programming models and execution environments

- Deploy, upgrade, and rollback procedures that resist standardization

Technically doable, but hard to replicate in engineering practice—and harder still to sustain for years at production scale. Once the system is stable, teams often keep their distance: nobody wants to touch a high-risk, high-maintenance core component.

2. OpenResty Edge: designing for all three goals

Our design philosophy is not about pushing the laws of physics; it is an engineering stance: without compromising kernel integrity, maximize use of the kernel’s cutting-edge data-path processing power. For each vertex of the impossible triangle, our principles answer as follows:

| Pain | Principle | How |
|---|---|---|
| Performance ceiling | High performance is the default, not the exception | Built on kernel data-plane capabilities such as XDP eBPF+, layered with fully dynamic complex defense policies; the system runs on the fastest path by default; you do not switch into a special mode that trades away compatibility just to handle an attack |
| System integrity | Native to the kernel, stay compatible | The system remains a standard Linux kernel, with no bypass and no exclusive takeover; it stays fully compatible with the existing network stack and operations toolchain |
| Engineering maintainability | A production-grade engineering platform | Our in-house implementation stack provides a stable, manageable, programmable engineering environment, rather than merely exposing raw low-level kernel interfaces |

The question shifts from “can we go faster?” to whether single-machine million-scale PPS throughput together with fully dynamic complex defense policies can be deployed safely and reliably in production, and kept easy to manage and maintain.

2.1 “Four-tier joint defense”: from global baseline to a single NIC

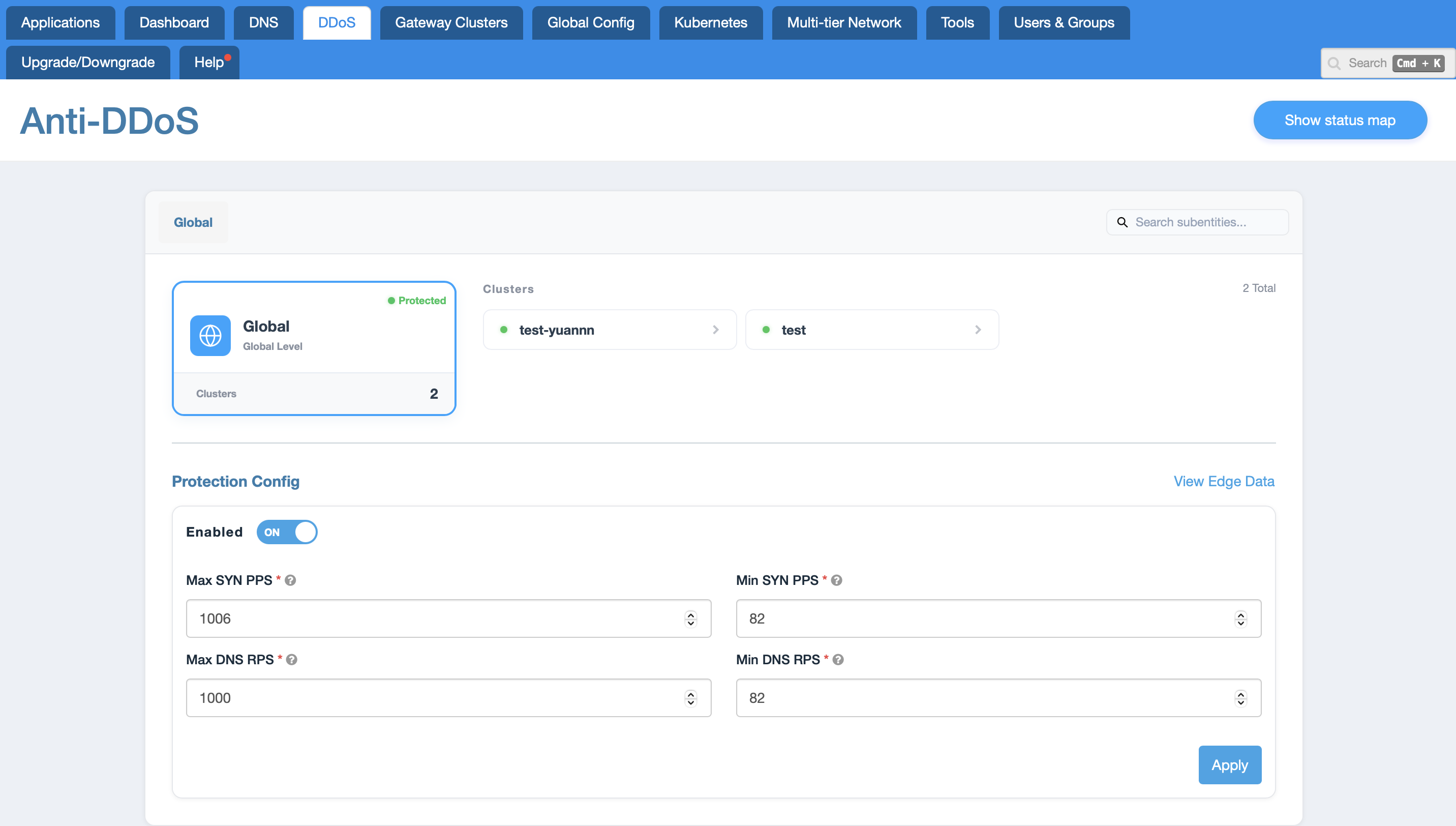

OpenResty Edge organizes defense settings into four top-down tiers aligned with real topology, so large enterprises can separate responsibilities and administrative domains:

- Global: Set default upper- and lower-bound baselines for SYN PPS, DNS RPS, and similar metrics across the entire Edge network—a safety net for all downstream entities. Newly attached clusters inherit this tier’s baseline protection until individually tuned.

- Cluster: Isolate by workload or geography. For example, tune only a test cluster (such as `test-yuannn`) to match real traffic volume without affecting other clusters.

- Node: Target hardening for a single machine in the cluster. For a relatively underpowered server or a node under targeted fire (e.g. `jenkins-slave-2`), tighten or relax policy at this tier alone.

- Interface: Push defense down to a single NIC. In hybrid cloud or containerized environments, apply limits to specific interfaces (such as the `cni0`-style container NIC common in Kubernetes) to isolate and protect a slice of the microservice network.

2.2 Inheritance and override

When multiple tiers coexist, OpenResty Edge provides an inheritance mechanism to reduce duplicate configuration and misconfiguration risk:

- At tiers such as cluster or node, you can choose `Inherit` to adopt the parent tier’s defense policy wholesale, without re-entering every field.

- When a given node or interface needs an exception, switch it to modes such as `Enabled` or `Disabled` to override. The result is an operational model of one global default, with local customization where needed.
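The inheritance model above can be pictured as a walk up the tier chain. The following Python sketch is illustrative only: the `Tier` class, the `effective_policy` helper, the `syn_max_pps` field, and the numeric limits are hypothetical and do not reflect OpenResty Edge's actual data model or API. It assumes that `Inherit` defers to the parent tier and that `Enabled` or `Disabled` stops the walk at that tier.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Tier:
    """One level in a hypothetical global -> cluster -> node -> interface chain."""
    name: str
    mode: str = "Inherit"                      # "Inherit", "Enabled", or "Disabled"
    limits: dict = field(default_factory=dict)
    parent: Optional["Tier"] = None


def effective_policy(tier: Tier) -> dict:
    """Walk up the chain until some tier stops inheriting."""
    current = tier
    while current is not None:
        if current.mode != "Inherit":
            enabled = current.mode == "Enabled"
            return {
                "source": current.name,
                "enabled": enabled,
                "limits": dict(current.limits) if enabled else {},
            }
        current = current.parent
    # No tier in the chain set a policy; this sketch treats that as "off".
    return {"source": None, "enabled": False, "limits": {}}


# Illustrative values only.
global_tier = Tier("global", "Enabled", {"syn_max_pps": 100_000})
cluster = Tier("test-yuannn", "Inherit", parent=global_tier)
node = Tier("jenkins-slave-2", "Enabled", {"syn_max_pps": 20_000}, parent=cluster)
nic = Tier("cni0", "Inherit", parent=node)

print(effective_policy(cluster))   # resolved from the global tier
print(effective_policy(nic))       # resolved from the node-level override
```

In this sketch the cluster picks up the global baseline, while the interface picks up its node's tighter override, which mirrors the "one global default, local customization" model.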

2.3 Fine-grained SYN and DNS flood controls

At interface scope and in the corresponding configuration panels, you can exercise fine-grained control over attack vectors, for example:

- SYN flood: In addition to global rate limits (min/max PPS), configure SYN rate per IP (SYN/IP/s) and ACK rate per IP (ACK/IP/s), and monitor and rate-limit specific ports (e.g. port 80).

- DNS flood: Cap aggregate queries per second (RPS) and queries per IP (DNS/IP/s); limit where policies take effect using Domains and IPv4 ranges to curb amplification and abuse with spoofed sources.

- Auto detect attack mode: With one-click enablement, the system, informed by live traffic models, decides whether to enter a defense-active state—aligning with the fully automated attack–defense closed loop described later.
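As a way to reason about per-IP limits such as SYN/IP/s or DNS/IP/s, here is a minimal fixed-window counter in Python. It is a conceptual sketch only: the product enforces limits in the kernel data path, and the class name, the one-second window scheme, and the threshold used here are assumptions for illustration, not the actual algorithm.

```python
from collections import defaultdict


class PerIpRateLimiter:
    """Fixed one-second window counter keyed by source IP (illustrative)."""

    def __init__(self, max_per_ip_per_sec: int):
        self.max_per_ip_per_sec = max_per_ip_per_sec
        self.window = None                  # the whole second currently being counted
        self.counts = defaultdict(int)      # packets seen per IP in that second

    def allow(self, src_ip: str, now: float) -> bool:
        """Return True to pass the packet, False to drop it."""
        second = int(now)
        if second != self.window:
            # A new window started: forget the previous second's counters.
            self.window = second
            self.counts.clear()
        self.counts[src_ip] += 1
        return self.counts[src_ip] <= self.max_per_ip_per_sec


limiter = PerIpRateLimiter(max_per_ip_per_sec=3)
decisions = [limiter.allow("203.0.113.7", now=10.0 + i * 0.1) for i in range(5)]
print(decisions)                                   # first three pass, the rest are dropped
print(limiter.allow("198.51.100.9", now=10.6))     # a different source is unaffected
print(limiter.allow("203.0.113.7", now=11.0))      # a new second resets the count
```

A fixed window is the simplest scheme to read; a token bucket or sliding window would smooth bursts at window boundaries, at the cost of more state per source.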

3. Performance is an engineering fact, not a slogan

Performance should be a quantifiable, reproducible engineering fact—not marketing copy.

3.1 Baseline performance and cost-effectiveness

On a modest cloud instance (e.g. AWS t3.small), without dedicated hardware or unusual system tuning, we have measured stable handling of roughly 170k–180k PPS under small-packet UDP/TCP SYN flood workloads.

That figure shows how optimizing the data path with XDP eBPF+ and attaching at the right hooks in the kernel yields very high performance density on commodity hardware, avoiding the traditional pattern of horizontal scale-out with many instances or buying expensive purpose-built appliances. Customer workloads still use the operating system kernel’s ordinary network protocol stack for normal traffic—unlike many NIC-centric bypass designs where applications or edge services abandon the kernel stack to chase mitigation throughput. The gain comes from architecture plus tight engineering integration with fully dynamic complex defense policies—not from dedicated hardware.

3.2 Why headroom matters

From observations of real attacks in production, typical attack traffic peaks around 30–40 Mbps. The configuration above sustains on the order of 100 Mbps (corresponding to roughly 180k PPS of small packets).

That gap—performance headroom—is essential. It means that during real attacks the system stays far below its processing ceiling, leaving ample buffer for monitoring, decision-making, and policy adjustment—predictable behavior and room to respond calmly.
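The relationship between the PPS and Mbps figures can be checked with simple arithmetic. The sketch below assumes 64-byte minimum-size Ethernet frames, which the measurements above do not state explicitly; other small-packet sizes shift the result.

```python
def pps_to_mbps(pps: int, frame_bytes: int = 64) -> float:
    """Convert packets per second to megabits per second for a fixed frame size."""
    return pps * frame_bytes * 8 / 1_000_000


capacity_mbps = pps_to_mbps(180_000)      # the 64-byte frame size is an assumption
print(f"{capacity_mbps:.2f} Mbps")        # 92.16 Mbps, i.e. on the order of 100 Mbps

# Headroom relative to the 30-40 Mbps attack peaks cited in the text.
for attack_mbps in (30, 40):
    print(f"{attack_mbps} Mbps attack -> {capacity_mbps / attack_mbps:.1f}x headroom")
```

Under that assumption the measured capacity sits at roughly two to three times the typical attack peak, which is the buffer the paragraph above refers to.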

3.3 Scalability

On higher-spec hardware, performance scales roughly linearly with CPU core count. In the lab we have validated single-machine throughput on the order of millions of PPS. This implies:

- Defense capacity can grow smoothly with hardware generations, protecting long-term investment.

- Even larger attacks call for incremental scaling, not a rip-and-replace architecture.

4. Automation is a prerequisite, not a perk

The effectiveness of DDoS defense depends not only on peak performance, but also on the speed and accuracy of response.

4.1 Fully automated attack–defense transitions

The system is designed to deliver a closed loop of automated defense:

- Automatically detect attack traffic patterns and activate defense policies.

- In the console, Auto detect attack mode can be enabled for specific tiers so the system judges and intervenes based on live traffic characteristics.

- When the attack ends, policies are rolled back automatically and normal paths resume.

This delivers measurable benefits: significantly lower MTTR (mean time to respond), and it removes the delay and false positives that manual steps can introduce, turning security response into deterministic system behavior.
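One way to picture the automated enter-and-exit transition is a two-threshold (hysteresis) state machine. The Python sketch below is an assumption-laden illustration: the class name, the thresholds, and the "consecutive calm samples" rule are hypothetical, and the product's real detection relies on live traffic models whose internals are not described here.

```python
class AttackModeDetector:
    """Enter defense at or above `enter_pps`; leave after sustained traffic below `exit_pps`."""

    def __init__(self, enter_pps: int, exit_pps: int, calm_samples: int = 3):
        if exit_pps >= enter_pps:
            raise ValueError("exit_pps must be lower than enter_pps")
        self.enter_pps = enter_pps
        self.exit_pps = exit_pps
        self.calm_samples = calm_samples    # consecutive quiet samples needed to stand down
        self.under_attack = False
        self._calm = 0

    def observe(self, pps: int) -> bool:
        """Feed one traffic sample; return whether defense is active afterwards."""
        if not self.under_attack:
            if pps >= self.enter_pps:
                self.under_attack = True
                self._calm = 0
        else:
            # Count quiet samples; any noisy sample restarts the count.
            self._calm = self._calm + 1 if pps < self.exit_pps else 0
            if self._calm >= self.calm_samples:
                self.under_attack = False
        return self.under_attack


detector = AttackModeDetector(enter_pps=50_000, exit_pps=10_000)
samples = [2_000, 80_000, 30_000, 5_000, 4_000, 3_000, 2_000]
states = [detector.observe(s) for s in samples]
print(states)   # [False, True, True, True, True, False, False]
```

Separate enter and exit thresholds keep the state from flapping when traffic hovers near a single limit, which is one reason automated rollback can be made predictable.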

4.2 Systematic policy decisions

The built-in traffic analytics engine distinguishes attack vectors (such as abusive hostname requests and CC-style floods) and automatically selects appropriate defenses. Its core value is this: moving the decision of whether to block and how to block from human, expert-driven firefighting to repeatable, system-embedded decision logic.

Defense is baked into the platform, reducing reliance on individuals and improving stability for the whole team.

4.3 Kernel-level malicious-IP bans: autonomous per-machine blocking, not hand-written iptables orchestration

Kernel-level banning of abusive source IPs is per-machine behavior: the deny list is not replicated cluster-wide as one identical global blacklist. On each machine, the Agent observes attacks as seen on that host; built-in platform logic automatically decides which source IPs to block and applies those rules in the local kernel. Different nodes can legitimately carry different blocked sets, depending on the attack surfaces each sees.

Meanwhile, policy is still centrally published from the Console: protection thresholds, toggles, and tier-wide orchestration still flow through Agents that automatically fetch changes, reconcile versions, and activate configuration. What this subsection clarifies is that exactly which IPs are blocked at any moment is decided on each machine from local telemetry—the Console does not push one fleet-wide blocklist.

That still contrasts with stacking iptables (or nftables) rules by hand: there is no SSH marathon to tweak chains, no fragile one-off scripts, and no runbooks that drift away from what is actually configured under /etc. Blocking decisions and kernel enforcement close the loop locally inside the product, while the Console still provides unified observability and audit, in contrast to the brittle model of manually curating firewall rules.
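The split between centrally published thresholds and locally decided ban sets can be sketched as follows. Everything here is hypothetical: the `NodeBanList` class, the offense-count rule, and the threshold value are stand-ins chosen for illustration, and real enforcement happens in each machine's kernel rather than in Python.

```python
from collections import Counter


class NodeBanList:
    """Per-node deny list driven only by what this node observes (illustrative)."""

    def __init__(self, node: str, ban_threshold: int):
        self.node = node
        self.ban_threshold = ban_threshold   # stands in for centrally published policy
        self.offenses = Counter()
        self.banned = set()

    def record_offense(self, src_ip: str) -> None:
        """Count one locally observed violation; ban once the threshold is reached."""
        self.offenses[src_ip] += 1
        if self.offenses[src_ip] >= self.ban_threshold:
            self.banned.add(src_ip)


# The same published threshold, but two different local views of traffic.
node_a = NodeBanList("node-a", ban_threshold=3)
node_b = NodeBanList("node-b", ban_threshold=3)

for _ in range(3):
    node_a.record_offense("203.0.113.7")
node_b.record_offense("203.0.113.7")          # seen once on node-b: not enough to ban
for _ in range(4):
    node_b.record_offense("198.51.100.9")

print(node_a.banned)   # only the source that hit node-a
print(node_b.banned)   # a different set, decided independently
```

Both nodes apply the same policy, yet they end up with different blocked sets because each decides from its own telemetry.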

5. Observability: transparency builds trust

Any system on the core data path that behaves as a “black box” is itself a risk. Our design treats observability as a first-class concern.

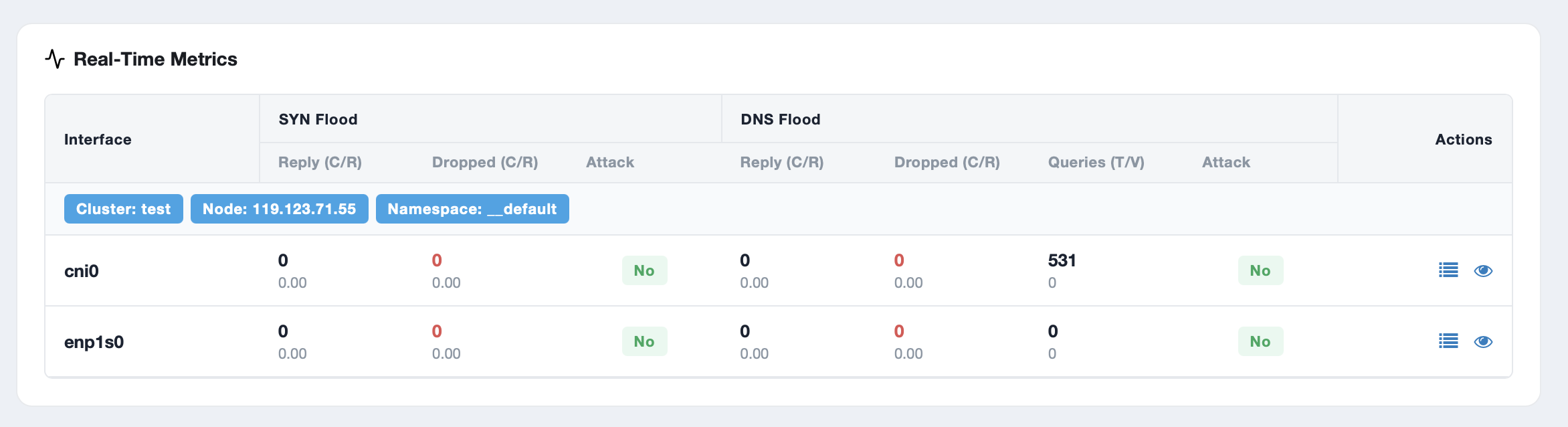

Real-time telemetry includes:

- Attack status (yes/no)

- Identified attack type

- Live and cumulative packet drop and pass-through counts

- Kernel-side implementation health and resource usage

Below each tier’s defense settings in OpenResty Edge, an embedded Real-Time Metrics panel lets you compare policy and impact in one view: SYN- and DNS-related traffic for the current tier, such as Reply pps (replies per second), Dropped pps (drops per second), and states like Not Under Attack / Under Attack—so mitigation effects are visible immediately, not hidden behind a black-box security module.

This data ensures:

- Auditable policies: every defensive action is backed by evidence.

- Traceable troubleshooting: a single, reliable factual basis for diagnosis.

- Cross-team alignment: security, networking, and business stakeholders share a common vocabulary of facts.

6. Conclusion: a built-in, adaptive defense stack

The scale and complexity of network attacks keep increasing. The real architectural risk is often not the attack itself, but the heterogeneous bolt-on introduced to counter it—something alien to the rest of the stack—or brittle processes that still depend heavily on humans at the worst moment. We are fully aware that a security system with punitive operational overhead will not be accepted by engineering and operations teams. Delivering multi-tier policies as OpenResty Edge built-in capabilities is how we meet performance, compatibility, and operability inside a single product—without stacking another standalone “DDoS box” or a parallel operations surface.

- Console + Agent: Configure all policies in a centralized Console; Agents on each node pull and synchronize via API—no logging into servers one by one for manual changes.

- Progressive adoption: Start with non-core workloads or a single node as a pilot, validate results, then roll out to more servers and business lines in a smooth, controlled way.

- Complex-network fit: With network-namespace support, it works well in containerized environments (e.g. Docker, Kubernetes) or multi-tenant virtualization, applying independent defense policies per network interface.

An ideal DDoS defense architecture is a capability that integrates seamlessly, runs autonomously, and is robust in its own right. After deployment, technical leaders should be able to trust it to run on its own—not to dominate the on-call roster as a constant high-priority alert source. That is the core engineering problem this architecture sets out to solve.

About The Author

Yichun Zhang (GitHub handle: agentzh) is the original creator of the OpenResty® open-source project and the CEO of OpenResty Inc.

Yichun is one of the earliest advocates and leaders of “open-source technology”. He has worked at internationally renowned tech companies such as Cloudflare and Yahoo!. He is a pioneer of “edge computing”, “dynamic tracing” and “machine coding”, with over 22 years of programming and 16 years of open source experience. Yichun is well-known in the open-source space as the project leader of OpenResty®, adopted by more than 40 million global website domains.

OpenResty Inc., the enterprise software start-up founded by Yichun in 2017, has customers from some of the biggest companies in the world. Its flagship product, OpenResty XRay, is a non-invasive profiling and troubleshooting tool that significantly enhances and utilizes dynamic tracing technology. And its OpenResty Edge product is a powerful distributed traffic management and private CDN software product.

As an avid open-source contributor, Yichun has contributed more than a million lines of code to numerous open-source projects, including the Linux kernel, Nginx, LuaJIT, GDB, SystemTap, LLVM, and Perl. He has also authored more than 60 open-source software libraries.